Recruiting developers in 2026 means prioritizing privacy. Developers now demand greater transparency, consent-based data handling, and control over their personal information. With stricter regulations like GDPR, CCPA, and the EU AI Act, recruiters must adapt or face legal and reputational risks. Here's what you need to know:

- Transparency: Clearly explain what data is collected, why, and who will access it.

- Consent: Use opt-in systems for data sharing and allow candidates to withdraw consent anytime.

- Data Minimization: Only collect essential information and delete it promptly after use.

- Avoid Intrusive Practices: No data scraping or hidden collection; focus on ethical tech recruitment practices.

Figure: Developer Privacy Expectations in Recruitment 2026: Key Statistics

What Privacy Do Developers Expect During Recruitment?

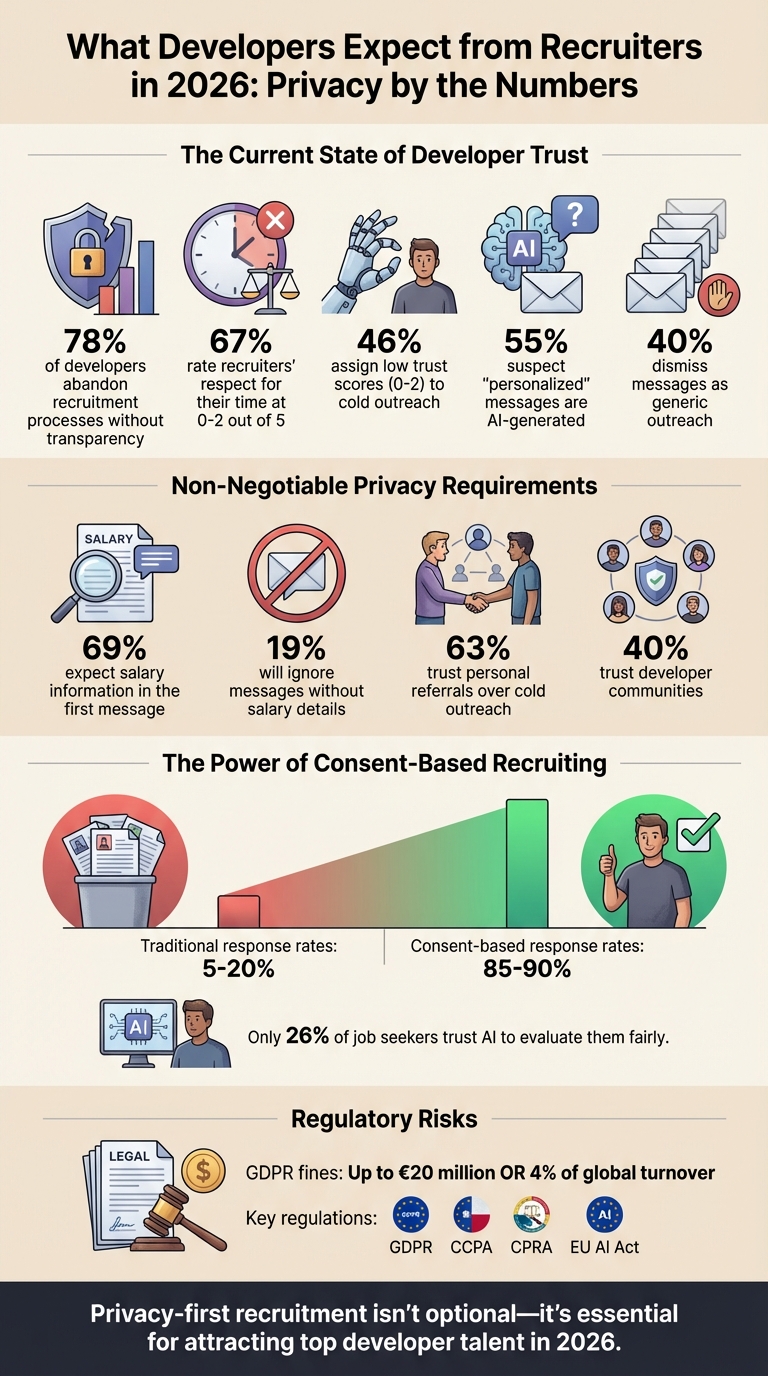

Developers in 2026 expect genuine control over their personal data, not just surface-level compliance. They want recruiters to clearly outline what information is being collected, why it’s necessary, and who will have access to it - before any data is shared. Without this level of transparency, a staggering 78% of developers walk away from recruitment processes entirely.

This push for transparency and consent-first systems reflects a broader trend: consent has become the cornerstone of data usage in AI-powered recruiting. Developers want granular choices over how their data is used, along with an easy way to revoke consent. This isn’t just about meeting legal requirements; it’s about building trust.

"Consent is the last reliable signal left" in AI-driven recruiting, according to Didomi's CRO.

The numbers speak volumes: 67% of developers rate recruiters’ respect for their time at a dismal 0–2 out of 5, and 46% assign similarly low trust scores to cold outreach. They also expect upfront salary information - 69% believe it should be included in the first message - along with clear job details and no hidden data collection. Let’s explore these expectations through three key principles: transparency, consent, and non-intrusiveness.

Transparency in Data Collection

Clear communication about data collection is non-negotiable. Developers expect to know upfront what personal information is being gathered - such as resumes, GitHub activity, or contact details - and the exact purpose behind each data point. For instance, employers must disclose AI use in candidate screening to comply with Illinois regulations and provide CCPA-compliant notices to California-based applicants.

Practical steps include detailed privacy notices at the start of the application process, visual data flow maps showing which HR systems and vendors handle the data, and real-time dashboards that track access. Developers also want to know how long their data will be retained and who will review it, ensuring they feel fully informed.

Consent-Based Data Sharing

Explicit, opt-in consent is essential before sharing developer data with third parties, such as background check providers or partner agencies. This approach not only fosters trust but also reduces candidate drop-off rates and minimizes the risk of legal penalties. Developers prefer unbundled consent options - separate checkboxes for each type of data use - rather than a blanket "agree to all" button. They also expect the ability to withdraw consent at any time without consequences.
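To make unbundled consent concrete, here is a minimal Python sketch of a per-purpose consent record. The purpose names (`share_with_hiring_team`, `background_check`, `talent_pool`) and the `ConsentRecord` class are hypothetical; a real system would persist this in your ATS or consent-management tool. Each purpose starts unchecked, withdrawal affects only the purpose being withdrawn, and every change is appended to a history list that can double as a consent audit trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical purposes; each gets its own checkbox instead of one "agree to all".
PURPOSES = ("share_with_hiring_team", "background_check", "talent_pool")

@dataclass
class ConsentRecord:
    candidate_id: str
    # Nothing is pre-checked: every purpose starts as not granted.
    grants: dict = field(default_factory=lambda: {p: False for p in PURPOSES})
    # Append-only (timestamp, purpose, granted) events, usable as an audit trail.
    history: list = field(default_factory=list)

    def _set(self, purpose: str, granted: bool) -> None:
        if purpose not in self.grants:
            raise ValueError(f"unknown purpose: {purpose}")
        self.grants[purpose] = granted
        self.history.append((datetime.now(timezone.utc), purpose, granted))

    def grant(self, purpose: str) -> None:
        self._set(purpose, True)

    def withdraw(self, purpose: str) -> None:
        # Withdrawing is one call, like granting, and leaves other purposes untouched.
        self._set(purpose, False)

    def allows(self, purpose: str) -> bool:
        return self.grants.get(purpose, False)

record = ConsentRecord("cand-123")
record.grant("share_with_hiring_team")
record.grant("background_check")
record.withdraw("background_check")
print(record.allows("share_with_hiring_team"), record.allows("background_check"))  # True False
```

Keeping consent as separate flags per purpose, rather than a single boolean, is what lets a candidate say yes to one use and no to another.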

Platforms like daily.dev Recruiter have adopted double opt-in systems, requiring developers to explicitly approve before their profiles are shared with hiring teams. This transforms recruiting from impersonal cold outreach into a verified, permission-based process.
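A double opt-in gate can be expressed as a check that requires two separate approvals. The sketch below is not daily.dev Recruiter's actual implementation; `Developer`, `IntroRequest`, and `can_share_profile` are hypothetical names used only to illustrate the rule that a profile stays private unless the developer has opted into matching and has also approved this specific request.

```python
from dataclasses import dataclass
from enum import Enum

class RequestStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    DECLINED = "declined"

@dataclass
class Developer:
    dev_id: str
    opted_in_to_matching: bool  # first opt-in: agreed to be matched with roles at all

@dataclass
class IntroRequest:
    developer: Developer
    job_id: str
    # Second opt-in: approval of this specific introduction, pending by default.
    status: RequestStatus = RequestStatus.PENDING

def can_share_profile(req: IntroRequest) -> bool:
    """Share only when both opt-ins exist; every other state keeps the profile private."""
    return req.developer.opted_in_to_matching and req.status is RequestStatus.APPROVED

dev = Developer("dev-42", opted_in_to_matching=True)
req = IntroRequest(dev, "job-7")
print(can_share_profile(req))  # False: not yet approved
req.status = RequestStatus.APPROVED
print(can_share_profile(req))  # True
```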

Avoiding Intrusive Practices

Beyond transparency and consent, recruiters must steer clear of invasive methods. Developers firmly oppose practices like automatic data scraping, hidden data collection, and overly intrusive background checks. Recruiters should collect only the data candidates have explicitly agreed to share, disable auto-scraping features in developer hiring platforms and applicant tracking systems (ATS), and notify candidates whenever their data is sourced.

Conducting Data Protection Impact Assessments for high-risk AI tools and incorporating human oversight where required by law are additional safeguards. Vendor contracts that include audit rights and bias testing help hold vendors to your data minimization and fairness standards. Dark patterns - like pre-checked consent boxes or vague privacy notices - erode trust instantly, and that trust is already thin: 55% of developers suspect that even “personalized” recruiter messages are generated by AI. Authenticity and clear communication are the only ways to rebuild trust in these interactions.

How to Handle Candidate Data Responsibly

Managing candidate data responsibly starts with transparency and privacy safeguards. Begin by documenting every point where candidate information enters your system. Identify how data flows in - whether through your Applicant Tracking System (ATS), emails, or recruiting platforms - and track what is collected, such as resumes, interview notes, or GitHub profiles. Determine who has access to this data and establish clear timelines for deletion. For instance, ensure outdated emails or spreadsheets are purged once the hiring process concludes.
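One way to document these entry points is a small data inventory, with one entry per source listing what is collected, who can access it, and when it is deleted. This Python sketch uses hypothetical sources and illustrative retention values (180 days is roughly six months); `incomplete_sources` flags any source that lacks an access list or a deletion timeline.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DataInventoryEntry:
    source: str          # where data enters: ATS, email, a recruiting platform
    data_items: tuple    # what is collected from this source
    access_roles: tuple  # who is allowed to see it
    retention_days: int  # days after the hiring process closes before deletion

# Hypothetical example inventory; retention values are illustrative only.
INVENTORY = [
    DataInventoryEntry("ATS", ("resume", "interview_notes"), ("recruiter", "hiring_manager"), 180),
    DataInventoryEntry("email", ("contact_details",), ("recruiter",), 30),
    DataInventoryEntry("sourcing_platform", ("github_profile",), ("recruiter",), 180),
]

def incomplete_sources(entries):
    """Return sources that have no access list or no positive deletion timeline."""
    return [e.source for e in entries if not e.access_roles or e.retention_days <= 0]

print(incomplete_sources(INVENTORY))  # []
```

A stray spreadsheet that nobody owns and nobody deletes would show up here as an entry with an empty access list or a zero retention period.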

Next, streamline data collection by using standardized intake forms. These forms help limit the collection of unnecessary sensitive information. For example, instead of asking for a full birth date, you can simply confirm if a candidate is over 18. Similarly, requesting only the city or country of residence is often sufficient for relocation assessments. Centralize interview feedback in a secure, access-controlled system rather than leaving it scattered across personal inboxes.
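A standardized intake form can enforce minimization in code by accepting only an allowlist of fields. In this hypothetical schema, a yes/no `confirms_over_18` replaces the birth date and `city`/`country` replace a full address; anything else submitted is dropped and reported, so you can fix whichever form sent it.

```python
# Hypothetical standard intake schema: field name -> expected type.
INTAKE_FIELDS = {
    "name": str,
    "email": str,
    "confirms_over_18": bool,  # yes/no answer instead of a full birth date
    "city": str,               # city or country is usually enough for relocation
    "country": str,
}

def clean_intake(submission: dict) -> tuple:
    """Keep only schema fields (type-checked) and report everything that was dropped."""
    cleaned = {}
    for name, expected in INTAKE_FIELDS.items():
        if name in submission:
            value = submission[name]
            if not isinstance(value, expected):
                raise TypeError(f"{name} must be {expected.__name__}")
            cleaned[name] = value
    dropped = sorted(set(submission) - set(INTAKE_FIELDS))
    return cleaned, dropped

cleaned, dropped = clean_intake({
    "name": "Ada",
    "email": "ada@example.com",
    "confirms_over_18": True,
    "birth_date": "1990-05-01",
    "country": "UK",
})
print(dropped)  # ['birth_date']
```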

Following GDPR and CCPA Best Practices

To comply with GDPR, establish a lawful basis for collecting and processing each piece of candidate data. For example, consent is necessary for adding candidates to talent pools for future opportunities, while contractual necessity applies when handling identification, tax, or visa information during the offer stage. Legitimate interest can justify data use during early screening or platform security checks. Non-compliance could lead to fines of up to €20 million or 4% of annual global turnover, whichever is higher.
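The lawful-basis examples above can be recorded as a lookup table that every processing step consults. This sketch uses hypothetical purpose names; it records documented choices and does not decide whether a basis is legally valid (relying on legitimate interest, for instance, still requires your own balancing assessment).

```python
from enum import Enum

class LawfulBasis(Enum):
    CONSENT = "consent"
    CONTRACT = "contract"
    LEGITIMATE_INTEREST = "legitimate interest"

# Hypothetical purpose names mapped to the bases described above.
BASIS_BY_PURPOSE = {
    "talent_pool": LawfulBasis.CONSENT,
    "offer_stage_documents": LawfulBasis.CONTRACT,  # ID, tax, or visa details
    "early_screening": LawfulBasis.LEGITIMATE_INTEREST,
    "platform_security": LawfulBasis.LEGITIMATE_INTEREST,
}

def may_process(purpose: str, has_consent: bool) -> bool:
    """Block purposes with no documented basis; consent-based ones also need consent on file."""
    basis = BASIS_BY_PURPOSE.get(purpose)
    if basis is None:
        return False
    if basis is LawfulBasis.CONSENT:
        return has_consent
    return True

print(may_process("talent_pool", has_consent=False))  # False
```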

Under CCPA, California residents have the right to know what data is collected and can opt out of data sales. To meet these requirements, ensure third-party vendors - like background check services or ATS providers - sign Data Processing Agreements that define data access, usage, and retention periods. Keep detailed records of processing activities and maintain audit trails for consent.

Using Data Minimization Strategies

"Data minimization is simple: collect only what you need, when you need it." - Technical Talent Group

Adopt this principle across your hiring process. For example, only request social media handles when they are relevant and after obtaining explicit consent. Avoid asking about marital or family status, as these details have no bearing on a candidate's qualifications. While EEOC recordkeeping rules require employers to retain certain personnel records for a set period, this doesn’t justify keeping unnecessary data indefinitely.

Third-party services, such as staffing agencies or background check providers, should only access data essential to their role, with clear retention limits outlined in writing. When using AI resume parsing and screening tools, ensure vendors follow zero-retention policies and avoid training public models on candidate data. This is particularly important as only 26% of job seekers trust AI to evaluate them fairly, making responsible data handling a competitive advantage.
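Limiting third-party access can be enforced at the point where data leaves your system. The sketch below assumes hypothetical vendor terms (allowed fields and a retention limit per vendor, as a written agreement might specify) and builds a payload containing only those fields plus a `delete_by` date.

```python
from datetime import date, timedelta

# Hypothetical terms from written vendor agreements: allowed fields and retention limit.
VENDOR_TERMS = {
    "background_check": {"fields": {"name", "email", "country"}, "retention_days": 30},
    "staffing_agency": {"fields": {"name", "resume"}, "retention_days": 90},
}

def build_vendor_payload(vendor: str, candidate: dict, shared_on: date) -> dict:
    """Send a vendor only the fields its agreement allows, with a deletion deadline."""
    terms = VENDOR_TERMS[vendor]  # raises KeyError if no agreement is on file
    data = {k: v for k, v in candidate.items() if k in terms["fields"]}
    delete_by = shared_on + timedelta(days=terms["retention_days"])
    return {"data": data, "delete_by": delete_by.isoformat()}

candidate = {"name": "Ada", "email": "ada@example.com", "resume": "cv.pdf",
             "country": "UK", "interview_notes": "strong on system design"}
payload = build_vendor_payload("background_check", candidate, date(2026, 1, 1))
print(payload["delete_by"])  # 2026-01-31
```

Failing loudly for a vendor with no terms on file is deliberate: data should not flow to a third party before the agreement exists.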

Using Secure Platforms

Regional data residency matters too. GDPR restricts transfers of EU candidates' data outside the EU/EEA unless safeguards such as an adequacy decision or standard contractual clauses are in place, so check where each platform stores and processes data. Choose platforms that integrate with your existing encryption, identity standards, and access controls to reduce the risk of data breaches. Additionally, ensure these systems support your Record of Processing Activities (ROPA), which details who processes what data, where, and for what purpose. Secure platforms not only protect candidate information but also help you stay compliant with GDPR and CCPA.

daily.dev Recruiter's double opt-in model, in which developers explicitly approve before their profiles are shared with hiring teams, reduces unauthorized data sharing and keeps every interaction consent-based. The platform also provides tools to support candidate rights, so requests don't have to be handled through manual processes. When incorporating AI, ensure these systems operate within your secure infrastructure so they follow your established security protocols.

Why Privacy-First Recruitment Builds Trust with Developers

Developers often approach recruiters with a healthy dose of skepticism. The numbers back this up: 40% of developers dismiss messages as generic outreach, and 55% suspect AI-generated personalization. By prioritizing privacy and transparency, you can stand out and demonstrate genuine respect for their time and data.

Taking a privacy-first approach transforms the recruitment process. It’s no longer just about sourcing names - it’s about earning attention and trust. When developers see that you’re only collecting the data you truly need and respecting their consent, they’re much more likely to engage openly.

Better Candidate Experience

A recruitment process built on privacy feels respectful, not invasive. For instance, skipping unnecessary data requests - like social security numbers or references too early in the process - can ease candidate anxiety and reflect professionalism. Developers also value clear communication about what data you’re collecting and why.

The numbers tell a story: 67% of developers rate recruiter respect for their time at a low 0–2 out of 5. To improve this, share upfront details like the tech stack, salary range, and work model - what many call the "Big Three." This transparency lets candidates quickly decide if they’re a good fit, saving time for everyone involved. It’s worth noting that 19% of developers will ignore a message outright if it doesn’t include salary information, making clarity a non-negotiable for engagement.

A respectful and transparent process doesn’t just help with current candidates - it boosts your long-term reputation as a recruiter or employer.

Building a Reputation for Transparency

Privacy-first recruitment isn’t just about immediate results; it’s about building trust that lasts. Even candidates who don’t get the job can become advocates for your company if the process feels professional and transparent. In a sea of generic, unsolicited messages, prioritizing privacy becomes a key differentiator for your employer brand. This approach also fits naturally with the GDPR and CCPA practices discussed earlier.

Developers tend to trust personal referrals (63%) and developer communities (40%) far more than cold outreach. When your privacy practices align with these trust-based channels, you position your company as one that values developers as individuals, not just data points. This reinforces your image as a "culture-first" employer and opens the door to stronger, trust-based relationships.

Conclusion: Meeting Developer Privacy Expectations in 2026

To align with developers' privacy expectations, your recruitment process must prioritize transparency, consent, and data minimization. These aren't just buzzwords - they're the cornerstones for earning trust and attracting top talent. Developers today expect clear privacy notices, double opt-in systems, and strict data retention policies that treat them as individuals, not just entries in a database.

Staying compliant with regulations like GDPR, CCPA, and the California Privacy Rights Act (CPRA), which amended the CCPA, does more than protect you legally - it strengthens trust and fosters genuine connections. The numbers speak for themselves: when developers explicitly consent to being contacted, response rates rise from 5–20% to an impressive 85–90%.

Adopting privacy-first practices also reduces operational risks. By collecting only the necessary information at each stage, conducting Data Protection Impact Assessments (DPIAs) for AI tools, and using role-based access control (RBAC), you limit the chances of data breaches. With regulators keeping a closer eye on AI-driven recruiting tools in 2026, these measures are no longer optional - they're essential.
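Role-based access control can be as simple as a deny-by-default mapping from roles to permissions. The roles and permission strings below are hypothetical examples for a hiring team, not a standard; real deployments usually rely on the access controls built into the ATS or identity provider.

```python
from enum import Enum

class Role(Enum):
    RECRUITER = "recruiter"
    HIRING_MANAGER = "hiring_manager"
    INTERVIEWER = "interviewer"

# Hypothetical role -> permission mapping for candidate data.
PERMISSIONS = {
    Role.RECRUITER: {"read_contact", "read_resume", "read_feedback", "delete_candidate"},
    Role.HIRING_MANAGER: {"read_resume", "read_feedback"},
    Role.INTERVIEWER: {"read_resume", "write_feedback"},
}

def authorize(role: Role, permission: str) -> bool:
    """Deny by default: allow only permissions the role explicitly lists."""
    return permission in PERMISSIONS.get(role, set())

print(authorize(Role.INTERVIEWER, "read_contact"))  # False
```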

Start by taking actionable steps today: publish privacy notices in plain, accessible language, audit your vendors' data practices, and set clear data retention periods of 6–12 months. Even small gestures, like offering brief feedback after rejections, can help maintain positive relationships and protect your brand reputation.

FAQs

How can recruiters prove they didn’t scrape my data?

Recruiters can show they haven’t scraped your data by using consent-based platforms, keeping records of when and how you consented, being upfront about how they sourced your information, and following privacy laws like GDPR and CCPA. These laws emphasize transparency and clear consent, and both require data collection to be limited to what is necessary for its purpose.

What candidate data should be deleted, and when?

Delete candidate data when it is no longer needed for recruitment, when the candidate withdraws consent, or when the retention period you have set to comply with regulations like GDPR or CCPA ends. Make sure candidates are clearly informed about how their data is managed, and always follow your organization's privacy guidelines.
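The three deletion triggers in this answer can be combined into a single check. This is a minimal sketch: `CandidateRecord` and its fields are hypothetical, it assumes consent is the basis for keeping the record, and the retention period is whatever your organization has documented (the 6–12 months suggested above is one option).

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class CandidateRecord:
    collected_on: date
    retention_days: int              # your documented retention period
    needed_for_recruitment: bool     # False once no active process or consented pool uses it
    consent_withdrawn: bool = False  # assumes consent is the basis for keeping the record

def should_delete(rec: CandidateRecord, today: date) -> bool:
    """Delete when consent is withdrawn, the data is no longer needed, or retention has expired."""
    expired = today >= rec.collected_on + timedelta(days=rec.retention_days)
    return rec.consent_withdrawn or not rec.needed_for_recruitment or expired

rec = CandidateRecord(date(2026, 1, 1), retention_days=180, needed_for_recruitment=True)
print(should_delete(rec, date(2026, 3, 1)))  # False
print(should_delete(rec, date(2026, 7, 1)))  # True: the 180 days ended on 2026-06-30
```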

How do I withdraw consent once I’ve applied?

To withdraw your consent after applying, start by checking the platform's communication or privacy policy for an opt-out option. Thanks to GDPR, candidates have the right to revoke consent at any time, often through a link provided in emails or on the platform. If you can't find a clear option, reach out directly to the recruiter or the data controller to make your request. It's always a good idea to review the privacy policy for detailed instructions on managing your preferences.
