Hiring data engineers in 2026 is challenging but essential, with 2.9 million open data-related roles globally and demand growing 23% year-over-year. These professionals build the infrastructure that powers AI, analytics, and business insights. Without them, projects stall, and teams remain underutilized. To succeed in hiring, you must:

- Define the role clearly: Differentiate between data engineers, analytics engineers, and data scientists to target the right candidates.

- Focus on key skills: SQL, Python, Spark, Airflow, dbt, and cloud platforms are non-negotiable. Communication and teamwork are equally important.

- Source strategically: Engage with candidates in specialized communities (e.g., dbt Community, GitHub) and attend industry events.

- Streamline hiring: Complete the process in under three weeks to avoid losing top candidates to competitors.

- Offer competitive compensation: Salaries range from $70,000 to $320,000+, depending on experience and location.

Speed, clarity, and precision in your hiring process are critical to securing the best talent in this competitive market.

Data Engineering Market and Role Definitions

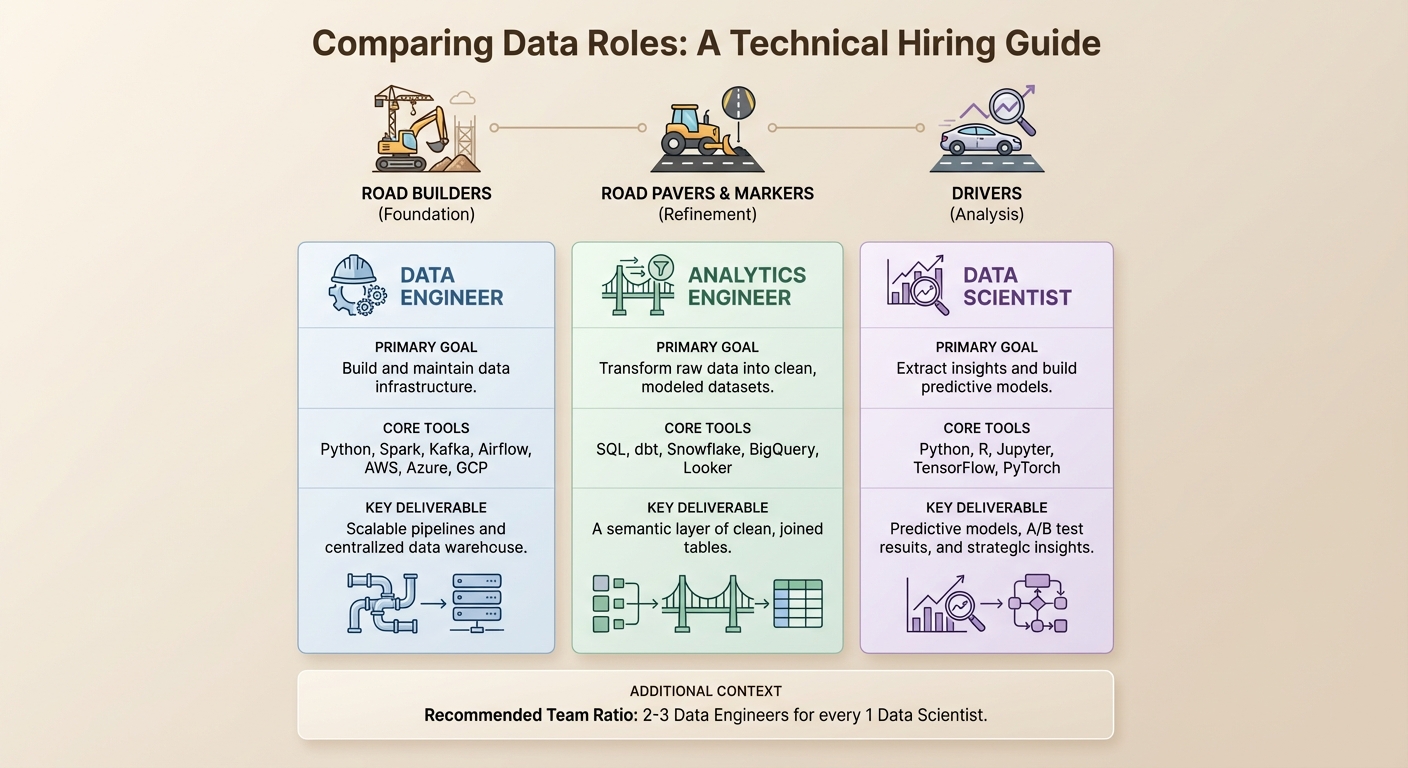

::: @figure  {Data Engineer vs Analytics Engineer vs Data Scientist: Roles, Tools & Deliverables Comparison}

{Data Engineer vs Analytics Engineer vs Data Scientist: Roles, Tools & Deliverables Comparison}

Data Engineer vs. Data Scientist vs. Analytics Engineer

Understanding the differences between these roles is essential for recruiters, as each position requires distinct skills and delivers unique outcomes. Misidentifying them can lead to missed opportunities, with outreach messages often going unanswered.

Data engineers are the backbone of the data ecosystem. They design and maintain the infrastructure - pipelines, warehouses, and data lakes - that make data accessible and usable . Think of them as the road builders of the data world. Their work involves tools like Python, Spark, Kafka, Airflow, and cloud platforms such as AWS, Azure, and GCP. Their primary goal? To create a scalable, production-ready infrastructure that other teams can depend on.

Analytics engineers bridge the gap between data engineering and data analysis. They use tools like dbt to turn raw data into clean, well-documented datasets, often referred to as the "semantic layer" . Essentially, they pave and mark the roads built by data engineers. Their expertise lies in SQL, dbt, Snowflake, BigQuery, and visualization tools like Looker and Tableau.

Data scientists are the drivers on these roads . They use the infrastructure crafted by data engineers to perform advanced statistical analysis, develop predictive models, and generate strategic insights . This distinction highlights why hiring a senior data engineer before bringing on data scientists is so important. Without solid infrastructure, data scientists often spend their time on manual data cleaning . A balanced team typically includes two to three data engineers for every data scientist .

| Role | Primary Goal | Core Tools | Key Deliverable |

|---|---|---|---|

| Data Engineer | Build and maintain data infrastructure | Python, Spark, Kafka, Airflow, AWS, Azure, GCP | Scalable pipelines and centralized data warehouse |

| Analytics Engineer | Transform raw data into clean, modeled datasets | SQL, dbt, Snowflake, BigQuery, Looker | A semantic layer of clean, joined tables |

| Data Scientist | Extract insights and build predictive models | Python, R, Jupyter, TensorFlow, PyTorch | Predictive models, A/B test results, and strategic insights |

Grasping these distinctions helps illuminate why the demand for data engineers is growing, especially as we approach 2026.

Market Trends Driving Data Engineer Demand in 2026

The rising demand for data engineers is closely tied to challenges in operationalizing AI. As Tom Kenaley, Senior Partner and President at KORE1, aptly puts it:

The bottleneck was never the models. It was the plumbing. That's what data engineers do. Build the plumbing. And suddenly everybody needs a plumber .

Despite heavy investments in AI, projects often stall without strong data infrastructure. This need has driven data engineering roles to grow by 23% annually, with hiring growth rates reaching 35% according to industry reports .

Several key trends are fueling this demand:

- Cloud Migration and Evolving Architectures: By 2026, over 94% of enterprises have adopted some form of cloud infrastructure . Companies are now moving beyond basic warehousing to embrace hybrid cloud lakehouses and data mesh architectures . These modern systems require advanced architectural expertise. Today’s cloud warehouses allow data to be stored in its raw form and transformed later using the warehouse’s compute power . As a result, data engineers now manage intricate, high-performance cloud setups rather than isolated batch pipelines .

- Real-Time Streaming: Tools like Apache Kafka and Spark Streaming have become indispensable for achieving sub-minute data updates .

- AI-Ready Infrastructure: Data engineers are increasingly tasked with building pipelines for model training, managing feature stores, and supporting real-time inference pipelines . While off-the-shelf tools can handle 75–90% of data sources, specialized SaaS vendors often require custom-built, mission-critical pipelines .

Competition for mid-level talent, particularly those with 4–6 years of experience, is intense. This is the segment where salary bidding wars are most common . In enterprise environments, the average time to fill a data engineering role ranges from 60 to 90 days . If your hiring process takes longer than three weeks, you risk losing candidates to faster-moving competitors .

Hiring engineers?

Connect with developers where they actually hang out. No cold outreach, just real conversations.

Skills and Competencies to Assess

Technical Skills: SQL, Python, Spark, Airflow, dbt, and Cloud Platforms

When it comes to data engineering, SQL and Python are the backbone. Candidates should demonstrate advanced capabilities in SQL, such as handling window functions, query optimization, and creating complex joins. For Python, look for expertise with tools like PySpark and Pandas, as well as experience in building and maintaining APIs.

Proficiency in data transformation and orchestration tools is just as critical. Candidates should have hands-on experience with dbt for SQL-based transformations and orchestration tools like Airflow, Prefect, or Dagster for managing pipelines. Cloud expertise is now non-negotiable - whether it's AWS, Azure, or GCP/BigQuery - and familiarity with modern architectures like Snowflake or Databricks is a major plus. This makes sense, given that over 94% of enterprises have adopted some form of cloud technology .

Modern roles also demand DataOps skills, such as CI/CD for pipelines, Infrastructure-as-Code, and containerization. Strong candidates should showcase system design thinking, with an understanding of distributed systems, failure points, and cost-performance tradeoffs. As cloud expenses rise, the ability to optimize table schemas through partitioning and compression is becoming increasingly valuable.

Another growing area is AI fluency. Data engineers are increasingly tasked with creating pipelines for vector databases and supporting machine learning models. Skills in real-time streaming using tools like Apache Kafka and Spark Streaming are also becoming standard for event-driven systems.

Finally, while technical expertise is critical, it must be paired with strong communication skills to translate technical work into business value.

Communication and Teamwork Skills

Being technically skilled is only half the battle; a great data engineer must also excel at communication. They need to explain complex technical ideas in ways that non-technical stakeholders can understand. This includes translating business requirements into actionable technical strategies. Michael Kaminsky from dbt Labs puts it bluntly:

If you hire a data engineer who is solely focused on backend tasks and hates working with less technical folks, you're going to have a bad time.

The best engineers are team players who amplify their team’s effectiveness. They bridge the gap between technical execution and business goals, ensuring their work drives measurable results. During interviews, ask candidates to explain a complex process in simple terms or describe a past project by focusing on the business problem it solved rather than just listing the tools they used. Look for individuals who take initiative - whether it's cleaning up inefficiencies in code or improving documentation - even when it’s outside their formal responsibilities.

Where to Source Data Engineering Candidates

If you're looking to hire data engineers, it’s crucial to focus on the platforms and communities where they are most active and engaged.

Data Communities and Platforms: dbt Community and Developer Networks

Top-tier data engineers often spend their time in specialized communities rather than on generic job boards. For instance, the dbt Community is a major hub for analytics and data engineers who work with modern transformation tools. With over 100,000 active members exchanging more than 5,000 Slack messages daily, it’s a vibrant space for addressing pipeline challenges and sharing solutions .

Developer networks like GitHub and GitLab also offer a unique advantage. These platforms allow you to evaluate candidates based on their actual work. By exploring public repositories, you can assess their pipeline code, Docker configurations, CI/CD integrations, coding standards, documentation practices, and problem-solving skills .

Industry events are another excellent way to connect with data engineers. For example, the dbt Summit 2026 (September 15–18 in Las Vegas) will feature over 100 sessions , while the Databricks Data + AI Summit (June 15–18, 2026 in San Francisco) will host more than 800 sessions covering Spark, Delta Lake, and cloud platforms . Attending these events not only helps you network but also strengthens your employer brand among engineers who value cutting-edge technologies.

If speed is a priority, staff augmentation firms like KORE1 and Boundev can provide access to pre-vetted candidates who may not be actively job hunting. According to Tom Kenaley of KORE1, internal hiring typically takes 45 to 90 days, whereas specialized firms can reduce that timeline to just 3–6 weeks . Additionally, India has become a strong source of highly skilled data engineers, with senior professionals available through staff augmentation at annual rates ranging from $33,000 to $69,000 .

These insights can help you refine your approach to finding and attracting top talent.

How to Write Outreach Messages That Get Responses

Generic recruiting messages won’t cut it with data engineers. To stand out, your outreach needs to show a clear understanding of their role and the technical challenges they face.

Start with a specific hook that references their work, such as: "I saw your post about migrating to Spark 3.5." This demonstrates genuine interest and familiarity with their expertise .

Next, highlight your tech stack right away. Engineers are drawn to modern tools, so mention technologies like Snowflake, Databricks, dbt, or Airflow early in your message . If your company uses older systems, be transparent about your plans for modernization and emphasize how the engineer will play a key role in that transformation. Avoid blending data scientist and data engineer responsibilities in your job descriptions - be clear about the focus on building reliable data pipelines.

Keep your message short and to the point. LinkedIn InMails under 400 characters have a 22% higher response rate . End with a simple, low-pressure call to action, such as: "Are you open to a quick chat?" instead of immediately asking for a resume. Also, time your messages to align with the candidate’s local business hours (early morning or around lunchtime) for better visibility. If you don’t get a response, follow up once after 5–7 days. Sending more than two messages can risk being seen as spam .

Technical Assessment Framework for Data Engineers

Traditional algorithm-focused tests, like those on LeetCode, often miss the mark when it comes to evaluating the practical skills data engineers rely on every day. Research shows a strong connection (0.72 correlation) between success in SQL challenges and on-the-job performance, while abstract algorithm puzzles barely register with a 0.15 correlation. To truly assess candidates, focus on tasks they encounter regularly: writing SQL queries, designing pipelines, and debugging production issues.

"The data engineering interview process remains broken - testing algorithms that engineers never use while ignoring skills they need daily." – Reliable Data Engineering

Practical Technical Assessments and Coding Challenges

Start with a structured SQL challenge that takes about an hour. Begin with simple tasks like joins and aggregations, progress to more advanced concepts like window functions (e.g., RANK(), LEAD/LAG), and wrap up with optimizing a slow query using tools like EXPLAIN ANALYZE. Using real or anonymized production data helps mirror real-world complexity.

For pipeline design exercises, present candidates with a business problem requiring an end-to-end solution. This should evaluate their ability to choose the right tools, handle potential failures (like API downtime), and address scalability issues. For senior roles, include troubleshooting scenarios where candidates debug a broken pipeline using provided logs and metrics.

Take-home projects are a good fit for senior candidates or those in different time zones. These assignments, capped at 3–4 hours, should simulate real tasks - like building a pipeline with data quality checks. After submission, conduct a live review to discuss their design decisions. Disqualify candidates who struggle with basic SQL, fail to design systems without clarifying requirements, or overlook operational concerns like monitoring and data quality.

Adjust the depth of these assessments based on the candidate's experience level.

Adjusting Assessments by Seniority Level

Tailor the evaluation process to match the candidate's seniority, ensuring the challenges are both fair and relevant.

For junior and mid-level candidates (1–6 years of experience), a streamlined approach works best. After a practical SQL challenge, follow up with a 45-minute discussion on simplified pipeline scenarios and behavioral fit. Focus on foundational skills like joins, window functions, basic Python, and a solid grasp of ETL versus ELT concepts.

Senior and staff-level candidates (7+ years of experience) require a more in-depth process. Dedicate 90 minutes to advanced SQL and code reviews, another 90 minutes to system design deep dives, 45 minutes to troubleshooting simulations, and an hour to discuss leadership and strategy. These sessions should evaluate their ability to design data models (e.g., Star Schema), handle Slowly Changing Dimensions (SCD Type 2), optimize Spark jobs to address data skew, and architect scalable, efficient solutions.

"Speed wins. That is the single most important piece of advice I can give you about hiring data engineers in 2026." – Tom Kenaley, President, KORE1

To secure top talent, aim to complete the hiring process within three weeks.

Compensation and Offer Strategies

Crafting effective compensation and offer strategies is crucial to complement your technical assessments and sourcing efforts in attracting top-tier data engineering talent.

Salary Benchmarks by Seniority and Location

The demand for data engineers has driven salaries upward, especially in 2026, as companies compete for limited talent. In the U.S., the average total compensation for data engineers sits at approximately $150,234, but this varies based on experience, location, and specialization.

For instance, senior data engineers in San Francisco working at leading AI companies can earn base salaries as high as $300,000. Interestingly, data engineers now boast a higher median salary ($171,000) compared to data scientists ($148,000), reflecting the growing emphasis on infrastructure roles. Seattle offers a unique advantage: senior engineers there can take home roughly $15,000 more than their counterparts in San Francisco or New York, thanks to Washington's lack of state income tax.

Mid-level engineers, with 4–6 years of experience, are in especially high demand, with salaries typically ranging from $119,000 to $150,000. The rise of remote work has narrowed the salary gap between traditional tech hubs and smaller markets, with base salaries spanning from $64,000 to $275,000. Specialization plays a significant role in earnings: expertise in real-time streaming tools like Kafka and Flink can lead to a 20–30% salary boost over standard batch processing roles. Meanwhile, AI/ML Python engineers have seen the fastest growth in rates, with an 18% year-over-year increase.

| Seniority Level | Experience | Base Salary Range (National) | Key Skills Required |

|---|---|---|---|

| Junior / Entry | 0–2 Years | $70,000 – $110,000 | SQL, Python, basic cloud exposure |

| Mid-Level | 4–6 Years | $119,000 – $150,000 | Pipeline building, ETL, Spark, Airflow |

| Senior | 5–8 Years | $147,000 – $230,000 | Architecture, mentoring, dbt, Snowflake |

| Staff / Principal | 8+ Years | $200,000 – $320,000+ | Company-wide data platforms, real-time systems |

On top of base salaries, total compensation often includes an additional $24,251 in cash benefits on average. It’s worth noting that a U.S. employee's base salary typically accounts for only 60–70% of the total cost to the company once benefits, payroll taxes, and other overhead costs are included.

Understanding these salary trends is just the first step - closing candidates effectively and managing counteroffers is equally critical.

Closing Candidates and Managing Counteroffers

To secure top-tier talent, aligning competitive salary benchmarks with strategic offer approaches is essential.

Speed matters. Companies that complete the hiring process - from initial screen to offer - within three weeks are more likely to land their preferred candidates. When presenting offers, highlight the cutting-edge tools candidates will work with, such as Snowflake, dbt, Airflow, and Databricks. For roles involving legacy systems, emphasize your plans for modernization to attract engineers who thrive on solving complex challenges. Position the job as a "multiplier" role, showcasing how their contributions will enhance the productivity of data scientists and analysts.

Address salary expectations and potential counteroffers early - ideally during the recruiter’s first conversation with the candidate. Providing a 30/60/90-day ramp-up plan during the offer stage can ease concerns and demonstrate that your company has a clear vision for their success. Michael Kaminsky from dbt Labs offers a cautionary note:

If you hire a data engineer who just wants to muck around in the backend and hates working with less technical folks, you're going to have a bad time.

Make it clear that the role is more than just technical execution - it’s about being a strategic partner in the organization.

Another way to stand out? Compensate candidates $200–$500 for take-home assignments. This small gesture respects their time and differentiates your hiring process from others. With global data-related job openings projected at 2.9 million and an annual growth rate of around 23%, top candidates have plenty of options. Your ability to move quickly, offer transparency, and demonstrate genuine value could make all the difference in securing the right hire.

Conclusion

Hiring data engineers in 2026 requires a thoughtful and precise approach. With 2.9 million global data-related job vacancies and a 23% year-over-year growth rate, competition for talent is steep . To succeed, you need to clearly define the role, connect with niche communities, and evaluate candidates through practical challenges that test both technical skills and team compatibility.

Start by defining the role clearly. Data engineers focus on building the infrastructure that powers your data operations. Mixing up their responsibilities with those of data scientists or analytics engineers in your job description can attract the wrong candidates - or worse, signal to qualified engineers that your team lacks clarity about its needs . If you're starting from scratch, hiring a senior data engineer first is key. They’ll lay the groundwork for your data systems, enabling data scientists to work effectively with clean, organized data .

Once the role is well-defined, shift your attention to strategic sourcing. Find data engineers where they naturally gather - specialized communities like the dbt Community, developer forums, and platforms focused on cutting-edge tools. When you reach out, highlight your tech stack and demonstrate familiarity with the field. Data engineers are often wary of recruiters who conflate their role with data science, so showing you understand their work can make a big difference. During the assessment phase, prioritize "data thinking" over tool-specific expertise. Use case studies or live working sessions to see how candidates handle architectural challenges and ensure data quality.

Speed matters in this competitive hiring landscape. Companies that move quickly are the ones securing top talent. Aim to complete your hiring process in under three weeks, from initial screening to final offer. Offering $200–$500 for take-home assignments shows respect for candidates' time, while competitive pay and the chance to work with modern infrastructure can help seal the deal . As Tom Kenaley from KORE1 puts it:

The companies that move quickly get the best candidates. The rest get whoever is left.

In a market where candidates often juggle multiple offers within days, your ability to act decisively and demonstrate a deep understanding of the role will set you apart. Moving with precision and purpose could mean the difference between hiring top-tier talent and settling for less.

FAQs

What should my first data engineer hire look like?

The first data engineer you hire should prioritize creating a reliable and scalable data infrastructure. This infrastructure serves as the backbone for your entire data team, enabling smooth operations and accurate insights.

Key Skills to Look For

Your ideal candidate should have strong proficiency in:

- SQL: Essential for querying and managing data.

- Python: A versatile tool for scripting and data manipulation.

- Spark: Useful for handling large-scale data processing.

- Airflow: Critical for orchestrating workflows and automating tasks.

- Cloud Platforms: Familiarity with tools like Snowflake or BigQuery ensures seamless data storage and accessibility.

Core Responsibilities

Their primary tasks will include:

- Managing Data Pipelines: Ensuring data flows efficiently from its source to where it's needed.

- Maintaining Data Quality: Establishing processes to guarantee clean, accurate, and reliable data.

- Creating Scalable Workflows: Building systems that can grow with your business needs.

By focusing on these areas, your data engineer will help analysts and data scientists access well-organized, trustworthy data, enabling them to deliver actionable insights effectively.

How can I assess real data engineering skills fast?

To effectively evaluate data engineering skills, consider using a structured technical assessment that emphasizes practical, hands-on abilities. Prioritize tasks such as creating data pipelines, managing cloud platforms, and improving workflows with tools like SQL, Python, and Spark. Additionally, incorporate assessments of data validation and observability practices to gain a well-rounded understanding of a candidate’s ability to design and maintain production-level data systems.

What tech stack details should I include in outreach?

When reaching out to data engineers, it's important to focus on the technologies they work with daily. Core skills include SQL, Python, Spark, Airflow, and dbt - tools that are essential for building and managing data pipelines.

Additionally, familiarity with cloud-based platforms is crucial. Mentioning Snowflake, BigQuery, and Databricks demonstrates an understanding of the environments where modern data engineering happens. Highlighting these technologies ensures your message resonates with their expertise and day-to-day priorities.

.png)