Hiring compliance for technical recruiters is more complex than ever. By 2026, regulations like GDPR, NYC Local Law 144, and the EU AI Act are reshaping how organizations hire, especially when using AI-driven tools. Violations can lead to steep fines - up to €35 million under the EU AI Act or $1,500 per day for ongoing breaches of NYC Local Law 144.

Key challenges include:

- Automated Tools: Many AI hiring tools amplify bias, requiring human oversight and bias audits.

- Data Privacy: GDPR and CCPA demand transparent handling of candidate data, including rights to review, deletion, and consent.

- Bias Mitigation: Structured interviews, blind resume reviews, and standardized scoring are essential to avoid discrimination.

- Worker Classification: Misclassifying employees as independent contractors can result in heavy penalties.

What to do now?

- Audit all AI tools for compliance and bias.

- Ensure human review of AI decisions.

- Update candidate notices to meet GDPR, CCPA, and local laws.

- Conduct annual bias audits and keep detailed records.

Staying compliant isn't optional - it protects your organization from legal risks while improving hiring practices.

The Compliance Landscape for Tech Recruiting

Technical recruiters work within a complex web of regulations, balancing federal standards with stricter state and local laws. While traditional HR compliance emphasizes general anti-discrimination practices, tech recruiting faces additional challenges like ensuring transparency in algorithms, secure handling of candidate data, and oversight of automated tools. The real difficulty lies in navigating overlapping rules, where even a minor mistake can lead to serious consequences.

GDPR and CCPA: What Recruiters Need to Know

GDPR Article 22 limits automated decision-making that has legal or similarly significant effects, including hiring decisions. This means recruitment tools that automatically reject candidates without human oversight may violate the regulation. To address this, recruiters must ensure meaningful human involvement - a trained individual who can review and override AI decisions - and allow candidates to challenge these decisions.

In the U.S., the CCPA grants California residents rights over their personal data, including the ability to know what data is collected, request its deletion, and opt out of data sales. For recruiters, this means updating privacy notices to disclose when AI tools analyze candidate information (like GitHub profiles) and creating processes to handle deletion requests.

But data privacy laws are only part of the equation. Federal and state hiring regulations add another layer of complexity.

EEOC, OFCCP, and State-Level Hiring Laws

Federal laws such as Title VII, ADA, and ADEA prohibit discrimination but don’t specify how AI tools should be managed. The EEOC uses the "four-fifths rule" to identify potential bias in technical hiring: if the selection rate for a protected group is less than 80% of that for the group with the highest rate, it could trigger an investigation. Importantly, employers are still responsible for discriminatory outcomes, even when using third-party AI tools.
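The four-fifths check described above reduces to a small calculation. The following is a minimal Python sketch, not an official EEOC method: the function names, group labels, and applicant counts are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Tuple


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Divide each group's selection rate by the highest group's rate.

    counts maps a group label to (selected, applicants).
    Groups with zero applicants are skipped.
    """
    rates = {
        group: selected / applicants
        for group, (selected, applicants) in counts.items()
        if applicants > 0
    }
    if not rates or max(rates.values()) == 0:
        return {}  # no applicants or no selections: the ratio is undefined
    top = max(rates.values())
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(counts: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """Return the groups whose impact ratio falls below the threshold."""
    return sorted(g for g, r in impact_ratios(counts).items() if r < threshold)


# Hypothetical numbers for illustration only.
example = {"group_a": (48, 120), "group_b": (27, 100)}
print(impact_ratios(example))
print(flagged_groups(example))
```

With these sample counts, group_a is selected at 40% and group_b at 27%, so group_b's ratio is 0.675 and it is flagged. A ratio below 0.8 is a signal to investigate further, not a legal finding on its own.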

For federal contractors, OFCCP regulations demand proactive recruitment of veterans and disabled individuals, alongside rigorous recordkeeping to maintain contracts. Meanwhile, state and local laws introduce additional hurdles. For example:

- NYC Local Law 144 requires annual independent bias audits for automated tools and mandates 10 business days’ notice before their use.

- Illinois mandates consent for AI video analysis and requires data deletion within 30 days if requested.

- California FEHA amendments (effective October 1, 2025) now consider anti-bias testing as key evidence in discrimination investigations.

Each of these laws imposes unique procedural requirements, creating a patchwork of rules that exceed federal guidelines.

| Regulation | Key Requirement | Geographic Scope |
|---|---|---|
| GDPR Article 22 | Right to human review of automated decisions | EU / EU Residents |
| NYC Local Law 144 | Annual independent bias audit & 10-business-day notice | New York City |
| Illinois AIVIA | Consent for AI video analysis & 30-day deletion | Illinois |
| EU AI Act | High-risk classification; strict data governance | European Union |
| CA FEHA | ADS anti-bias testing used as legal evidence | California |

These regulations highlight the unique challenges technical recruiters face when managing developer data and deploying AI-driven hiring tools.

Compliance Challenges Specific to Technical Recruiters

Technical recruiters often navigate compliance issues that general HR teams might not encounter. Tasks like sourcing developers from GitHub, evaluating portfolios, and classifying tech contractors come with legal risks that require a deeper understanding of specific regulations.

Managing Developer Data: GitHub, Portfolios, and Open Source

With over 190 million repositories and 65 million users, GitHub is a treasure trove for finding developer talent. However, sourcing from public profiles on platforms like GitHub brings unique compliance challenges, especially under GDPR and CCPA.

For GDPR, passive sourcing often relies on "Legitimate Interests" (Article 6) rather than consent, as getting permission during initial discovery isn't practical. Even so, recruiters need to document a balancing test showing that a candidate's privacy rights and interests don't override the recruiter's legitimate hiring interest. Additionally, Article 14 requires recruiters to inform candidates about data collection within a reasonable period (no later than one month after obtaining the data), and at the latest at the first point of contact when the data is used to communicate with the candidate.

"GDPR compliance for AI sourcing tools means collecting and processing candidate data lawfully (Article 6), transparently informing candidates (Articles 13/14), minimizing data and protecting it, enabling data-subject rights, governing vendors and transfers, and avoiding solely automated decisions (Article 22)." - Ameya Deshmukh, Director of Recruiting

Web scraping profiles isn't automatically illegal, but it must respect robots.txt directives and the platform's terms of service. Recruiters should also steer clear of gathering or inferring sensitive data - like race or health status - that may inadvertently appear in profile photos or open-source contributions. If AI tools are used to rank GitHub profiles based on metrics like commit history or repository stars, these tools might fall under Automated Employment Decision Tools (AEDTs) as defined by NYC Local Law 144. This law mandates annual independent bias audits, though compliance rates for such audits remain low.

To stay compliant, include a direct link to your privacy notice in your first outreach message to passive candidates. Be upfront about where you found their data and ensure you have clear procedures for handling deletion requests.

While managing developer data is complex, ensuring proper classification of workers is another critical area for compliance.

Contractor vs. Employee Classification Risks

Misclassifying a developer as a contractor when they should be classified as an employee can lead to serious legal consequences. Technical recruiters must navigate two federal frameworks to avoid this: the IRS "Common Law Rules" (focusing on control over behavior, finances, and the relationship) and the Department of Labor's "Economic Reality Test" (centered on control and worker independence).

By February 2026, the Department of Labor plans to return to a framework similar to 2021 standards, emphasizing two core factors: the degree of control and the worker's ability to profit or lose independently. However, state laws can complicate matters further. States like California, Illinois, Massachusetts, and New Jersey use the stricter "ABC Test," which assumes workers are employees unless proven otherwise.

"The department's proposed rule seeks to protect these workers' entrepreneurial spirit and simplify compliance for American job creators navigating a modern workplace, all while maintaining robust protections for employees under the Fair Labor Standards Act." - Lori Chavez-DeRemer, Secretary of Labor

The penalties for misclassification can be steep. The IRS may impose penalties up to 100% of unpaid tax liability plus interest. In New Jersey, employers could face fines of up to 5% of the worker's gross earnings from the previous 12 months, along with additional penalties of $250 per misclassified worker for first offenses and $1,000 for repeat violations.

To minimize risks, conduct annual audits of developer roles as they evolve. A contractor's role might gradually shift into what legally qualifies as an employee relationship. Keep detailed records showing that contractors set their own schedules, use their own tools, and determine their own work methods. Use specific Statements of Work (SOW) to outline deliverables and timelines instead of relying on open-ended agreements. Additionally, confirm that contractors are engaged in independent trade by verifying they have multiple clients and cover their own business expenses.

Avoiding Bias in Technical Screening

In the increasingly complex world of hiring compliance, ensuring unbiased technical screening is not just a best practice - it’s a necessity. Whether you're using manual methods or AI tools, any hint of bias in your evaluations, including bias introduced while trying to reduce time to hire for technical roles, can open the door to EEOC lawsuits, GDPR penalties, or state-level enforcement. The key is to treat every interview and assessment as a structured, documented process rather than an informal back-and-forth.

Structured Interviews and Standardized Scoring

Every interview question and evaluation criterion should be directly tied to a documented job analysis outlining the specific skills and competencies required for the role. Without this documentation, defending your process against EEOC challenges becomes much harder. For seasoned candidates, behavioral interview questions based on the STAR method (Situation, Task, Action, Result) are effective in drawing out detailed responses. For entry-level roles, situational or hypothetical questions can better gauge problem-solving abilities.

"Organizations that treat the interview as an informal conversation rather than a structured assessment instrument expose themselves to adverse impact liability."

- Talent Acquisition Authority

To make evaluations consistent, use Behaviorally Anchored Rating Scales (BARS). These scales offer clear benchmarks for scoring, which helps reduce rater drift and biases like the halo effect, where one strong impression skews the entire evaluation. Research shows that structured interviews have a validity coefficient of 0.51, compared to just 0.38 for unstructured interviews. Another way to minimize bias is to have interviewers score candidates independently before group discussions, which prevents anchoring bias.

When using AI in your hiring process, ensure that a qualified human reviews all decisions, as required by GDPR Article 22. This review should include clear reason codes for every decision - such as "Missing required Python certification" - to maintain transparency for both candidates and auditors.

"Meaningful human involvement means a trained person with authority reviews inputs and reasoning, questions the system, considers new information, and can change the decision."

- Ameya Deshmukh

For employers with 15 or more employees, EEOC regulations (29 CFR § 1602.14) require retaining interview documentation for at least one year. For high-volume technical roles, monthly comparisons of selection rates using the four-fifths rule can help identify potential adverse impact. If the selection rate for any demographic group is less than 80% of the rate for the group with the highest selection rate, this could signal a problem. Anonymizing resume data is another effective step to ensure unbiased evaluations.

Blind Resume Reviews

Blind resume reviews eliminate protected attributes like race, gender, age, and disability from the initial screening process. They also remove proxies such as university names or ZIP codes, which can unintentionally reflect those attributes. This approach shifts the focus to skills, certifications, and work samples rather than superficial credentials. By configuring your ATS or AI tools to redact sensitive data - like names, graduation years, and club memberships - you can reduce bias while keeping a separate, secure dataset for fairness testing.

This method is particularly effective in addressing algorithmic redlining, where AI systems unintentionally favor certain groups. For instance, Amazon’s 2018 recruiting algorithm was found to downgrade resumes that included terms like "women's," as in "women's chess club."

| Feature to Blind | Reason for Redaction | Impact on Technical Hiring |
|---|---|---|
| Name / Gender | Reduces implicit bias related to gender or ethnicity | Keeps the focus on technical skills |
| Graduation Year | Avoids age-related bias | Prioritizes current skills over perceived seniority |
| University Name | Prevents "prestige bias" | Broadens opportunities for self-taught or bootcamp-trained developers |
| ZIP Code | Avoids socio-economic and racial bias | Reduces the risk of "algorithmic redlining" |
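The redaction step described above can be sketched as a simple split of each resume record into a reviewer-facing view and a held-back set kept for fairness testing. This is an illustrative Python sketch only: the field names and the record layout are hypothetical, and a real ATS export will use its own schema.

```python
from typing import Any, Dict, Tuple

# Hypothetical field names covering the attributes and proxies named above.
REDACTED_FIELDS = {
    "name",
    "gender",
    "graduation_year",
    "university",
    "zip_code",
    "clubs",
}


def split_for_blind_review(
    resume: Dict[str, Any],
) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Split a resume record into (reviewer_view, held_back).

    reviewer_view is what screeners see; held_back should be stored
    separately with restricted access and used only for fairness testing.
    """
    reviewer_view = {k: v for k, v in resume.items() if k not in REDACTED_FIELDS}
    held_back = {k: v for k, v in resume.items() if k in REDACTED_FIELDS}
    return reviewer_view, held_back


# Made-up record for illustration.
record = {
    "candidate_id": "c-001",
    "name": "Example Person",
    "university": "Example University",
    "zip_code": "00000",
    "skills": ["python", "aws"],
    "certifications": ["AWS Solutions Architect"],
}
reviewer_view, held_back = split_for_blind_review(record)
print(reviewer_view)
```

Free-text fields such as cover letters can still leak these attributes, so a field-level filter like this is only a first pass.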

Before opening a role, clearly define what qualifies as "acceptable evidence" for the position - specific certifications, years of experience with relevant tools, or portfolio examples. Require reason codes for every screening decision to ensure evaluations are skills-based, not identity-based. To further safeguard fairness, implement a "human-in-the-loop" process where recruiters review a sample of anonymized rejections to catch any unintended filtering of qualified, diverse candidates.

"High overall accuracy in an AI hiring model is meaningless if error rates and selection rates differ sharply across demographic groups. Fairness requires disaggregated analysis, not just a single performance metric."

- Pertama Partners

How to Handle Candidate Data Correctly

Handling candidate data properly is a cornerstone of compliance in tech hiring. Collect only the information absolutely necessary for the hiring process. For example, avoid requesting details like full addresses, graduation years, or club memberships unless they are essential. Keeping data collection minimal reduces compliance risks and aligns with both automated and manual hiring processes.

Consent and Data Minimization

When it comes to GDPR, while "legitimate interest" might justify initial candidate sourcing, you’ll need explicit consent to retain their data for future opportunities. For candidates in California, under CCPA/CPRA, ensure opt-in boxes are left unchecked by default. If you’re reaching out to passive candidates on platforms like LinkedIn or GitHub, include your privacy notice to explain what data you collect and why.

Your applicant tracking system (ATS) should enforce retention policies, such as deleting or anonymizing non-hired candidates' data after 6–12 months. Keep detailed logs of when and how consent was obtained to stay prepared for audits. For AI-driven video interviews, Illinois law requires explicit consent before recording and mandates deletion of recordings within 30 days of a candidate’s request.
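A retention rule like the one above can be expressed as a small check that an ATS job runs on a schedule. The sketch below is a hedged illustration: the 365-day window is an assumption (the upper end of the 6–12 month range), and the function name and the legal-hold flag are hypothetical.

```python
from datetime import date, timedelta

# Assumption: purge non-hired candidates after 12 months of inactivity.
RETENTION_DAYS = 365


def is_due_for_purge(
    last_activity: date,
    hired: bool,
    today: date,
    retention_days: int = RETENTION_DAYS,
    legal_hold: bool = False,
) -> bool:
    """Return True when a candidate record has outlived the retention window.

    Hired candidates and records under a legal hold are never purged here.
    """
    if hired or legal_hold:
        return False
    return today - last_activity > timedelta(days=retention_days)


# Illustrative dates only.
print(is_due_for_purge(date(2025, 1, 1), hired=False, today=date(2026, 1, 2)))
```

Records flagged this way could be deleted or anonymized, depending on which option your retention policy specifies.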

Beyond limiting data collection, it’s equally important to handle candidate requests and potential data breaches with care.

Right to Deletion and Breach Notification

When a candidate requests data deletion, verify their identity and check for any legal holds that might require retaining the data. Under CCPA/CPRA, you must respond to these requests within 45 days.
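The triage order described above (verify identity, check legal holds, then respond within 45 days) can be sketched as follows. This is an assumption-laden illustration: the status strings and function names are hypothetical, and the sketch counts 45 calendar days from the date the request was received.

```python
from datetime import date, timedelta

CCPA_RESPONSE_DAYS = 45  # response window stated above


def deletion_due_date(received: date) -> date:
    """Latest response date, counting calendar days from receipt."""
    return received + timedelta(days=CCPA_RESPONSE_DAYS)


def triage_deletion_request(
    received: date,
    identity_verified: bool,
    legal_hold: bool,
    today: date,
) -> str:
    """Return a hypothetical status label for a deletion request."""
    if not identity_verified:
        return "verify_identity"
    if legal_hold:
        return "retain_under_legal_hold"
    due = deletion_due_date(received)
    if today > due:
        return "overdue"
    return f"delete_by:{due.isoformat()}"


# Illustrative dates only.
print(triage_deletion_request(date(2026, 3, 2), True, False, date(2026, 3, 10)))
```

Even when a legal hold blocks deletion, the candidate still needs a response, so the hold status should trigger a reply rather than silence.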

Make sure your vendors comply with data processing agreements (DPAs) by erasing candidate data as required. Generally, keep records only as long as needed for audits - typically 3–4 years. If a data breach occurs, preserve all related records, consult legal counsel immediately, and cooperate with investigations. Automated tracking can help you meet California's breach notification deadlines.

For AI tools, if you discover bias or compliance issues, pause their use immediately, investigate the problem, and document your corrective actions. NYC Local Law 144 requires you to retain bias audit results and candidate data for at least 3 years, while general compliance records should be kept for 4 years to support potential audits.

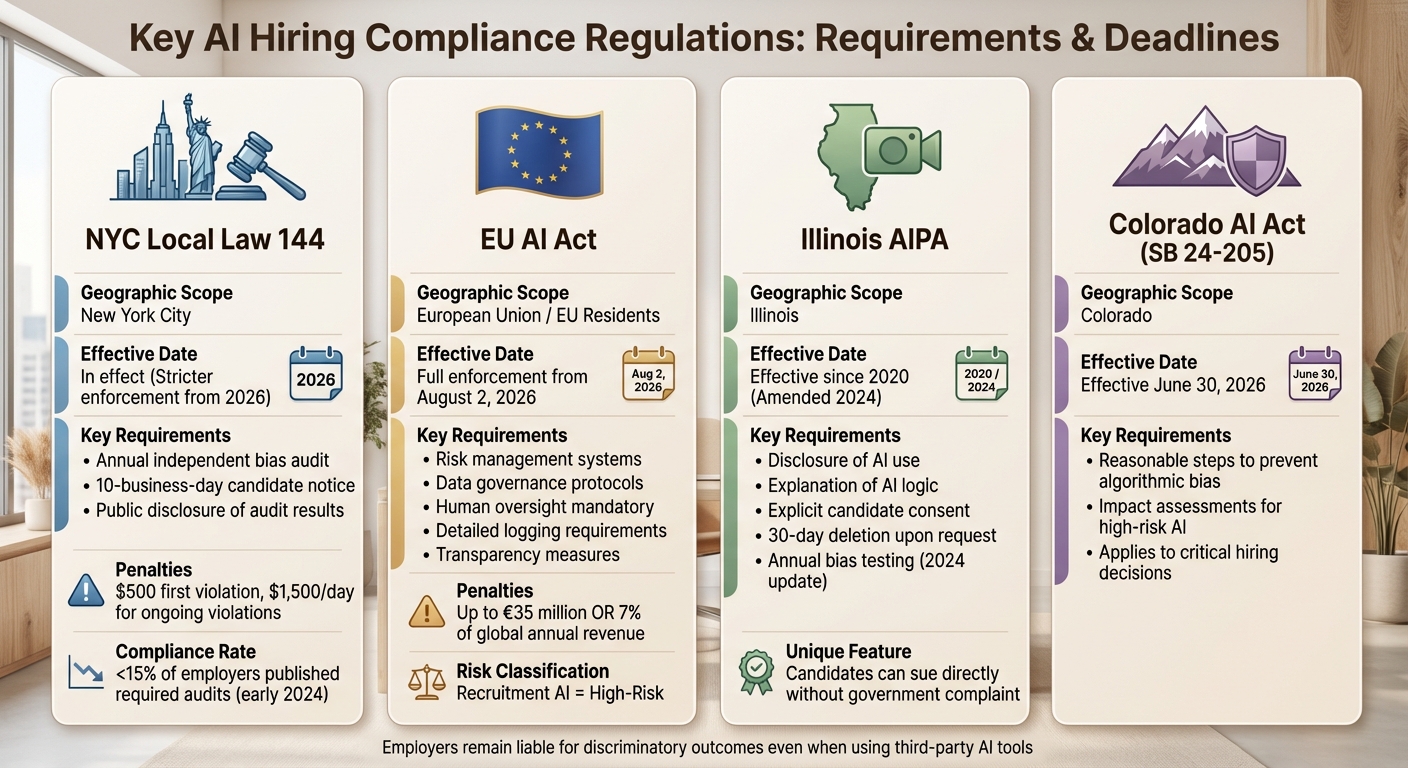

AI Regulations in Hiring: What You Need to Know

Figure: Key AI Hiring Compliance Regulations: Requirements and Deadlines Comparison

Using AI for tasks like resume screening, ranking candidates, or analyzing video interviews places your hiring practices under tight regulation. These rules build on earlier guidance about handling candidate data and addressing bias through proven diversity methods. Many regulators now categorize recruitment AI as a high-risk activity, similar to credit scoring or granting access to essential services. This means companies must maintain detailed documentation, conduct regular monitoring, and ensure human oversight.

The regulatory environment is complex and varies depending on where your candidates live. For instance, a developer in Brooklyn will have different protections compared to someone in Denver or an engineer in the EU. To stay compliant, you may need to adjust your processes based on the location of your applicants.
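One way to make location-based rules operational is a lookup from candidate location to the laws named in this article. The sketch below is illustrative and non-exhaustive: the location codes are hypothetical labels rather than an official scheme, and the mapping is a simplification that does not replace legal review.

```python
from typing import Dict, Iterable, List

# Simplified mapping based on the regulations discussed in this article.
# The location codes are hypothetical labels chosen for this example.
LAWS_BY_LOCATION: Dict[str, List[str]] = {
    "EU": ["GDPR", "EU AI Act"],
    "US": ["Title VII / ADA / ADEA (EEOC)"],
    "US-CA": ["CCPA/CPRA", "California FEHA"],
    "US-CO": ["Colorado AI Act"],
    "US-IL": ["Illinois AI video interview law"],
    "US-NY-NYC": ["NYC Local Law 144"],
}


def applicable_laws(location_codes: Iterable[str]) -> List[str]:
    """Collect laws for every code that describes a candidate's location.

    A candidate in Brooklyn would carry ["US", "US-NY", "US-NY-NYC"], so
    federal and city rules are both returned. Unknown codes add nothing.
    """
    laws: List[str] = []
    for code in location_codes:
        for law in LAWS_BY_LOCATION.get(code, []):
            if law not in laws:
                laws.append(law)
    return laws


print(applicable_laws(["US", "US-NY", "US-NY-NYC"]))
print(applicable_laws(["US", "US-CO"]))
print(applicable_laws(["EU"]))
```

Keying the lookup on the candidate's location, not the employer's headquarters, mirrors how these laws are scoped in the sections that follow.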

Here’s a breakdown of the major AI laws shaping how recruiters manage automated hiring tools across different regions.

Key AI Laws: EU AI Act, NYC Local Law 144, and Illinois AIPA

NYC Local Law 144

This law has been in force for some time, but stricter enforcement begins in 2026. Enforcement measures were tightened following a December 2025 audit, which revealed that 75% of 311 calls concerning the law were misrouted. The law mandates an annual independent bias audit, public disclosure of audit results, and a 10-business-day notice to candidates. Non-compliance penalties start at $500 for the first violation and can escalate to $1,500 per day for ongoing violations.

In early 2026, Summit Talent Partners faced $42,000 in remediation costs after a candidate complaint highlighted the absence of a public audit summary. An audit later revealed an impact ratio of 0.72 for one intersectional group, which fell below the 0.80 threshold that indicates potential adverse impact. This incident underscores the need for consistent audits and oversight.
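The 10-business-day notice window mentioned above is easy to miscount by hand. The following Python sketch computes the earliest date a tool could be used after notice is given. It rests on assumptions: it skips weekends and any holidays you pass in, and it starts counting from the day after notice. How the period is counted in practice should be confirmed with counsel.

```python
from datetime import date, timedelta
from typing import FrozenSet


def add_business_days(
    start: date,
    days: int,
    holidays: FrozenSet[date] = frozenset(),
) -> date:
    """Return the date that falls `days` business days after `start`.

    Saturdays, Sundays, and any dates in `holidays` are not counted.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


# Notice sent on Monday, March 2, 2026 (illustrative date).
notice_sent = date(2026, 3, 2)
print(add_business_days(notice_sent, 10))
```

For a notice sent on Monday, March 2, 2026, with no holidays supplied, the function returns Monday, March 16, 2026.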

The EU AI Act

Under the EU AI Act, most recruitment and selection AI tools are considered high-risk. This classification requires strict measures, including risk management, data governance, transparency, detailed logging, and human oversight. Full enforcement begins on August 2, 2026, with penalties for non-compliance reaching up to €35 million or 7% of global annual revenue. Companies hiring EU-based talent must implement robust risk management and oversight processes.

Illinois AIPA

Effective since 2020, Illinois' AIPA - formerly the AI Video Interview Act - was updated in 2024 to require annual bias testing for video AI tools. The law also mandates clear disclosures, explanations of AI logic, explicit candidate consent, and deletion of recordings within 30 days of a candidate's request. Illinois is unique in allowing candidates to directly sue employers for AI-driven discrimination without needing to file a government complaint first.

Colorado AI Act

Scheduled to take effect on June 30, 2026, the Colorado AI Act (SB 24-205) requires employers to take reasonable steps to prevent algorithmic bias and mandates impact assessments for high-risk AI used in critical hiring decisions.

Even if you rely on a third-party vendor for AI tools, you are still legally responsible for discriminatory outcomes. To reduce risk, request validation studies and bias audit results from vendors, and ensure contracts specify data retention and deletion terms.

AI Regulations Compared: Requirements and Deadlines

The table below outlines the core requirements, geographic scope, and effective dates for these key regulations.

| Law | Key Requirements | Applicability | Effective Dates |
|---|---|---|---|
| NYC Local Law 144 | Annual independent bias audit; 10-business-day candidate notice; public disclosure | Applies to tools significantly aiding NYC hiring | In effect (Stricter enforcement from 2026) |
| EU AI Act | Risk management; data governance; human oversight; detailed logging | High-risk classification for recruitment AI | Full enforcement from August 2, 2026 |
| Illinois AIPA | Disclosure; AI logic explanation; explicit candidate consent; 30-day deletion | Applies to AI-analyzed video/audio interviews in IL | Effective since 2020 (Amended 2024) |
| Colorado AI Act | Measures to prevent bias; mandatory impact assessments | Covers high-risk AI in critical hiring decisions | Effective from June 30, 2026 |

As of early 2024, fewer than 15% of NYC employers using AI hiring tools had published the required bias audit results. It’s crucial to review every AI tool in your hiring pipeline - whether it’s a resume screener, video analysis system, or chatbot - and assess whether it qualifies as an Automated Employment Decision Tool. Ensure you publish clear audit summaries on your careers page, provide candidates with an alternative selection process upon request, and use transparent "reason codes" (e.g., "Role requires X certification; not found") to explain AI screening outcomes.

In February 2026, Nexlify Fintech, a 450-employee company in New York City, successfully avoided penalties during a DCWP inquiry. Their approach included maintaining a Compliance Binder containing anonymized applicant data, intersectional impact ratio tables, and a signed attestation from the Chief People Officer confirming human override rights. This example highlights the importance of systematic documentation aligned with compliance checklists.

Compliance Checklist for Technical Recruiters

Recruiters face the constant risk of compliance missteps, and even a small oversight can lead to serious consequences. The difference between a smooth audit and a hefty remediation bill often lies in consistent and thorough documentation. Below is a practical checklist designed to help you audit your hiring process and address potential compliance risks before regulators step in.

10 Steps to Audit Your Hiring Process

1. Inventory Every AI and Automated Tool

Create a detailed map of every tool in your hiring process - resume parsers, chatbots, video interview platforms, ATS scoring features - and document its role in decision-making. If a tool significantly influences or replaces human decisions, it may fall under regulations like NYC Local Law 144 or the EU AI Act. Use a spreadsheet to track tool names, vendors, functions, and the jurisdictions where they are used.

2. Assess Jurisdictional Requirements

Understand which laws apply based on your candidates' locations, not your company's headquarters. For example, hiring a developer in Brooklyn triggers NYC Local Law 144, while sourcing an engineer in the EU requires GDPR compliance, including a Legitimate Interests Assessment for passive sourcing. Map out your applicant flow by geography and flag regions with stricter regulations.

3. Conduct Annual Independent Audits and Adverse Impact Testing

For roles in NYC, schedule yearly independent bias audits, ensuring the auditor has no financial ties to the tools being evaluated. Use the four-fifths rule to check for adverse impact: if a protected group’s selection rate is less than 80% of the highest group’s rate, it’s a red flag. Regular testing is crucial - by early 2024, fewer than 15% of NYC employers using automated tools had published the required audit results.

4. Implement Human-in-the-Loop Checkpoints

Ensure that every AI-generated recommendation is reviewed by a trained human with the authority to override decisions. Keep records of who reviewed each decision and whether any overrides occurred.

5. Update Transparency Notices for Each Jurisdiction

Provide clear, location-specific transparency notices. For example, NYC requires a 10-business-day advance notice before using automated tools, specifying which qualifications the tool evaluates. Illinois mandates explicit consent for AI-analyzed video interviews, while GDPR requires notices at first contact. Post these notices on your careers page and in candidate communications.

6. Enforce Data Minimization and Retention Limits

Only collect data directly related to job qualifications and ensure sensitive attributes like race, health status, or marital status are excluded from your AI systems. Set up automated deletion workflows to comply with jurisdictional retention limits, and schedule reminders to purge unnecessary data once retention periods expire.

7. Perform Vendor Due Diligence

Request validation studies and security certifications from vendors. Contracts should include GDPR Article 28 data processing terms and prohibit unauthorized model training. Ask vendors for "model cards" that outline data sources, fairness metrics, and explainability features.

"The vendor said it was unbiased is not a defense." – Pertama Partners

8. Establish Clear Accommodation Pathways

Make it easy for candidates to request alternative assessments, meeting ADA requirements and emerging opt-out rights under CCPA/CPRA. Include this information in candidate notices and on your careers page, offering simple options like a dedicated email or form.

9. Maintain Audit Trails with Reason Codes

Log every automated recommendation with specific reason codes, such as "Advanced due to verified Python portfolio" or "Role requires AWS certification; not found." Include details like model versions, candidate notices, and human override actions. When sourcing developer data from platforms like GitHub, ensure your AI evaluates verified attributes, such as certifications or years of experience, rather than proxies like school names. Regularly review these logs as part of your compliance process.

10. Schedule Recurring Compliance Reviews

Adopt a continuous review process. Conduct adverse impact tests regularly, refresh bias audits annually, and review tool inventories, vendor contracts, and candidate notices quarterly. Assign a compliance owner to oversee and sign off on each review cycle. A consistent review schedule helps catch issues early, minimizing risks and costs.

"Compliance is not a brake on progress; it's your speed governor - letting you move fast without flying blind." – Ameya Deshmukh, EverWorker

This checklist should evolve with your hiring tools and the regulatory landscape. By updating it quarterly, you can build a proactive system that identifies and resolves compliance risks before they escalate into fines or legal challenges.
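Steps 4 and 9 of the checklist call for an audit trail that captures reason codes, model versions, and human overrides. The sketch below shows one possible record shape serialized as a JSON line. It is an illustration under assumptions: the class name, field names, and identifiers are hypothetical and not taken from any particular ATS.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ScreeningDecision:
    """One automated recommendation plus its human review outcome."""

    candidate_id: str
    tool_name: str
    model_version: str
    recommendation: str  # e.g. "advance" or "reject"
    reason_code: str
    reviewed_by: Optional[str] = None  # reviewer ID, None if not yet reviewed
    overridden: bool = False


def to_log_line(decision: ScreeningDecision, now: Optional[datetime] = None) -> str:
    """Serialize a decision as one JSON line with a UTC timestamp."""
    record = asdict(decision)
    record["logged_at"] = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps(record, sort_keys=True)


# Hypothetical values for illustration.
decision = ScreeningDecision(
    candidate_id="c-001",
    tool_name="resume-screener",
    model_version="1.4.2",
    recommendation="reject",
    reason_code="Role requires AWS certification; not found",
    reviewed_by="recruiter-17",
    overridden=True,
)
print(to_log_line(decision))
```

Writing these lines to append-only storage keeps the record of who reviewed each decision, and whether it was overridden, intact for later audits.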

How daily.dev Recruiter Handles Compliance

daily.dev Recruiter takes a developer-first approach to compliance, centering its process on a double opt-in consent system. This ensures that every step of candidate interaction is transparent, voluntary, and aligned with GDPR and CCPA regulations. Developers first agree to be discoverable for opportunities and later actively confirm their interest in a specific role before any contact is made. This method guarantees that consent is specific, informed, and can be withdrawn at any time, meeting GDPR standards.

The platform is built on clear principles for handling candidate data. When developers opt in, they are provided with detailed information about what data is shared, how it will be used, and who will have access to it. Transparency is treated as a fundamental right. Recruiters can only view profiles of developers who have explicitly agreed to engage, and candidates retain full control over their data. They can withdraw consent or request data deletion whenever they choose, aligning with GDPR's Right to Deletion and the CCPA's data access and deletion requirements.

daily.dev Recruiter also avoids the pitfalls of automated decision-making and opaque algorithms. Every match is human-reviewed and initiated by the candidate, ensuring compliance risks tied to AI-driven screening are eliminated. The platform follows data minimization principles, allowing recruiters to see only the information that developers choose to share. This ensures that all data collected is directly relevant to the hiring process, reducing unnecessary risks.

Additionally, audit trails are maintained for every opt-in, match, and withdrawal of consent. These detailed records provide a complete regulatory history, showcasing how compliance is applied in data-driven recruitment.

"Transparency is not a banner in the footer; it's a candidate right." – Ameya Deshmukh, EverWorker

Conclusion

Compliance in technical recruiting is no longer just a box to check - it's a strategic edge that can define success in today's hiring landscape. What was once a theoretical debate about "responsible AI" has evolved into enforceable regulations with real financial stakes.

The upside? Staying ahead of compliance doesn't mean slowing down. As Ameya Deshmukh from EverWorker explains:

"Compliance is not a brake on progress; it's your speed governor - letting you move fast without flying blind."

By prioritizing skills-based evaluations, maintaining human oversight, and ensuring transparent audit trails, you not only strengthen your hiring process but also improve your talent pipeline. Developers, in particular, place a high value on data privacy and transparency, often rewarding these efforts with higher application completion rates and better candidate satisfaction scores (NPS).

To get started, focus on the basics:

- Standardize screening criteria around validated, job-relevant skills using AI-driven parsing.

- Ensure meaningful human oversight of AI-driven decisions.

- Set up your ATS to log every decision with clear reason codes.

- Conduct monthly adverse impact tests to detect model drift early.

- Require independent bias audits from AI tool vendors.

Aim to establish a compliance framework within 30 days by assigning clear roles, updating candidate notices, and implementing ongoing monitoring practices. Keep in mind that regulations are tightening - California's FEHA amendments took effect in October 2025, and Illinois HB-3773 rolls out in January 2026. Companies that treat compliance as a dynamic, operational framework - not just a static policy - will be better positioned to attract top technical talent while avoiding legal and reputational risks.

The choice is clear: build trust through transparency and proactive measures, or risk falling behind as compliance becomes a central battleground in AI-driven hiring. Use these strategies to refine your approach and stay ahead in the competitive world of tech recruitment.

FAQs

Which hiring laws apply based on where my candidates live?

Hiring regulations vary based on where candidates live. For instance, NYC Local Law 144 mandates bias audits and notifications for job applicants in New York City. In the European Union, the GDPR oversees how data is handled and ensures candidates' rights are protected. Meanwhile, at least 12 states across the U.S. are rolling out laws addressing AI in hiring, and federal guidelines, such as those from the EEOC, influence compliance on a national scale.

When does an AI tool count as an automated hiring decision?

An AI tool is considered to be making an automated hiring decision when it processes candidate data on its own and either directly determines or heavily influences employment outcomes - without any human intervention. This becomes particularly important when these decisions carry significant consequences for candidates, as outlined in regulations such as GDPR and comparable legal frameworks.

What records should I keep to prove compliance in an audit?

To show compliance during an audit, keep detailed records of the following: candidate data, consent notices, adverse impact testing results, bias audit reports, human review documentation, and vendor contracts. These documents not only confirm your commitment to regulations but also highlight transparency in your hiring practices.
