Hiring backend engineers who can deliver production-ready code is critical to avoiding costly mistakes and ensuring smooth operations. The right engineer doesn't just write code - they design systems that handle real-world challenges, mitigate risks, and support business goals. Here's what you need to know:

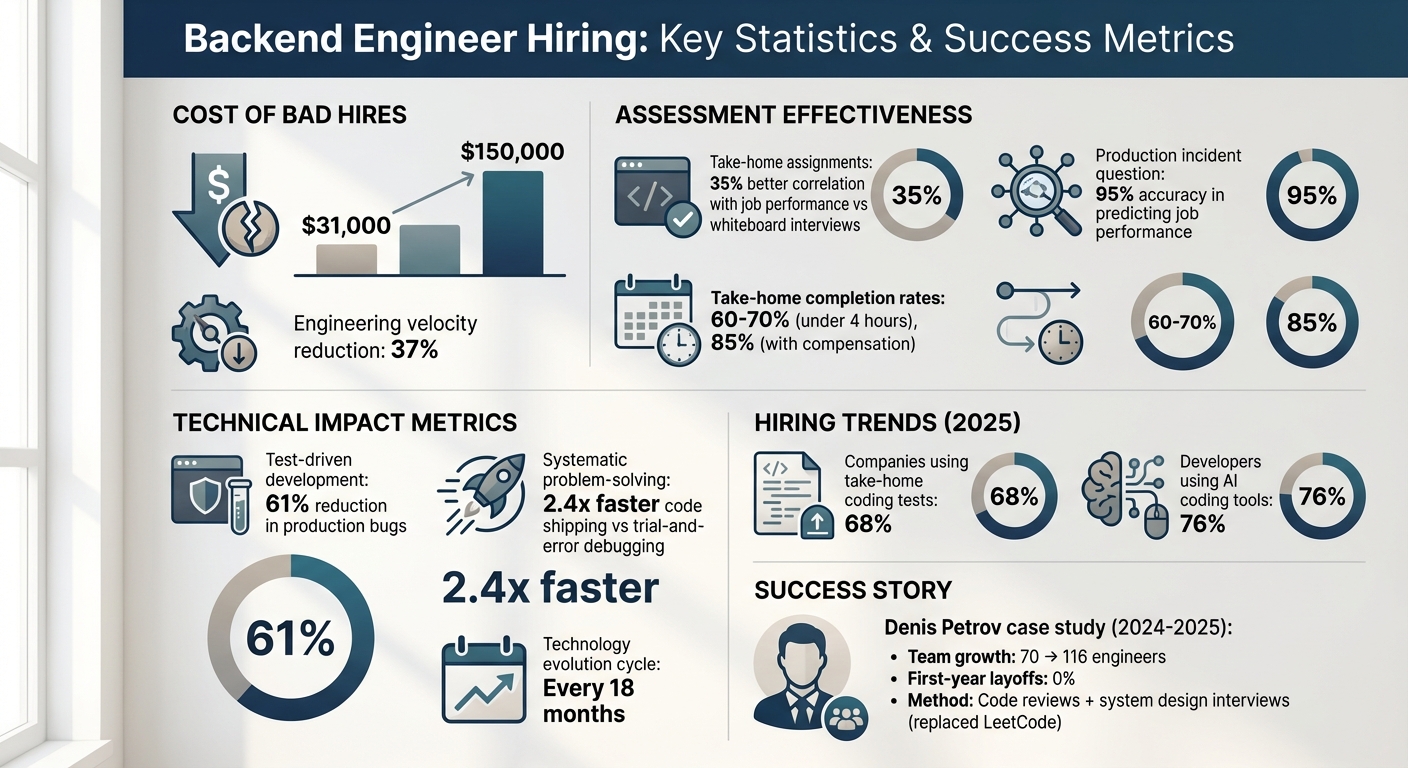

- Bad hires are expensive: Costs range from $31,000 to $150,000 due to wasted salaries, rework, and reduced productivity.

- Key skills to look for: API design, database optimization, observability tools, debugging workflows, and DevOps practices like CI/CD.

- Soft skills matter: Ownership, clear communication, and problem-solving under pressure are essential.

- Effective screening methods: Focus on GitHub reviews, code review sessions, and real-world scenarios instead of abstract puzzles.

- System design expertise: Test scalability knowledge and ability to manage trade-offs in system architecture.

- Behavioral interviews: Ask about past production incidents to gauge accountability and technical decision-making.

To hire the best, combine practical assessments like take-home assignments and pair programming with behavioral and system design interviews. This ensures you find engineers who deliver reliable, maintainable code that drives business success.

::: @figure  {Backend Engineer Hiring: Key Statistics and Success Metrics}

{Backend Engineer Hiring: Key Statistics and Success Metrics}

What Makes a Backend Engineer Production-Ready?

Being production-ready means more than just writing functional code - it’s about preparing for the unexpected. Engineers at this level design systems that can handle real-world challenges, like third-party API failures, sudden traffic surges, or database bottlenecks. They actively identify risks such as race conditions, memory leaks, and cascading failures, ensuring systems remain stable under pressure .

When things go wrong, production-ready engineers don’t panic - they act. They dive into logs, apply temporary fixes as needed, and focus on implementing long-term solutions to prevent the same issues from happening again .

Technical Skills That Matter

To ship reliable code, there are certain technical skills every backend engineer should master. API design and database optimization are at the core. Engineers need to be fluent in REST and GraphQL APIs, including implementing OAuth2 authentication, managing rate limits, and handling errors across service boundaries . On the database side, they should know how to design efficient schemas, configure high availability for systems like PostgreSQL or MySQL, and fine-tune queries for performance .

Observability is another key area. Tools like Prometheus, Grafana, and Datadog help engineers stay ahead of potential problems rather than just reacting to them . They also need experience setting Service Level Objectives (SLOs), running load tests with tools like k6 or JMeter, and implementing circuit breakers to maintain system responsiveness . Security fundamentals are equally critical. Engineers should be familiar with OWASP standards, secrets management tools like Vault or KMS, and compliance requirements such as GDPR. DevOps skills - like creating CI/CD pipelines with GitHub Actions or Jenkins, using Terraform for Infrastructure as Code, and managing containers with Docker and Kubernetes - round out the technical toolkit .

But technical expertise alone isn’t enough. Communication and accountability play a huge role in managing production issues effectively.

Soft Skills for Production Environments

In high-pressure situations, soft skills like ownership and clear communication are indispensable. The best engineers take full responsibility for their actions, using first-person accountability to explain how they resolved incidents . They also connect technical issues to business outcomes. For example, instead of saying, “I improved code quality,” they might explain how refactoring an authentication service reduces security risks that could compromise customer data .

Composure during outages is another hallmark of a production-ready engineer. They view failures as opportunities to improve, focusing on long-term fixes rather than quick patches . Interestingly, asking candidates to walk through a past production incident has proven to be a strong predictor of job performance, with a 95% success rate . And in a field where technologies evolve every 18 months, the ability to learn quickly is non-negotiable. Engineers who can systematically break down problems tend to ship code 2.4 times faster than those who rely on trial-and-error debugging .

"They've played failure scenarios in their head before someone asked them to. That habit is the difference between engineers who prevent incidents and engineers who respond to them."

- CodexLab, Author, Stackademic

How to Screen Backend Engineering Candidates

When screening backend engineers, it’s essential to focus on their ability to build and maintain production-ready systems. Resumes alone often fail to capture the depth of a candidate's experience, so the screening process should prioritize real-world accomplishments over theoretical knowledge. The key is to identify engineers who not only write functional code but also understand how their work impacts live systems. This involves assessing their experience with deployed code, their approach to technical trade-offs, and their ability to learn from past challenges.

Skills-Based Screening Methods

One of the most effective ways to evaluate backend engineers is to focus on their practical skills. Instead of relying on traditional resume reviews, try these approaches:

- GitHub Reviews: Spend 10–15 minutes reviewing their repositories to gauge coding habits. Look for meaningful commit histories, clear documentation, and evidence of testing practices. These details can reveal how they approach real-world projects.

- Code Review Sessions: In a 15–20 minute session, ask candidates to analyze a code snippet. Focus on how they address issues like thread safety, error handling, or I/O efficiency. This provides insight into their problem-solving mindset and attention to detail.

Avoid abstract coding puzzles that don’t reflect real-world challenges. Instead, present scenarios that mimic production environments. For instance, ask them to spot potential issues in a code snippet, such as race conditions or inefficient database queries (e.g., N+1 problems). Follow up with questions like, “Why did you choose X over Y?” to understand their reasoning. Strong candidates will discuss trade-offs - such as latency, operational costs, or team familiarity - rather than offering surface-level answers based on tutorials.

Evaluating Production Experience

True production experience goes beyond the number of years listed on a resume. It’s about having shipped code that serves actual users and being able to articulate the impact of technical decisions. For example, a standout candidate won’t just mention API failures - they’ll explain how a broken checkout system led to a $28,000 revenue loss and what they learned from the situation.

Look for familiarity with tools and processes that are critical in production environments:

- CI/CD Pipelines: Can they describe how they’ve used continuous integration and deployment tools to streamline releases?

- Observability Tools: Ask about their experience with monitoring platforms like Grafana or Datadog. Top candidates will share specific examples of how these tools helped them identify and resolve issues before they escalated.

- Debugging Workflows: Probe their approach to troubleshooting. For instance, how do they handle debugging a critical issue at 3 AM? Can they systematically navigate logs and pinpoint the root cause?

Be mindful of red flags during these discussions. Candidates who blame "messy codebases" or fail to explain their own decisions may struggle with accountability and ownership. On the other hand, those who can describe specific debugging sessions or deployment challenges demonstrate the kind of hands-on experience you’re looking for.

Next, we’ll explore how to evaluate system design and scalability skills to complete your assessment process.

How to Assess System Design and Scalability Skills

System design interviews are a window into a backend engineer's ability to build systems that can handle real-world production traffic. These evaluations focus on how candidates manage trade-offs, pinpoint bottlenecks, and design systems that can endure heavy loads. As Arslan Ahmad, Founder & CEO of Design Gurus, explains:

"Nailing the algorithmic round gets you the job; nailing the system design round gets you the Senior/Staff-level compensation."

When evaluating candidates, focus on four key areas: Problem Solving (how well they scope ambiguous requirements), Technical Design (application of components like load balancers or message queues), Trade-off Analysis (e.g., SQL vs. NoSQL, consistency vs. availability), and Communication (their ability to clearly explain complex decisions) . Strong candidates will start by asking clarifying questions - things like user metrics, read/write ratios, and latency needs - before jumping into diagrams. This approach builds on their past production experience while highlighting their understanding of system design and scalability.

System Design Interview Questions

Some common system design scenarios include:

- URL shortener: Tests for handling read-heavy systems and caching strategies. Candidates should calculate metrics like 100 million new URLs per month, translating to approximately 40 writes per second and 4,000 redirects per second (assuming a 100:1 read-to-write ratio) .

- News feed: Explores fan-out models, especially when differentiating between regular users and high-profile accounts.

- Ride-sharing app: Assesses geospatial indexing and real-time location updates .

Another example is designing an API rate limiter, which evaluates distributed state management and the choice between "fail-open" or "fail-closed" behavior during failures . Candidates should justify their technology decisions based on the constraints of the system .

While system design is essential, verifying a candidate's scalability expertise is equally important.

Testing Scalability Knowledge

To gauge scalability skills, focus on practical aspects like monitoring, deployment strategies, and failure recovery. For instance, in a real-time messaging system, candidates should understand that each WebSocket connection uses about 10KB of server memory. This means 1 million concurrent connections would require roughly 10GB of RAM per gateway node .

Discuss deployment trade-offs, such as balancing speed with resource duplication . Senior candidates should recognize that exactly-once processing is often impractical and instead design for at-least-once processing using idempotency keys . Lauren from InterviewPal sums it up well:

"Senior engineers design systems they'd actually want to be on-call for."

Top-tier candidates should provide clear metrics - like error rates or revenue impact - when describing their solutions. They should also demonstrate the ability to identify root causes (e.g., N+1 queries or connection pool exhaustion) and propose long-term fixes rather than temporary workarounds .

How to Conduct Behavioral and Debugging Interviews

After evaluating system design and scalability, it’s just as important to understand how candidates handle real-world production stress. A simple yet effective question like, "Tell me about a production incident you handled. Walk me through it," has been shown to predict on-the-job performance with 95% accuracy . This question reveals how candidates think and act under pressure.

The best candidates provide clear and detailed accounts, including timestamps, error rates, and specific technical causes. For example, one standout candidate described a 2 AM checkout failure where Datadog revealed a 34% error rate caused by HikariCP pool exhaustion from an N+1 query. They calmly explained how they increased the pool size and applied a hotfix, minimizing downtime to 47 minutes and limiting losses to 800 transactions . On the other hand, weaker candidates often deflect blame to "messy codebases" or offer vague explanations, relying on generic team efforts without metrics to back up their claims .

Testing Production Debugging Skills

An interactive role-playing exercise can be a powerful tool to evaluate debugging skills. In this exercise, candidates are asked to request metrics or logs, testing their ability to filter out irrelevant data and focus on what matters most . Dan Slimmon, an experienced SRE, emphasizes:

"I'm much more interested in how you go about investigating the problem than in how far you get" .

To raise the stakes, introduce constraints mid-interview, such as "Assume logs are unavailable" or "This issue only occurs in production." This tests how well candidates adapt to unexpected challenges. The goal is to see if they prioritize forming testable hypotheses rather than making random code changes. At the end, ask them to summarize their findings as if they were updating a colleague. This ensures they can communicate technical details effectively under pressure .

These exercises naturally transition into behavioral assessments, where the focus shifts to understanding how candidates make decisions during high-pressure situations.

Behavioral Interview Techniques

Behavioral questions can provide deeper insights into a candidate’s critical thinking and problem-solving abilities. For example, asking "What could go wrong with this approach?" helps gauge their ability to anticipate failure modes . Similarly, questions like "How did users experience this issue?" reveal whether they can connect technical failures to business impacts, such as lost revenue or abandoned carts .

Strong candidates back their answers with evidence, referencing logs, metrics, or timestamps instead of relying on memory alone. Techniques like the "5 Whys" are also useful for probing deeper into their thought processes. For instance, a candidate might start with "The database was slow" but ultimately trace the issue to a root cause like "no query count assertion in tests" .

These behavioral insights complement the debugging assessments, giving you a complete picture of how candidates handle complex, real-world challenges.

Technical Assessment Methods That Work

Once you've assessed a candidate's debugging abilities and behavioral traits, it's time to evaluate how they perform in actual coding scenarios. This step focuses on how candidates write code, collaborate with others, and apply their expertise in realistic situations. Research shows that take-home assignments correlate 35% more closely with job performance than traditional whiteboard interviews . The key to success is aligning the assessment with your team's tech stack. For instance, if your team uses Node.js, PostgreSQL, and Docker, test those skills directly instead of relying on abstract puzzles .

By 2025, 68% of companies have incorporated take-home coding tests into their hiring process . Assignments that take less than four hours to complete have a 60–70% completion rate, which can increase to 85% when candidates are compensated . For senior roles, offering compensation between $200 and $500 shows respect for their time and greatly improves participation rates . However, Scott Keller has pointed out that overly long assignments, like those spanning two days, can negatively impact the candidate experience .

Follow-up is crucial. A live "forensic" interview, where candidates explain their architectural decisions and trade-offs, provides deeper insights into their thought process . This step is particularly important as 76% of developers now use AI tools for coding assistance . Including a debugging exercise - where candidates locate and resolve issues in existing code - offers a chance to evaluate how they navigate and reason through complex systems . These methods not only confirm technical skills but also ensure candidates can deliver maintainable, production-quality solutions. Below are three effective assessment methods: take-home assignments, pair programming, and job simulations.

Take-Home Coding Assignments

Take-home assignments are most effective when they closely resemble real-world development tasks. Examples include creating a REST API endpoint, debugging an integration, or optimizing a database query . To streamline the process, provide boilerplate code so candidates can focus on solving the core problem rather than setting up the basics . Time limits should be clear and role-specific: 2–3 hours for junior engineers (L3), and 3–4 hours for mid-level (L4) and senior engineers (L5+) .

Pair Programming Sessions

Pair programming sessions offer a glimpse into a candidate's real-time problem-solving and collaboration skills. Instead of relying on whiteboards, use collaborative IDEs like VS Code, and allow candidates access to tools like Google, StackOverflow, or AI assistants if these are part of your team's workflow . Structure the session around practical tasks, such as refactoring a component, fixing an API endpoint, or optimizing a database query. Keep the session under 90 minutes to maintain focus and engagement . Encourage candidates to explain their decisions to demonstrate their ability to navigate complex codebases effectively.

Job Simulation Exercises

Job simulations mimic real production challenges, making them an excellent way to assess candidates' readiness for the role. For senior positions, this might involve reviewing a pull request or drafting a technical design document . Present tasks in formats similar to those used on the job, such as Jira or Asana-style templates . These exercises are particularly effective because they emphasize practical application over theory, helping you identify candidates who can thrive in a production environment. Simulations also highlight whether candidates incorporate essential production-ready elements - like API versioning, error handling, and security measures - into their workflow .

Conclusion

Hiring backend engineers who can consistently ship production-ready code requires a focus on practical skills rather than purely theoretical knowledge. The best way to evaluate candidates is by combining take-home challenges with pair programming sessions, which together provide insight into their ability to work independently and collaborate effectively . Look for candidates who demonstrate "production thinking" by addressing key aspects like thread safety, I/O efficiency, and observability in their work.

The stakes of hiring the wrong person are high. A bad hire can cost around $31,000 and reduce engineering velocity by as much as 37% . Real-world examples back this up: between 2024 and 2025, Denis Petrov successfully scaled his engineering team from 70 to 116 members without any first-year layoffs. His secret? Replacing generic LeetCode-style tasks with code reviews and system design interviews that reflected the company's actual production environment .

"A 3-year engineer who's built APIs can be better than a 10-year engineer who's maintained legacy systems. Hire for fundamentals, not your exact stack." - daily.dev

The best candidates don’t just deliver results - they justify their technical choices. Top-tier engineers take ownership of incidents, use measurable metrics (like reducing P99 latency from 4.2 seconds to 800 milliseconds), and grow from their failures . To assess these skills, present broken code to test debugging abilities, and use real-world system design scenarios to evaluate how they tackle scalability and handle failure modes.

Engineers who prioritize test-driven development can reduce production bugs by 61% . Look for individuals who value code quality, thorough documentation, and testing over raw speed. These traits are far better indicators of long-term project success than any single technical assessment. By integrating these strategies into your hiring process, you can build a team of backend engineers who consistently deliver reliable, production-ready code.

FAQs

What signals show a backend engineer has shipped production code?

A backend engineer with experience shipping production code showcases their skill in designing systems capable of managing practical challenges, such as sudden traffic surges or unexpected outages. They bring expertise in deploying and maintaining systems that scale effectively, along with a solid history of delivering dependable, high-quality software tailored to meet business objectives.

How can I test debugging and on-call readiness in an interview?

Evaluating a candidate's debugging skills and on-call readiness requires more than just theoretical questions - it’s about seeing how they perform under pressure with realistic scenarios. Presenting them with real-world incidents or past challenges can provide a clear picture of their approach to troubleshooting and problem-solving.

Pay attention to how they diagnose issues, especially when faced with incomplete or ambiguous information. Do they ask the right questions? Can they identify the root cause without getting sidetracked by irrelevant details? Their ability to navigate dead ends and pivot to alternative solutions is a key indicator of their resilience.

It’s also important to assess their communication skills. Can they clearly explain their thought process as they work through the problem? Effective communication is critical when collaborating with teams during high-stakes production issues.

Practical exercises, like asking them to simulate handling a specific incident or solve a hypothetical system failure, can reveal their ability to prioritize tasks and manage stress. These exercises offer insight into how they would handle real on-call responsibilities and production challenges.

What’s the best way to assess system design and scalability skills?

To evaluate a candidate's system design and scalability skills, focus on their ability to build systems that are scalable, secure, and easy to maintain. Practical exercises can be a great way to test this - ask them to design something like microservices or tackle challenges involving high traffic loads. Pay attention to how they explain and justify their design choices.

You can also introduce problem-solving scenarios that test their understanding of distributed systems, database management, and performance optimization. Look for insights into their experience with scalability patterns and how they approach system resilience under stress. These exercises reveal not just technical know-how but also their ability to think critically and plan for long-term system reliability.

.png)