Evaluating cultural fit in technical hiring can improve team dynamics and retention, but it’s often misused, leading to biased decisions. Here's how to make the process fair and effective:

- Define cultural fit clearly: Focus on shared company values like ownership or collaboration, not personal preferences like hobbies or communication styles.

- Document your team’s culture: Outline specific practices (e.g., async communication, no-meeting days) to provide candidates with a clear understanding.

- Use structured interviews: Standardize questions, scoring, and criteria to minimize bias and improve hiring accuracy.

- Incorporate interview scorecards: Translate values into measurable behaviors and use consistent evaluation methods.

- Leverage skills-based assessments: Test technical abilities before interviews to focus on merit, not resumes or personal backgrounds.

- Assemble diverse hiring panels: Include varied perspectives to challenge bias and ensure balanced decisions.

- Shift to cultural add: Assess how candidates enhance your team with new perspectives rather than just fitting in.

Figure: Cultural Fit Hiring Statistics: Impact on Team Performance and Retention

1. What Cultural Fit Means in Technical Hiring

Defining cultural fit clearly is a critical step toward fair, evidence-driven hiring decisions, especially in technical roles. At its core, cultural fit is the alignment between an employee's values, beliefs, and behaviors and those of the company. For technical teams, the concept becomes more tangible when you look at how engineers collaborate day to day. It shows up in practices like async-first communication (relying on written documentation instead of meetings), protecting uninterrupted coding time, and whether engineers help shape product strategy or focus solely on implementation.

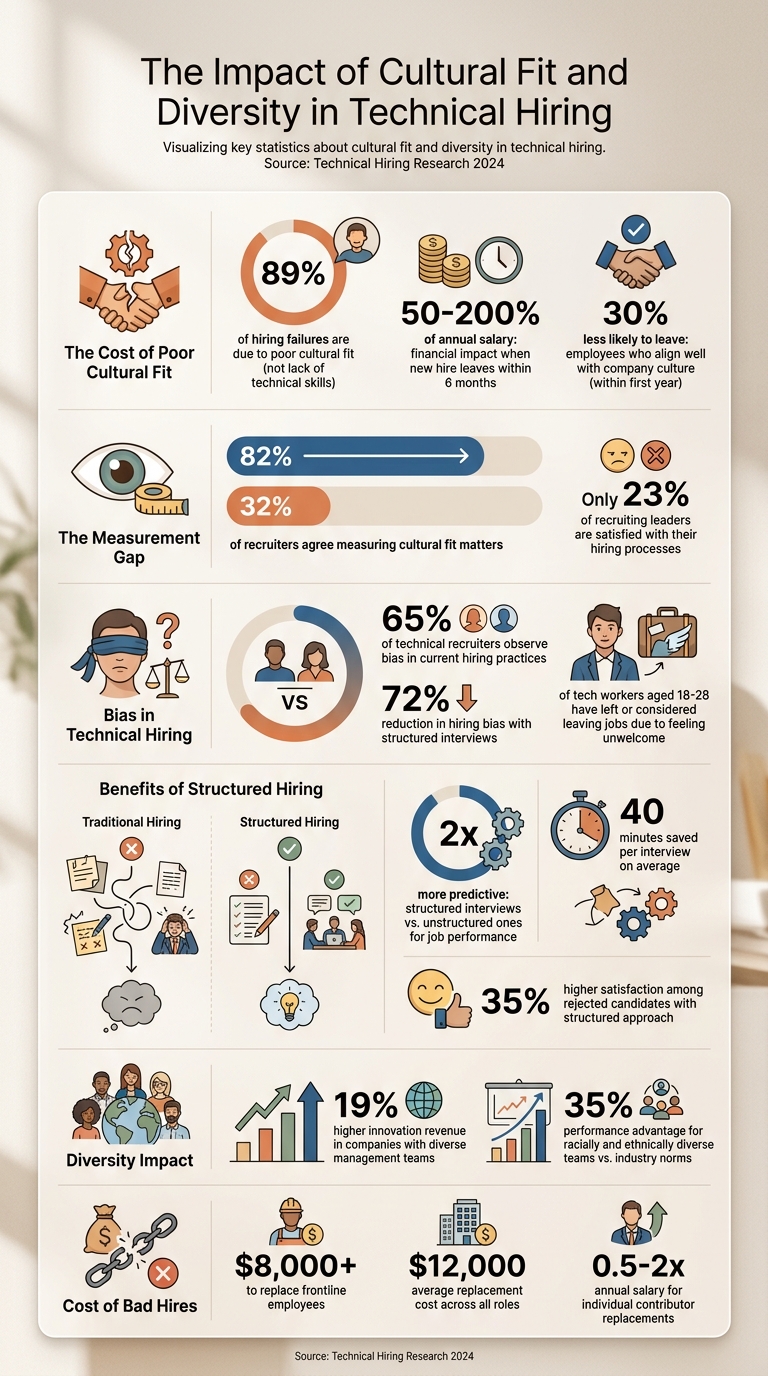

89% of hiring failures are due to poor cultural fit rather than a lack of technical skills. The financial impact of a new hire leaving within six months can range from 50% to 200% of their annual salary, factoring in recruitment costs and lost productivity. On the other hand, employees who align well with the company culture are 30% less likely to leave within their first year.

Despite its importance, there’s a disconnect: while 82% of recruiters agree that measuring cultural fit matters, only 32% actively do it. This gap often exists because companies haven’t defined their culture in actionable terms.

1.1. Core Values vs. Personal Preferences

Understanding the distinction between core values and personal preferences is key to building a strong, effective team. Core values are the enduring principles that shape how your team approaches decisions and problem-solving. Personal preferences and attributes, on the other hand, include things like hobbies, communication styles, or educational background.

Jason Wodicka from Karat argues that meaningful cultural values must be specific enough to spark disagreement. Generic statements like "be kind" or "value innovation" offer no actionable guidance for hiring. Instead, core values should address real-world trade-offs. For instance, does your team prioritize "shipping speed" over "technical elegance"? Statements like this clarify decision-making priorities.

To make these values actionable, translate them into observable behaviors. Here’s how core values might look in practice during interviews:

| Value | Observable Behavior |
|---|---|
| Ownership | Shares examples of taking responsibility for failures, not just successes |
| Intellectual Honesty | Describes instances of changing their mind when presented with new evidence |
| Customer Focus | Brings up end-user needs during the interview without being prompted |
| Collaboration | Explains how they incorporated others’ ideas alongside their own contributions |

"Vague values ('we value innovation') are useless for hiring. Translate each value into observable behaviors."

- Aisha Malik, People & Leadership Editor at EntrepreneurBytes

1.2. How to Document Your Engineering Culture

Before you can assess cultural fit in candidates, you need to articulate what your culture actually is. Start by surveying your engineering team at all levels to uncover the values and norms that guide their day-to-day decisions. Use this feedback to document the behaviors that contribute to the team’s success.

This documentation should describe specific practices, such as no-meeting days to protect deep work, blameless postmortems for learning from mistakes, and a preference for async-first communication over real-time collaboration. Concrete practices like these give a clearer picture of your culture than vague mission statements.

For example, PostHog publicly shares a handbook that outlines its commitment to transparency, including publishing all salaries. Linear enforces a "deep work" culture by eliminating standups and minimizing recurring meetings so that engineers have uninterrupted time for coding. These aren’t arbitrary preferences - they’re structured workflows that candidates can evaluate before joining.

The ultimate goal is to create what Wodicka calls a "yardstick" for decision-making. When choosing between candidates, your documented culture should steer you toward the person whose behaviors align with your team’s way of working, not just the one who feels familiar.

The next step is to integrate these cultural markers into your interview process.

2. How to Structure an Objective Interview Process

Once you've clearly documented your engineering culture, the next step is to create a standardized interview process. This ensures candidates are assessed fairly and consistently.

A structured interview process reduces bias in technical hiring by evaluating every candidate the same way: identical questions, a consistent scoring system, and predefined qualifications. It also helps counteract confirmation bias, where interviewers unconsciously seek evidence that supports their first impressions.

Studies show that 65% of technical recruiters observe bias in their current hiring practices, and that implementing structured interviews can reduce hiring bias by as much as 72%. Beyond fairness, structured processes also save time, about 40 minutes per interview on average. Even candidates who aren't selected report being 35% more satisfied with this approach than with informal, instinct-based evaluations.

"Structured interviews are one of the best tools we have to identify the strongest job candidates."

- Dr. Melissa Harrell, former hiring effectiveness expert, Google

2.1. Standardize Your Interview Format

Start by conducting a job analysis that outlines the role’s core responsibilities, challenges, and success factors. This ensures that your interview questions remain focused on the job itself rather than personal biases. Use a mix of behavioral questions (e.g., "Tell me about a time you shipped a change that affected production") and hypothetical scenarios (e.g., "How would you respond if a competitor began charging for a feature we offer for free?") to evaluate both past achievements and strategic thinking.

Keep follow-up questions consistent, and avoid giving any one candidate extra hints or explanations. Use a 1–5 rating scale with behavioral anchors tied to observable actions, such as “1: misses fundamentals” or “5: demonstrates clear tradeoffs and strong ownership”.

To further ensure fairness and clarity, integrate interview scorecards into your process.

2.2. Create and Use Interview Scorecards

Building on a structured format, interview scorecards turn your cultural values into measurable criteria for consistent evaluation. Identify 6–12 key competencies that directly influence success in the role, such as teamwork, independence, or adaptability. Give each competency a clear scoring scale (typically 1–5) with specific behavioral examples for each level.

For instance, instead of vaguely noting that a candidate "seemed collaborative", a scorecard might define:

- 3 = "Explained technical concepts clearly but required follow-up."

- 5 = "Detailed a specific conflict resolution strategy and its successful outcome."

Adjust the weight of each competency based on its importance to the role. For example, collaboration might account for 25% of the score for a leadership position but only 10% for an entry-level role.

Interviewers should complete scorecards immediately after each interview, while details are still fresh and before group discussion can introduce groupthink. Include sections for written observations that give concrete examples supporting the numerical ratings. When the hiring committee meets, every "Hire" or "No hire" decision should tie directly to evidence recorded against the scorecard’s competencies.
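The sketch below makes the "evidence for every rating" rule concrete. It is a minimal Python scorecard record that refuses a score without a written observation. It assumes the 1–5 behaviorally anchored scale described above. The class and field names (`Rating`, `Scorecard`, `evidence`) are hypothetical and not tied to any particular applicant tracking system.

```python
from dataclasses import dataclass, field


@dataclass
class Rating:
    competency: str
    score: int     # 1-5 on a behaviorally anchored scale
    evidence: str  # concrete observation that justifies the score

    def __post_init__(self) -> None:
        # Reject out-of-range scores and scores with no supporting note.
        if not 1 <= self.score <= 5:
            raise ValueError(f"{self.competency}: score must be 1-5, got {self.score}")
        if not self.evidence.strip():
            raise ValueError(f"{self.competency}: a written observation is required")


@dataclass
class Scorecard:
    candidate_id: str
    interviewer: str
    ratings: list[Rating] = field(default_factory=list)

    def add(self, competency: str, score: int, evidence: str) -> None:
        # Validation happens in Rating, so invalid entries are never stored.
        self.ratings.append(Rating(competency, score, evidence))

    def is_complete(self, required: set[str]) -> bool:
        """True once every required competency has at least one rating."""
        return required <= {r.competency for r in self.ratings}
```

A debrief could then refuse to start until `is_complete` returns `True` for every panelist's card. This mirrors the rule that decisions must trace back to recorded evidence.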

3. Pre-Interview Assessments That Reduce Bias

Pre-interview assessments are a powerful way to level the playing field in hiring. By focusing on technical skills right from the start, these assessments create an objective baseline, reducing the influence of factors like prestigious schools or well-known employers. This approach ensures that candidates are evaluated on their actual abilities rather than superficial credentials.

Research highlights the value of diversity in the workplace: one widely cited study found that companies in the top quartile for racial and ethnic diversity were 35% more likely to outperform their industry norms. However, traditional resume reviews often introduce bias, because they can be swayed by educational background or personal networks. Technical assessments provide a more reliable and measurable alternative, reinforcing a commitment to fair and unbiased hiring practices.

"Technical skill assessment... provides an objective measure of a candidate's qualifications, rather than a resume reviewer's bias-prone impression."

- Team CodeSignal

3.1. Skills-Based Screening Methods

The first step in designing effective assessments is conducting a job analysis. This helps differentiate between the "must-have" skills candidates need on day one and the "nice-to-have" skills that can be developed later. Using this analysis, you can create a skills matrix to shape assessments that are directly tied to the role's responsibilities.

To further minimize bias, anonymize submissions by removing identifying details such as names, locations, or educational affiliations. For example, you can replace GitHub handles with randomly generated IDs, ensuring evaluators focus solely on the quality of the work. Platforms that automatically grade submissions against standardized test cases can also help maintain consistency across evaluations.
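Here is a minimal Python sketch of swapping handles for random IDs. It assumes submissions arrive as a mapping from GitHub handle to submitted text. The function name `anonymize` and the `cand-` prefix are illustrative assumptions. It uses the standard-library `secrets` module to generate unguessable IDs. It also replaces literal mentions of the handle inside each submission. It does not catch real names, email addresses, or commit metadata, which would need separate scrubbing.

```python
import secrets


def anonymize(submissions: dict[str, str]) -> tuple[dict[str, str], dict[str, str]]:
    """Swap each candidate's handle for a random ID before grading.

    Returns (submissions keyed by anonymous ID, anonymous ID -> original handle).
    """
    anonymized: dict[str, str] = {}
    lookup: dict[str, str] = {}
    for handle, text in submissions.items():
        anon_id = "cand-" + secrets.token_hex(4)  # 8 random hex characters
        while anon_id in lookup:  # regenerate on the unlikely collision
            anon_id = "cand-" + secrets.token_hex(4)
        # Also redact literal mentions of the handle inside the submission.
        anonymized[anon_id] = text.replace(handle, anon_id)
        lookup[anon_id] = handle
    return anonymized, lookup
```

Graders see only the first dictionary. Keep the lookup table elsewhere, and join it back to identities only after scores are recorded.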

When creating assessments, aim for a duration of 45 to 90 minutes. This timeframe allows you to measure a candidate's skills without testing their endurance, making the process fairer for those with different schedules. Additionally, ensure the assessment focuses only on job-relevant skills to avoid unintentionally disadvantaging certain groups.

Define clear scoring criteria using a scale, such as 1 to 5, with behavioral anchors. For instance, a "1" in debugging might indicate a failure to identify basic errors, while a "5" reflects the ability to diagnose root causes and propose architectural improvements. Testing the assessment with current employees can also help establish realistic benchmarks and cutoff scores.

3.2. Document Results Before Forming Opinions

It's essential to document scores and observations immediately after the assessment. Delaying this step risks letting subjective impressions creep in, which can reintroduce bias into the process.

"Don't wait until later: your memory fades fast, and scores without notes invite bias back into the process."

- Alex Blog

When recording results, focus on specific, measurable observations, such as "optimized SQL query in under two minutes", rather than vague impressions like "seemed smart." This approach creates a clear and defensible record, showing that decisions were made based on job-relevant criteria.

To further reduce bias, require all panel members to submit their scores and notes independently before engaging in group discussions. This prevents groupthink and ensures decisions are based on individual evaluations rather than being swayed by dominant voices. Having documented data before meeting candidates also helps avoid the "halo effect", where impressive resumes or personal rapport can overshadow objective assessments.
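One way to enforce independent scoring is to keep each panelist's scores sealed until the whole panel has submitted. The sketch below is a hypothetical Python helper; `SealedDebrief` is an illustrative name, not a feature of any specific tool. It accepts one final submission per panelist and refuses to reveal anything while submissions are still pending.

```python
class SealedDebrief:
    """Hypothetical helper: holds each panelist's scores until the whole
    panel has submitted, so no one sees others' ratings beforehand."""

    def __init__(self, panel: set[str]) -> None:
        self.panel = set(panel)
        self._submissions: dict[str, dict[str, int]] = {}

    def submit(self, interviewer: str, scores: dict[str, int]) -> None:
        if interviewer not in self.panel:
            raise ValueError(f"{interviewer} is not on this panel")
        if interviewer in self._submissions:
            # Scores are final once submitted, so they cannot be revised later.
            raise ValueError(f"{interviewer} has already submitted")
        self._submissions[interviewer] = dict(scores)

    def pending(self) -> set[str]:
        """Panelists who have not submitted yet."""
        return self.panel - set(self._submissions)

    def reveal(self) -> dict[str, dict[str, int]]:
        """Return all scores, but only once nobody is pending."""
        if self.pending():
            raise PermissionError(f"still waiting on: {sorted(self.pending())}")
        return {name: dict(s) for name, s in self._submissions.items()}
```

The design choice here is that the discussion literally cannot start until everyone is on record. This removes the chance for a dominant voice to anchor the others.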

With this solid foundation of objective data, you can take the next step: assembling diverse hiring panels to further ensure fairness in the selection process.

4. Build Diverse Hiring Panels

Creating a diverse hiring panel is about more than ticking a box: it challenges assumptions and reduces bias in the hiring process. When panelists come from different backgrounds and bring varied perspectives, they’re more likely to question subjective “gut feeling” decisions and focus on evidence-based evaluations. This is especially important given that 65% of HR specialists, hiring managers, and IT leaders acknowledge bias in technical role recruitment.

A diverse panel also sends a clear message of inclusion. It shows candidates that your company values representation and helps them picture themselves on your team. This matters: half of tech workers aged 18 to 28 have left, or considered leaving, a job because they felt unwelcome or uncomfortable. Building diversity in from the start ensures fairness and sets the tone for unbiased, structured evaluations.

"The cost [of bias] is not only financial; it is strategic, affecting your team's ability to ship excellent products on schedule."

- Elena Bejan, People Culture and Development Director, Index.dev

4.1. How to Assemble a Diverse Panel

To create a well-rounded panel, include individuals with diverse backgrounds, areas of expertise, and career stages. This mix ensures candidates are assessed from multiple perspectives rather than a single, narrow viewpoint. For example, engineers with different specializations, team members at varying career levels, and those who approach problems differently can all contribute valuable insights.

Distribute interview responsibilities evenly to avoid overburdening certain panelists. Additionally, rotating panel members regularly helps bring fresh perspectives while avoiding burnout.

4.2. Train Panelists to Recognize Bias

Even with a diverse panel, unconscious bias can still creep in. That’s why training panelists to recognize and address bias is essential. Start by using tools like observational shadowing and mock interview sessions to align everyone on evaluation standards.

For instance, new interviewers can shadow experienced ones to learn best practices. They can then lead interviews while experienced panelists observe and give feedback. Mock interviews with standardized questions help the team agree on what constitutes a strong versus a weak response. This way, differences in scoring reflect genuine differences in perspective rather than inconsistent standards.

As part of a data-driven recruitment process, track each interviewer's score distribution to spot anyone who consistently rates candidates much higher or lower than their peers. Pair those interviewers with well-calibrated panelists for co-interviews to help them recalibrate. Additionally, require panelists to submit independent feedback before group discussions. This minimizes groupthink and ensures that a range of viewpoints shapes the final decision.
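The score-distribution check can be sketched as a comparison between each interviewer's mean rating and the pooled mean of everyone else's ratings. This minimal Python sketch rests on a few assumptions:

- Scores use the 1–5 scale.
- The 0.75-point threshold is an arbitrary illustrative value.
- Interviewers see broadly comparable candidate pools. If one interviewer only sees senior candidates, a higher mean may be legitimate.

```python
from statistics import mean


def flag_outlier_interviewers(
    scores: dict[str, list[float]], max_gap: float = 0.75
) -> dict[str, float]:
    """Return interviewers whose mean score differs from the mean of all
    other interviewers' scores by more than max_gap points (1-5 scale).

    Positive gaps suggest leniency; negative gaps suggest severity.
    """
    flagged: dict[str, float] = {}
    for name, own in scores.items():
        # Pool every score given by the other interviewers.
        peers = [s for other, vals in scores.items() if other != name for s in vals]
        if not own or not peers:
            continue  # nothing to compare against
        gap = mean(own) - mean(peers)
        if abs(gap) > max_gap:
            flagged[name] = round(gap, 2)
    return flagged
```

For example, if two interviewers average 3.5 and a third gives every candidate a 5, only the third is flagged, with a gap of +1.5. That interviewer is a natural candidate for co-interviews.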

5. Shift from Cultural Fit to Cultural Add

Relying on cultural fit in hiring can unintentionally create teams that think and act alike, which can hinder fresh ideas and progress. Instead, companies are moving towards focusing on cultural add - evaluating how a candidate’s unique qualities can enhance the team. The question shifts from "Does this person fit?" to "What unique value does this person bring?"

The benefits of diversity in teams are clear. Companies with diverse management teams see 19% higher innovation revenue than those with less diversity. The key is to assess candidates based on shared values and their potential to contribute something new.

"Hiring for culture fit may sound thoughtful in theory, but it can lead to teams that all look and think alike."

This approach encourages hiring decisions that prioritize fresh perspectives over maintaining the status quo.

5.1. How to Evaluate Different Perspectives

To uncover a candidate’s unique contributions, ask questions that dig deeper into their experiences and viewpoints. Examples include:

- "What perspective or experience do you bring that others might not?"

- "Can you share a time when understanding someone else’s perspective helped solve a problem?"

- "What’s your impression of our company culture, and how could it improve?"

These types of questions not only highlight a candidate’s ability to think differently but also gauge their willingness to challenge norms and bring in fresh ideas.

Netflix uses a similar strategy, focusing on behaviors like judgment, courage, and curiosity rather than personality traits. Its hiring process evaluates whether candidates actually demonstrate those behaviors. Automattic takes a hands-on approach, offering candidates a paid 3–5 week trial period to work on real projects. This lets the team see a candidate's contributions in action before making a full-time offer.

By emphasizing unique contributions, companies can build teams that are more dynamic and innovative. The next step is to focus on technical skills while avoiding biases tied to personal similarities.

5.2. Prioritize Skills Over Similarity

When hiring, keep the focus on job-related skills and technical expertise rather than on whether a candidate shares personal traits with the existing team. Although 82% of recruiters agree that cultural fit matters, only 32% have a structured process for evaluating it. This lack of structure often leads to subjective decisions that favor familiarity over merit.

To minimize bias, evaluate technical skills and cultural values in separate interview stages. This prevents the "halo effect", where a candidate’s technical strength overshadows a lack of alignment with company values. Define a standardized scoring system, such as a 1-to-5 scale, before interviews begin so candidates can be compared fairly.

Additionally, require interviewers to submit independent scores and supporting evidence after each interview. This avoids anchoring bias and ensures that multiple perspectives shape the final decision. For example, Slack evaluates qualities like empathy, craftsmanship, and playfulness by observing behaviors rather than relying on subjective impressions.

6. Create Clear Evaluation Criteria

Once you've structured interviews and assembled diverse panels, the next step is to establish clear evaluation criteria that turn company values into measurable, job-specific standards. Without them, hiring decisions often rely on gut feelings, which leads to inconsistency. For instance, instead of vaguely labeling "innovation" as a value, define it in actionable terms like "prioritizing speed over perfection while maintaining accountability." For a backend engineer, this could translate to "delivers working prototypes within sprint deadlines and documents technical debt for future iterations."

"Hiring for culture fit does not mean cloning your existing team or prioritizing personality over performance. It's about shared principles, not shared backgrounds."

- Belen Rocha, Communications and Culture Coordinator, BEON.tech

Right now, only 23% of recruiting leaders are satisfied with their hiring processes. The main culprit? Criteria that are too subjective. To address this, identify the competencies that truly matter for each role. For instance, a manager might need "Building a Team", while a senior engineer might need "System Design." Assess these competencies through the lens of your company values. Assign specific interviewers to particular skills so that coverage is thorough and focused, without redundancy.

Shift the emphasis from subjective impressions to observable behaviors by requiring written justifications tied to specific examples.

6.1. Design Role-Specific Scorecards

To put these criteria into action, create tailored scorecards that combine technical and cultural outcomes. Start by defining 2–3 role-specific goals, such as "reduce page load time by 25%", to highlight which competencies matter most.

Behaviorally Anchored Rating Scales (BARS) can help make ratings more objective. A simple 1–5 scale works well when each score corresponds to specific, observable behaviors. For example:

- A 5 in communication might mean "structures explanations clearly, adapts to the audience's technical level, and anticipates stakeholder questions."

- A 3 might mean "communicates ideas clearly and answers follow-up questions concisely."

Here’s how a scorecard might look:

| Competency | 1 (Insufficient) | 3 (Meets Expectations) | 5 (Exceeds Expectations) |
|---|---|---|---|
| System Design | Provides high-level ideas without tradeoffs. | Structures a design, identifies components, notes tradeoffs. | Delivers scalable designs, quantifies bottlenecks, proposes mitigation. |
| Communication | Disorganized answers lacking clarity. | Communicates clearly, summarizes decisions, answers follow-ups. | Adapts explanations, documents decisions, anticipates questions. |
| Problem Solving | Needs heavy prompting, misses root causes. | Breaks problems into steps, proposes reasonable hypotheses. | Quickly identifies root causes, proposes solutions with contingencies. |

Assign percentage weights to each competency based on its importance to the role. For example, a backend engineer's scorecard might allocate:

- 40% to Technical Skills

- 25% to Problem Solving

- 15% to Code Quality

- 10% each to Communication and Domain Knowledge

The total should always equal 100%, reflecting the priorities for success in the role.

Keep scorecards focused by limiting them to 4–6 core competencies. Map specific interview questions to each one, and assign interviewers to explore 1–2 areas deeply. Before interviews begin, establish decision thresholds like requiring a weighted score of at least 70% or setting a "minimum competency rule" (e.g., no score below 2). This ensures candidates can't advance on the strength of one area while lacking in others.
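The weighting and decision thresholds above reduce to a small calculation. In this Python sketch, the weighted score is a percentage of the maximum possible score: the weighted average divided by 5. That normalization is an assumption, since some teams instead rescale so that a score of 1 maps to 0%. The function name `evaluate` is hypothetical. Its defaults (a 70% threshold and no score below 2) mirror the example values in the text.

```python
def evaluate(
    scores: dict[str, int],
    weights: dict[str, float],
    min_weighted_pct: float = 70.0,
    min_score: int = 2,
    max_score: int = 5,
) -> tuple[float, bool]:
    """Return (weighted score as a % of the maximum, whether the candidate advances)."""
    # Weights are fractions (0.40 == 40%) and must total 100%.
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("competency weights must sum to 100%")
    # Every weighted competency needs exactly one score, and vice versa.
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same competencies")
    weighted_avg = sum(weights[c] * scores[c] for c in weights)
    # Assumed normalization: a weighted average of 5 maps to 100%.
    pct = round(weighted_avg / max_score * 100, 1)
    # Minimum competency rule: one strong area cannot hide a weak one.
    advances = pct >= min_weighted_pct and min(scores.values()) >= min_score
    return pct, advances
```

Consider the backend weights listed above (40/25/15/10/10):

- A candidate scoring 4, 4, 3, 3, and 2 reaches 71.0% and advances.
- A candidate with 5s everywhere except a 1 in Domain Knowledge scores 92.0% but is stopped by the minimum competency rule.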

6.2. Replace Gut Feelings with Facts

This step builds on earlier efforts to counteract bias by focusing on observable behaviors. Avoid vague feedback like "I liked them" or "they didn’t feel like a fit", which reveal personal bias rather than job-relevant insights. Instead, require interviewers to provide specific examples tied to scorecard criteria. For instance, instead of saying "good communicator", an interviewer might write, "explained the tradeoffs between microservices and monolithic architecture using a clear analogy to package delivery systems."

Southwest Airlines offers a good example of this approach. To evaluate its core value of "fun", it doesn’t ask candidates, "What do you do for fun?" Instead, it presents a scenario involving a disgruntled customer and evaluates the candidate’s response. This provides concrete evidence of how the candidate applies company values to solve real problems, like improving productivity by turning planes around faster at the gate.

"The focus tends to be, 'Is this person a fit socially for me? How do I feel when interacting with this person?' Rather than, is this person well suited for our organizational mission and strategy?"

- Lauren Rivera, Professor of Management and Organizations, Kellogg School of Management

Ban subjective terms like "leadership presence" or "good communicator" from feedback, as these encourage bias. Instead, require short evidence-based notes for each rating. For example, an interviewer evaluating communication might note, "clearly explained tradeoffs and used an analogy to make technical concepts accessible."

To ensure consistency, hold monthly calibration sessions where interviewers independently rate the same sample candidate and compare results. This helps clarify ambiguous criteria and align expectations. Additionally, embed scorecard templates into your Applicant Tracking System (ATS) to ensure they’re completed before debriefs, reducing the risk of post-hoc rationalizations.
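A quick way to surface ambiguous criteria in a calibration session is to measure how far apart interviewers' scores land on each competency for the same sample candidate. This Python sketch assumes ratings are collected as interviewer -> {competency: score}. The one-point tolerance (`max_spread=1`) and the function name `calibration_gaps` are illustrative assumptions, not a standard.

```python
def calibration_gaps(
    ratings: dict[str, dict[str, int]], max_spread: int = 1
) -> dict[str, int]:
    """ratings maps interviewer -> {competency: score} for ONE shared sample
    candidate. Returns competencies whose score range exceeds max_spread."""
    competencies = {c for scores in ratings.values() for c in scores}
    gaps: dict[str, int] = {}
    for comp in sorted(competencies):
        # Only interviewers who rated this competency are compared.
        values = [s[comp] for s in ratings.values() if comp in s]
        spread = max(values) - min(values)
        if spread > max_spread:
            gaps[comp] = spread
    return gaps
```

Competencies that keep appearing in this output across sessions are good candidates for rewriting their behavioral anchors.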

Poor hiring decisions are costly. Replacing a frontline employee can cost over $8,000, and the average replacement cost across roles is around $12,000. For individual contributors, the cost can climb to 0.5–2 times their annual salary. Fact-based evaluation criteria reduce the risk of these expenses by grounding hiring decisions in objective evidence.

Conclusion

Evaluating cultural fit is about finding candidates who align with your core values while introducing fresh perspectives. The move from "culture fit" to "culture add" shifts the focus from seeking sameness to embracing complementary strengths.

"The shift is from asking 'Does this person fit what we already have?' to 'Does this person share our values and bring something we're missing?'"

- Aisha Malik, People & Leadership Editor at EntrepreneurBytes

Structured interviews are often cited as being twice as predictive of job performance as unstructured ones. Additionally, companies with above-average diversity in management see innovation revenue that's 19% higher than those with below-average diversity. These aren’t just numbers - they represent real advantages in technical hiring.

The strategies outlined in this guide work as a cohesive system. Tools like standardized scorecards, diverse interview panels, and assessments for technical and soft skills ensure fair, measurable criteria that apply across varied backgrounds. For instance, defining values such as "ownership" with clear examples, like taking responsibility for failures as well as successes, replaces subjective judgments with observable actions.

The key is to focus on behaviors that drive success rather than personal preferences. This approach reduces bias and strengthens your team by welcoming diverse thinking styles - essential for innovation in tech. By sticking to structured, objective evaluations, you can achieve better team performance and higher retention rates.

FAQs

How do I separate values from personal “fit” in interviews?

When evaluating candidates, it's essential to base decisions on objective criteria that align with the role and the company’s values. Avoid relying on subjective impressions, as these can introduce bias into the process.

Here’s how to ensure a fair and structured approach:

- Use values-based questions: Develop structured questions that reflect the company’s core principles. This helps assess whether a candidate’s outlook and behavior align with the organization’s culture.

- Incorporate validated assessments: Leverage proven tools to evaluate job-related skills and knowledge. These assessments provide measurable insights and reduce reliance on personal judgment.

- Define specific values and competencies: Clearly outline the qualities and skills required for the role. This ensures evaluations are grounded in relevant qualifications rather than superficial traits or shared interests.

By focusing on measurable, role-specific factors and aligning them with company principles, you can create a more equitable and effective hiring process.

What should be on a culture scorecard for engineers?

When building a culture scorecard for engineers, the focus should be on how well candidates align with your company's values, behaviors, and teamwork dynamics. This involves assessing key traits like collaboration, ownership, accountability, and communication.

Start by identifying the core cultural attributes that define your organization. For example, does your team prioritize open communication? Is taking initiative a valued trait? Once these are clear, translate them into measurable criteria or structured questions. This could include situational questions to gauge a candidate's approach to teamwork or their ability to take responsibility for a project.

By creating a clear and objective framework, you not only ensure a fair hiring process but also minimize potential bias. A well-defined scorecard helps you consistently evaluate candidates while staying true to your company's culture.

How can we measure “cultural add” objectively?

To gauge "cultural add" effectively, focus on asking specific, targeted questions that uncover how candidates align with your company's values while bringing their own perspectives to the table. For instance, you might ask how they approach giving and receiving feedback, resolve conflicts, or assist with onboarding new team members. Behavioral and situational questions can be particularly useful for evaluating traits like inclusivity, collaboration, and their ability to positively influence team dynamics. This approach ensures a fair and unbiased assessment of how they could contribute to and enrich your company culture.
