Hiring compliance in tech recruiting is more complex than ever, with AI tools and global regulations creating significant challenges. Here's what you need to know:

- AI Tools and Liability: Automated Employment Decision Tools (AEDTs) like resume parsers and video interview analyzers are under scrutiny for bias. Employers, not vendors, are responsible for discriminatory outcomes under laws like Title VII, NYC Local Law 144, and the EU AI Act.

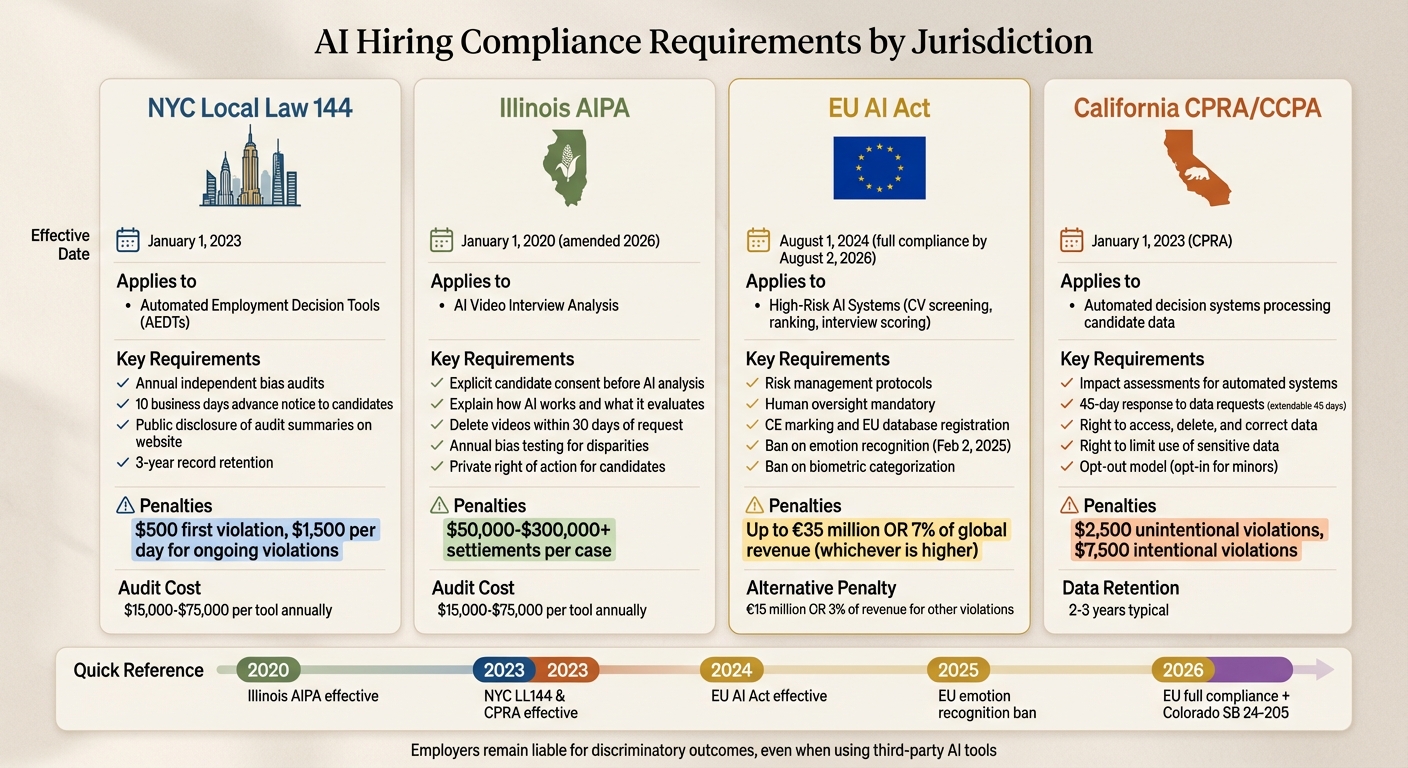

- Key Regulations: Federal anti-discrimination laws enforced by the EEOC, state and local laws (e.g., NYC Local Law 144, Illinois AIPA), and privacy rules like GDPR and CCPA/CPRA demand transparency, bias audits, and strict data handling practices.

- Bias Audits and Penalties: NYC mandates yearly bias audits for AEDTs, with fines up to $1,500 per violation, and each day of non-compliance counts as a separate violation. The EU AI Act imposes penalties up to €35 million or 7% of global revenue for violations.

- Data Privacy: GDPR and CCPA/CPRA require consent for data collection, deletion upon request, and strict retention policies. Illinois AIPA adds specific rules for AI video interviews.

- Preventing Bias: Structured interviews, standardized rubrics, and regular audits can reduce bias and improve compliance. Human oversight is critical for AI-driven decisions.

- Vendor Accountability: Employers must ensure third-party tools meet compliance standards, with contracts specifying audits, deletion timelines, and data security.

Bottom Line: Compliance isn't optional. Proactive measures like bias audits, structured hiring processes, and transparent data handling can help avoid hefty fines and protect your company's reputation.

The Compliance Landscape in Tech Hiring

Technical recruiters face a maze of federal, state, and international regulations designed to prevent discrimination and protect candidate data. Tech hiring brings its own hurdles, such as sourcing talent globally, evaluating open-source work, and using AI-powered screening tools. These tools, under emerging laws, are increasingly scrutinized to ensure compliance.

What’s changed? Regulations have shifted from being voluntary guidelines to enforceable systems with real penalties. Employers are held accountable for discriminatory outcomes, even when using third-party AI tools. As Pertama Partners puts it:

"Even if you purchase AI hiring tools from third-party vendors, you (the employer) remain liable for discriminatory outcomes under Title VII, ADEA, ADA, and state civil rights laws. 'The vendor said it was unbiased' is not a defense."

Below, we’ll explore key federal, data privacy, and state-level laws that guide compliance in tech hiring.

Federal Regulations: EEOC, OFCCP, and FCRA

Federal laws form the baseline for compliance, but tech recruiters must navigate additional complexities. The Equal Employment Opportunity Commission (EEOC) enforces anti-discrimination laws like Title VII of the Civil Rights Act, the Age Discrimination in Employment Act (ADEA), and the Americans with Disabilities Act (ADA). These laws address both intentional bias and practices that unintentionally disadvantage certain groups.

The EEOC uses the four-fifths rule to measure adverse impact. For example, if a coding test selects 50% of male candidates but only 35% of female candidates, the ratio of the two rates is 0.70, below the 0.80 threshold, so the practice could be flagged as discriminatory.
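
The check reduces to one division per group. Here is a minimal Python sketch, assuming selection rates have already been computed per group. The function names and the `threshold` parameter are illustrative and not part of any EEOC tooling:

```python
def impact_ratios(selection_rates: dict[str, float]) -> dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate."""
    highest = max(selection_rates.values())
    if highest <= 0:
        raise ValueError("at least one group must have a positive selection rate")
    return {group: rate / highest for group, rate in selection_rates.items()}


def groups_below_threshold(
    selection_rates: dict[str, float], threshold: float = 0.8
) -> list[str]:
    """List groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(selection_rates)
    return [group for group, ratio in ratios.items() if ratio < threshold]


# Figures from the coding-test example in the text.
coding_test_rates = {"male": 0.50, "female": 0.35}
ratios = impact_ratios(coding_test_rates)
flagged = groups_below_threshold(coding_test_rates)
print(ratios, flagged)
```

A ratio below the threshold is a signal to investigate the practice, not a legal finding on its own.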

The Office of Federal Contract Compliance Programs (OFCCP) sets rules for federal contractors, requiring regular adverse impact testing, validation of hiring tools, and accommodations for candidates. For companies with government contracts, this means maintaining thorough documentation and conducting proactive assessments.

The Fair Credit Reporting Act (FCRA) focuses on background checks. While these are not typically covered by AI-specific laws, recruiters must still provide clear notice and get written consent before conducting checks.

Under the ADA, recruiters are also obligated to offer reasonable accommodations to ensure fair opportunities for all applicants.

Data Privacy Laws: GDPR, CCPA, and CPRA

Data privacy laws have global implications, affecting any company that collects candidate information. The General Data Protection Regulation (GDPR) applies to organizations handling data from individuals in the EU or European Economic Area. GDPR requires a lawful basis, such as explicit opt-in consent, before processing candidate data.

In the U.S., the California Consumer Privacy Act (CCPA) and its update, the California Privacy Rights Act (CPRA), apply to businesses meeting certain revenue or data thresholds. Unlike GDPR, these laws use an opt-out model, except for minors, who require opt-in consent. Both laws allow candidates to access, delete, and correct their data. CPRA adds the "Right to Limit" the use of sensitive data like race or precise geolocation.

Here’s how GDPR and CCPA/CPRA compare:

| Feature | GDPR | CCPA/CPRA |
|---|---|---|
| Primary Model | Opt-in (Consent first) | Opt-out (Right to object) |
| Response Timeline | 1 month (extendable by 2 further months) | 45 days (extendable by another 45 days) |
| Sensitive Data | "Special categories" (Art. 9) | "Sensitive Personal Information" (SPI) |
| Enforcement | Data Protection Authorities (DPAs) | CA Privacy Protection Agency (CPPA) / AG |
| Employee Data | Fully covered | Fully covered (as of Jan 2023) |

Violations under GDPR can result in fines of up to €20 million or 4% of annual global revenue, whichever is higher. Under CCPA, penalties range from $2,500 per unintentional violation to $7,500 per intentional one.
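
The "whichever is higher" wording means the GDPR ceiling scales with company size. This is a small sketch of that arithmetic only, using the two figures quoted above. The function name is illustrative, and real fines are set case by case by regulators:

```python
GDPR_FIXED_CAP_EUR = 20_000_000   # fixed ceiling quoted in the text
GDPR_REVENUE_SHARE = 0.04         # 4% of annual global revenue


def gdpr_fine_ceiling_eur(annual_global_revenue_eur: float) -> float:
    """Upper bound on a GDPR fine: the higher of the fixed cap and the revenue share."""
    return max(GDPR_FIXED_CAP_EUR, GDPR_REVENUE_SHARE * annual_global_revenue_eur)


# A company with EUR 100M in revenue is bounded by the fixed cap;
# one with EUR 1B in revenue is bounded by the 4% share instead.
smaller_company = gdpr_fine_ceiling_eur(100_000_000)
larger_company = gdpr_fine_ceiling_eur(1_000_000_000)
print(smaller_company, larger_company)
```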

State-Level Hiring Laws

State and local laws add another layer of complexity, with regulations varying widely by location. For example, New York City’s Local Law 144 requires an annual bias audit for Automated Employment Decision Tools (AEDTs) and mandates notice at least 10 business days before their use. Fines for non-compliance start at $500 for the first violation and rise to as much as $1,500 for each subsequent violation, with each day counted separately.

In Illinois, the Artificial Intelligence Video Interview Act requires candidate consent before using AI to analyze video interviews, with a rule to delete recordings within 30 days of a request. Maryland’s HB 1202 prohibits facial recognition during interviews unless the applicant signs a waiver. Meanwhile, Colorado’s SB 24-205, effective in 2026, will require risk management policies and annual assessments for "high-risk AI" systems.

| State/City | Primary Focus | Key Requirement | Effective Date |
|---|---|---|---|
| New York City | Automated Decision Tools (AEDT) | Annual independent bias audit; 10-day notice | Jan 1, 2023 |
| Illinois | AI Video Interviews | Candidate consent; 30-day deletion upon request | Jan 1, 2020 |
| Maryland | Facial Recognition | Signed waiver required for facial templates | Oct 1, 2020 |
| Colorado | High-Risk AI Systems | Risk management policy; annual impact assessments | Feb 1, 2026 |

The shift from experimental AI use to regulated, transparent systems is underway. Recruiters must carefully evaluate every tool in their hiring pipeline, including those built into developer hiring platforms and ATS, to ensure compliance with these evolving laws.

What Technical Recruiters Need to Know

Technical recruiting comes with its own set of challenges, particularly when it comes to compliance. From reviewing code repositories to managing international hires and correctly classifying contractors, recruiters must navigate a complex legal landscape. With tech sector unemployment hovering around 1.5% and a projected shortfall of 1.2 million engineers by 2026, many recruiters are expanding their search globally and considering candidates with unconventional backgrounds. Below are critical compliance areas that technical recruiters need to keep in mind.

Open-Source Contributions and Developer Portfolios

When evaluating candidates, compliance with GDPR and CCPA is non-negotiable. These regulations mandate that data collection be limited to what’s necessary for making a hiring decision. For example, if you're hiring a backend engineer, reviewing unrelated frontend projects collects more data than the decision needs and could invite claims of discrimination.

"AI tools used in screening and assessment must be monitored for algorithmic bias. Employers are held accountable if AI systems disproportionately disadvantage protected groups."

Casey Horgan from Esteemed adds:

"Unless the recruiter is steeped in multiple programming languages, disciplines, dev environments, and popular tools, it's difficult to assess an applicant's skills."

To address this, employers should rely on standardized rubrics to evaluate technical skills rather than subjective opinions. Keep detailed records of assessment criteria for at least one year to comply with EEOC guidelines or six months under GDPR. Additionally, candidates have the right to request the deletion of any data collected from their portfolios.

Global Sourcing and Immigration Compliance

Hiring internationally introduces another layer of complexity. Compliance with the Immigration and Nationality Act and regional employment laws is essential. For example, misclassifying an employee as a contractor in Germany can result in fines of up to €500,000. Intellectual property rights also vary by region: while the U.S. "work-for-hire" doctrine generally favors employers, developers in the EU and India may retain certain rights unless specific assignment agreements are in place.

Remote hiring also comes with its own challenges. Assessing technical and soft skills like time management and self-discipline is often more difficult in virtual settings. When sourcing talent globally, recruiters must adapt processes to accommodate different legal systems, language barriers, and time zones. Proper classification and management of freelancers and contractors are equally important to avoid compliance issues.

Freelancers, Contractors, and FMLA

With a tech industry attrition rate of 13.2% - the highest across all business sectors - companies are increasingly relying on freelancers and contractors. However, accurate classification is critical to avoid legal penalties and back-pay claims. For instance, the Family and Medical Leave Act (FMLA) only applies to employees. Misclassifying an employee as a contractor could expose the company to liability for denying FMLA protections. To minimize these risks, companies should use clear contracts, precise job descriptions, and conduct regular compliance audits.

Avoiding Bias in Technical Screening

Technical screening is a critical stage where compliance issues often arise. Recent statistics reveal that 48% of HR managers acknowledge biases in their hiring decisions, and the financial impact of these errors is staggering. While the average cost-per-hire is around $4,700, a poor hiring decision can lead to losses ranging from $50,000 to $240,000. To minimize these risks and ensure compliance with EEOC guidelines, structured processes are essential. Below, we explore proven methods to reduce bias and improve diversity effectively.

Structured Interviews and Standardized Rubrics

Using standardized interviews takes the guesswork out of hiring. This approach ensures every candidate is asked the same questions in the same sequence, with responses evaluated against predefined criteria rather than subjective impressions. Research shows that structured interviews are nearly twice as effective at predicting job success compared to unstructured ones.

"Humans' unconscious bias will play a role in any interview, especially if it's not standardized."

- Guillermo Corea, Managing Director at SHRMLabs

To make this method work, create a numerical scoring rubric (e.g., 1–5) with clear behavioral descriptions for each score. For instance, when assessing "communication skills", define what each level represents. A "3" might mean: "Candidate clearly explains technical decisions but struggles to tailor explanations for non-technical audiences." This approach shifts the focus to job-relevant competencies, avoiding superficial factors like prestigious schools or past employers.

Additionally, use the four-fifths rule as a fairness check: if any group's selection rate is less than 80% of the rate for the group with the highest selection rate, revisit your criteria.

Documentation matters too. Take evidence-based notes during interviews to combat recency bias and confirmation bias. Involve multiple interviewers from diverse functions - HR, technical, and operational perspectives - to dilute individual biases and improve decision-making accuracy. Keeping detailed records of assessment criteria not only strengthens compliance but also provides a clear audit trail.
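
One way to make the rubric and note-taking concrete is to store each score alongside its evidence and average across the panel. The sketch below is an assumption-laden illustration. Only the level-3 description comes from the text, the level-1 and level-5 anchors are hypothetical placeholders, and the class and function names are invented for the example:

```python
from dataclasses import dataclass
from statistics import mean

# Level 3 is quoted from the text; levels 1 and 5 are hypothetical placeholders.
COMMUNICATION_RUBRIC = {
    1: "Hypothetical anchor: cannot explain own technical decisions.",
    3: "Candidate clearly explains technical decisions but struggles to "
       "tailor explanations for non-technical audiences.",
    5: "Hypothetical anchor: explains decisions clearly to any audience.",
}


@dataclass(frozen=True)
class Score:
    interviewer: str
    competency: str
    level: int      # 1-5 on the rubric
    evidence: str   # what the candidate actually said or did


def panel_average(scores: list[Score], competency: str) -> float:
    """Average one competency across interviewers, rejecting unsupported scores."""
    relevant = [s for s in scores if s.competency == competency]
    for s in relevant:
        if not 1 <= s.level <= 5:
            raise ValueError(f"{s.interviewer}: level must be between 1 and 5")
        if not s.evidence.strip():
            raise ValueError(f"{s.interviewer}: every score needs an evidence note")
    return mean(s.level for s in relevant)


scores = [
    Score("hr_interviewer", "communication", 3, "Explained caching choice; lost PM on jargon."),
    Score("tech_interviewer", "communication", 4, "Walked through trade-offs step by step."),
]
average = panel_average(scores, "communication")
print(average)
```

Requiring an evidence note per score is a design choice that keeps the audit trail tied to observed behavior rather than impressions.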

Unstructured vs. Structured Interviews

The table below highlights the key differences between unstructured and structured interviews. While unstructured interviews rely on memory and gut feelings, structured ones use standardized, evidence-based methods that significantly improve reliability.

| Aspect | Unstructured Interviews | Structured Interviews |
|---|---|---|
| Predictive Validity | Low (approx. 0.20) | High (nearly 2× more predictive) |
| Questioning | Varies by candidate; conversational | Standardized; same questions for all |
| Evaluation | Memory-based; "gut feeling" | Scoring rubrics; evidence-based |
| Bias Risk | High (affinity bias, confirmation bias, halo effect) | Lower (standardized safeguards) |
| Technical Focus | Often relies on algorithmic "brain-teasers" | Focuses on job-relevant competencies |

Training interviewers to recognize cognitive shortcuts, such as affinity bias (favoring those similar to themselves) and the halo/horn effect (letting one trait dominate the evaluation), is crucial. Also, reconsider traditional whiteboard interviews, which often test a candidate's stress levels rather than their actual coding skills. Instead, adopt competency-based questions that ask candidates to provide evidence of past work. For example, have them walk through specific projects they've completed, rather than solving abstract puzzles on the spot.

Candidate Data Handling and Consent Management

When it comes to managing candidate data, the stakes are high. Every resume, video interview, or AI screening result leaves a data trail that must be handled with care. Regulations like GDPR, CPRA, and other state laws hold recruiters accountable for every piece of candidate data - from the first email to the final deletion. The challenge? Balancing candidate privacy with compliance requirements.

Data Flows and Consent Forms

To stay compliant, map out every piece of candidate data - names, emails, GitHub profiles, video recordings, AI scores - and document its origin, purpose, and deletion timeline. This practice aligns with the "purpose limitation" principle under GDPR and CPRA, ensuring transparency and creating an audit trail. If you can't justify collecting certain data, like a candidate's speech patterns or eye movements, don't collect it.
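
A data map can start as a plain list of records with one entry per data element. The following is a minimal sketch, assuming a simple in-memory inventory. The field names, example entries, and retention figures are illustrative, not prescribed by GDPR or CPRA:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataElement:
    name: str
    origin: str           # where the data came from
    purpose: str          # empty string means no documented purpose
    retention_days: int   # illustrative deletion timeline


def unjustified(elements: list[DataElement]) -> list[str]:
    """Names of data elements with no documented purpose (candidates for removal)."""
    return [e.name for e in elements if not e.purpose.strip()]


inventory = [
    DataElement("email", "application form", "interview scheduling", 730),
    DataElement("github_profile", "candidate-provided link", "technical skills review", 730),
    DataElement("video_recording", "video interview tool", "structured interview scoring", 30),
    DataElement("eye_movements", "video interview tool", "", 0),
]
to_stop_collecting = unjustified(inventory)
print(to_stop_collecting)
```

Anything this check returns is data the pipeline collects without a stated reason, which is the case the purpose-limitation principle asks you to eliminate.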

Some data uses might fall under "legitimate interests", but others require explicit opt-in consent. For instance, under Illinois' AI Video Interview Act, candidates must give active consent before AI analyzes their video interviews. Similarly, automated SMS reminders for interviews need prior written consent under TCPA regulations.

Consent forms are essential. They should clearly outline the AI tools being used, the qualifications assessed, and the data sources - whether from resumes, social media, or video analysis. In New York City, for example, recruiters must provide candidates with this information at least 10 business days before using any automated employment decision tool. Using a Consent Management Platform (CMP) can simplify the process by automating consent collection, timestamping, and preference enforcement across your systems.
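
The core of what a CMP does can be sketched as an append-only list of timestamped consent events, where the most recent event for a given purpose decides whether processing may proceed. This is an illustration under assumed names (`ConsentEvent`, `has_consent`, the purpose strings), not the API of any particular platform:

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ConsentEvent:
    candidate_id: str
    purpose: str          # e.g. "ai_video_analysis" (hypothetical label)
    granted: bool         # False records a withdrawal
    recorded_at: datetime


def has_consent(events: list[ConsentEvent], candidate_id: str, purpose: str) -> bool:
    """The latest event for this candidate and purpose wins; no event means no consent."""
    relevant = [
        e for e in events
        if e.candidate_id == candidate_id and e.purpose == purpose
    ]
    if not relevant:
        return False
    return max(relevant, key=lambda e: e.recorded_at).granted


events = [
    ConsentEvent("cand-1", "ai_video_analysis", True, datetime(2025, 3, 1, tzinfo=timezone.utc)),
    ConsentEvent("cand-1", "ai_video_analysis", False, datetime(2025, 3, 5, tzinfo=timezone.utc)),
    ConsentEvent("cand-2", "ai_video_analysis", True, datetime(2025, 3, 2, tzinfo=timezone.utc)),
]
print(has_consent(events, "cand-1", "ai_video_analysis"))
print(has_consent(events, "cand-2", "ai_video_analysis"))
```

Defaulting to "no consent" when nothing is recorded matches the opt-in model described above, and keeping withdrawals as events preserves the history for audits.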

This detailed mapping of data flows also lays the groundwork for managing retention and deletion processes effectively.

Data Retention and Deletion Requests

Retention policies can vary, but a common practice is to keep candidate data for 2–3 years to address potential legal claims - provided this is clearly stated in your privacy notice. However, some types of data, like video and biometric information, come with stricter rules. In Illinois, for example, interview videos must be deleted within 30 days of a candidate's request, no exceptions. On the other hand, New York City's Local Law 144 requires you to retain bias audit results and aggregated candidate data for at least three years.

To manage this, configure your ATS to automatically delete data once the retention period ends or when a candidate submits a verified deletion request, which often arrives alongside a Data Subject Access Request (DSAR). This should trigger data removal across all platforms, including your ATS, video hosting services, AI tools, and any third-party processors. Ameya Deshmukh from EverWorker emphasizes the importance of automating these workflows:

"Codify deletion/DSAR workflows in your ATS."

It's also critical to maintain a secure, access-controlled log of each deletion request and its fulfillment. This log serves as proof for regulators while ensuring you meet fairness requirements without retaining personally identifiable information. Even anonymized records of human-in-the-loop decisions can be kept to demonstrate compliance.
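
A deletion log entry can carry the deadline check without holding any candidate details. The sketch below assumes an opaque request ID and uses the 30-day Illinois window from the text as its default. The function names and system labels are hypothetical:

```python
from datetime import date, timedelta

ILLINOIS_VIDEO_DELETION_DAYS = 30  # from the text: delete within 30 days of a request


def deletion_deadline(
    requested_on: date, window_days: int = ILLINOIS_VIDEO_DELETION_DAYS
) -> date:
    """Last calendar day on which the deletion can still be on time."""
    return requested_on + timedelta(days=window_days)


def deletion_log_entry(
    request_id: str, requested_on: date, completed_on: date, systems_purged: set[str]
) -> dict:
    """Build a log record keyed by an opaque ID, with no personal data in it."""
    deadline = deletion_deadline(requested_on)
    return {
        "request_id": request_id,
        "requested_on": requested_on.isoformat(),
        "deadline": deadline.isoformat(),
        "completed_on": completed_on.isoformat(),
        "met_deadline": completed_on <= deadline,
        "systems_purged": sorted(systems_purged),
    }


entry = deletion_log_entry(
    "req-0001",
    requested_on=date(2025, 1, 15),
    completed_on=date(2025, 2, 3),
    systems_purged={"video_host", "ats", "ai_scoring"},
)
print(entry)
```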

Streamlined deletion workflows also help enforce accountability with third-party vendors.

Vendor Agreements and Third-Party Data Sharing

Even if you're using AI tools from a third-party vendor, you're still responsible for any discriminatory outcomes or data breaches. As Pertama Partners puts it:

"'The vendor said it was unbiased' is not a defense."

To protect yourself, ensure vendor contracts include a Data Processing Addendum (DPA) with Article 28 terms for GDPR compliance. Negotiate agreements that specify service levels for data deletion and include "no-train-on-your-data" clauses.

When evaluating vendors, ask key questions like: Can they provide deletion audit evidence with minimal effort? Do they notify sub-processors about deletion requests? How do they handle data purging from backups? Your agreements should give you the right to audit, require bias and privacy documentation, set breach notification timelines, restrict subcontractors, and mandate data deletion when the contract ends.

| Regulation | Key Data Requirement | Jurisdiction |
|---|---|---|
| GDPR | Right to human review of automated decisions; Article 28 vendor terms | EU / UK / Global (if processing EU data) |
| CPRA/CCPA | Impact assessments for automated systems; risk mitigation plans | California, USA |
| NYC Local Law 144 | Annual independent bias audits; 10-day advance notice to candidates | New York City, USA |
| Illinois AI Video Interview Act | Explicit consent for AI video analysis; 30-day deletion upon request | Illinois, USA |

Finally, avoid collecting sensitive data - like race, health, or religious beliefs - unless there's a very specific legal need. Configure your tools to exclude or mask these attributes to prevent algorithmic discrimination. Keeping data collection to the bare minimum isn't just about legal compliance; it's a critical step in protecting both candidates and your organization from potential risks.

AI in Hiring: Emerging Regulations

Figure: AI Hiring Compliance Requirements by Jurisdiction: NYC, Illinois, EU & California

The rules surrounding the use of AI in hiring are shifting quickly. Practices that might work fine in 2024 could lead to hefty fines or legal troubles by 2026. Key frameworks like the EU AI Act, NYC Local Law 144, and Illinois AIPA are setting new standards for how recruiters can use AI tools for tasks like screening and interviewing. These frameworks bring their own timelines, rules, and penalties, and understanding them is crucial for staying compliant.

EU AI Act and High-Risk Classification

The EU AI Act, effective as of August 1, 2024, classifies many recruitment AI tools - like those used for CV shortlisting, ranking candidates, or scoring interviews - as "high-risk systems" under Annex III. This classification comes with strict requirements, such as implementing risk management protocols, maintaining data governance, ensuring human oversight, and documenting that the system works as intended.

Since February 2, 2025, certain AI uses have been banned outright. These include tools that use emotion recognition to analyze facial expressions or voice tones during interviews, as well as systems that categorize biometric data to infer traits like race or religion from photos. Breaking these rules could lead to fines of up to €35 million or 7% of global annual revenue, with other violations carrying penalties of up to €15 million or 3% of revenue.

By August 2, 2026, high-risk AI systems must meet core requirements. These include allowing human intervention to override decisions, training HR teams to understand the limitations of these tools, registering systems in an EU database, and obtaining CE markings before deployment. If you're using vendor tools, it's crucial to disable features like emotion recognition or biometric categorization immediately to avoid penalties.

On top of EU-wide mandates, local laws add more layers of compliance and auditing.

NYC Local Law 144 and Bias Audits

New York City's Local Law 144 applies to any Automated Employment Decision Tool (AEDT) used for roles in NYC, regardless of where your company is based. AEDTs include systems that score, rank, or classify candidates and significantly influence hiring decisions.

To comply, companies must conduct independent bias audits annually, with the first audit required before using the tool. These audits evaluate selection rates across categories like sex, race, and ethnicity, as well as intersectional groups (e.g., Black females or White males). If any group’s selection rate is less than 80% of the rate for the most-selected group, this could signal potential bias.

Candidates must be notified at least 10 business days before an AEDT is used, and a summary of the latest audit must be available on the company website. Violations can result in fines ranging from $500 for the first instance to $1,500 for each subsequent violation. Each day an unaudited tool is used or a candidate is not properly notified counts as a separate violation.
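
Counting business days by hand is error-prone, so the earliest permissible use date is worth computing. The sketch below assumes that a business day is any Monday through Friday and deliberately ignores public holidays, which a production version would need to handle. The function name is illustrative:

```python
from datetime import date, timedelta

NOTICE_BUSINESS_DAYS = 10  # notice period quoted in the text


def add_business_days(start: date, business_days: int) -> date:
    """Step forward day by day, counting only Monday-Friday (holidays ignored)."""
    current = start
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


# Notice sent on Monday, March 3, 2025.
notice_sent = date(2025, 3, 3)
earliest_aedt_use = add_business_days(notice_sent, NOTICE_BUSINESS_DAYS)
print(earliest_aedt_use)  # 2025-03-17
```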

In 2026, Summit Talent Partners faced an investigation after a candidate complained about a missing audit summary. The company paused its AI tool, conducted an independent audit, and found an intersectional category with a 0.72 impact ratio. Fixing the issue, including adding human review steps and updating notices, cost them $42,000. On the other hand, Nexlify Fintech avoided penalties by maintaining detailed records, including raw data and signed auditor attestations.

By 2026, NYC enforcement shifted from educating companies to actively investigating compliance issues, making bias audits more critical than ever.

While NYC focuses on bias audits, Illinois targets video interview analysis and candidate consent.

Illinois AIPA and AI Impact Assessments

Under Illinois' Artificial Intelligence Video Interview Act (AIPA), recruiters must notify candidates if AI will be used to analyze their video interviews. They also have to explain how the AI works, what it evaluates, and obtain explicit consent before recording. If a candidate requests it, all video copies must be deleted within 30 days.

A 2026 amendment added new requirements: AI tools must undergo bias testing for racial, ethnic, or gender-based disparities before use, with annual audits required afterward. Illinois also prohibits AI-driven discrimination in hiring, even if it’s unintentional. Unlike other states, Illinois allows candidates to sue directly without going through a government agency first. As Sanat Hegde explains:

"Illinois is the only U.S. state with this provision [private right of action]... it changes the litigation calculus for every employer using AI in hiring decisions."

Settlements for single-plaintiff discrimination cases involving AI can range from $50,000 to over $300,000. Independent bias audits cost between $15,000 and $75,000 per tool annually, while full remediation projects can exceed $1 million. To stay compliant, automate workflows for Illinois-specific notices and consent forms, and keep secure logs of notice delivery and alternative process requests.

These evolving regulations make it clear: companies need to take a proactive approach to AI compliance in hiring or risk serious financial and reputational consequences.

Compliance Checklist for Technical Recruiters

Ensuring compliance in technical hiring isn't just a box to check - it's a critical safeguard against penalties and reputational damage. For recruiters, even a small misstep in automation can lead to significant compliance risks. Proper documentation and proactive measures can make the difference between a smooth audit and costly violations. Despite its importance, many employers still grapple with meeting even the most basic regulatory standards.

Below is a nine-step checklist designed to weave compliance into every stage of the technical hiring process. These steps will help recruiters stay aligned with local, state, and international regulations.

9-Step Hiring Compliance Checklist

1. Inventory All Automated Tools: Keep a detailed record of every tool used to screen, rank, or filter candidates. This includes internal systems and third-party platforms. Your register should include the tool's vendor, the type of data it uses (like resumes or videos), and the results it generates (e.g., scores or classifications).

2. Map Data Flows and Inputs: Track how candidate data moves through your systems - from collection to storage and sharing with third parties. This mapping is essential for complying with laws like the California Consumer Privacy Act (CCPA), which requires responding to "right to know" or "delete" requests within 45 days.

3. Determine Jurisdictional Scope: Identify whether your hiring process involves candidates in regulated areas such as New York City (Local Law 144), Illinois (AI Video Interview Act), California (CCPA/CPRA), or the European Union (EU AI Act). Different regions have unique rules, so it's crucial to provide location-specific notices and consent forms.

4. Perform Independent Bias Audits: Conduct yearly audits to evaluate selection rates across demographic groups. Use the "four-fifths rule" to identify potential adverse impacts - if a protected group’s selection rate is less than 80% of the highest rate, it may indicate bias. These audits should be carried out by a third party, with costs ranging from $15,000 to $75,000 per tool annually.

5. Establish Candidate Notification Protocols: Inform candidates at least 10 business days before using an automated tool. Include details about the qualifications being assessed, the data sources used, and instructions for alternative assessments.

6. Manage Opt-Out and Alternative Requests: Offer candidates a way to opt out or request alternative assessment methods. For CPRA-related requests, ensure you respond within 15 business days. Maintain a secure log of all notices and requests to demonstrate compliance during audits.

7. Conduct Vendor Due Diligence: Require AI vendors to provide validation studies and independent audit results. Remember, vendor assurances alone don’t absolve your responsibility for ensuring compliance.

8. Implement Human-in-the-Loop Oversight: Train your team to interpret AI outputs and establish clear guidelines for when human intervention is required. This is particularly important for "screen-out" decisions or when candidates request accommodations. The EU AI Act requires that qualified human reviewers have the authority to override AI decisions, and a documented review step also helps you handle alternative-process requests under NYC Local Law 144.

9. Maintain Data Retention and Public Disclosure: Publish summaries of bias audits on your company’s website and keep all related records and vendor documentation for at least three years. Under NYC Local Law 144, annual public disclosure of audit results is mandatory, with non-compliance fines reaching up to $1,500 per violation, counted daily.
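
The jurisdiction step in the checklist amounts to a lookup from candidate location to the rules named in this article. The sketch below is only a starting point under that assumption. The region keys and function name are hypothetical, the mapping is limited to the regimes discussed here, and it is not a complete legal scoping tool:

```python
# Regimes named in this article, keyed by hypothetical region labels.
RULES_BY_REGION = {
    "new_york_city": ["NYC Local Law 144"],
    "illinois": ["Illinois AI Video Interview Act"],
    "california": ["CCPA/CPRA"],
    "european_union": ["EU AI Act", "GDPR"],
}


def rules_in_scope(candidate_regions: set[str]) -> list[str]:
    """Collect the rules triggered by the regions where candidates are located."""
    in_scope: list[str] = []
    for region in sorted(candidate_regions):
        for rule in RULES_BY_REGION.get(region, []):
            if rule not in in_scope:
                in_scope.append(rule)
    return in_scope


# A role open to candidates in Illinois and the EU.
print(rules_in_scope({"illinois", "european_union"}))
```

Regions missing from the table return nothing, so an empty result should be read as "not covered by this sketch" rather than "unregulated".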

Summary of Compliance Requirements

The table below condenses key regulatory steps into a quick reference guide:

| Compliance Step | Requirement Detail | Frequency |
|---|---|---|
| Bias Audit | Independent calculation of selection rates and impact ratios | Every 12 months |
| Candidate Notice | Written disclosure of AI use and data sources | 10 business days prior |
| Public Disclosure | Publish audit summaries on the careers page | Annual |
| Data Retention | Keep records of audits, vendor documentation, and candidate data | 3 years |
| Monitoring | Track selection rates and human override frequencies | Quarterly (Recommended) |
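
For the monitoring row, human override frequency can be tracked as the share of decisions where the final human outcome differs from the AI recommendation. This is a minimal sketch under assumed names (`Decision`, `override_rate`) and assumed outcome labels:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    ai_recommendation: str   # hypothetical labels: "advance" or "reject"
    final_decision: str      # outcome after human review


def override_rate(decisions: list[Decision]) -> float:
    """Fraction of decisions where the reviewer disagreed with the AI output."""
    if not decisions:
        return 0.0
    overridden = sum(1 for d in decisions if d.ai_recommendation != d.final_decision)
    return overridden / len(decisions)


quarter = [
    Decision("advance", "advance"),
    Decision("reject", "advance"),   # reviewer overrode a screen-out
    Decision("reject", "reject"),
    Decision("advance", "advance"),
]
print(override_rate(quarter))
```

A rate near zero may mean the tool is accurate or that reviewers are rubber-stamping it, so the number is a prompt for review rather than a verdict.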

How daily.dev Recruiter Handles Candidate Consent

When it comes to managing candidate data, daily.dev Recruiter takes a highly proactive approach to ensure compliance with privacy laws. The platform employs a double opt-in framework that guarantees explicit and active consent from both recruiters and developers before any introductions occur. This isn't just a passive checkbox - it's a deliberate process requiring clear actions from both parties. Consent is obtained during data collection, and every permission granted is carefully documented in a centralized system.

Developers are provided with upfront details about how their data will be collected, used, and retained. The system also keeps consent statuses updated automatically throughout the hiring process. This level of transparency aligns with technical hiring best practices and strict regulations like the GDPR and California's CPRA, which demand clear privacy notices before any personal data is processed. If a candidate decides to withdraw their consent or requests data deletion, the platform ensures that this change is reflected across all connected systems.

As Colin Gordon, a Senior Recruiter at Recruiters LineUp, puts it:

"Employers must obtain explicit consent from candidates before collecting personal data, ensure data security, and allow candidates to access, rectify, or delete their data."

Daily.dev Recruiter also maintains audit-ready records of all consent interactions, communication logs, and data-handling activities. This meticulous documentation is essential for navigating regulatory audits or fulfilling candidate requests. With GDPR penalties reaching up to €20 million or 4% of global annual turnover for violations, managing consent properly is not just a legal requirement - it’s a financial safeguard.

Additionally, the platform's emphasis on warm introductions helps reduce compliance risks. Developers are only shown opportunities they’ve expressed interest in, and recruiters reach out only to candidates who have opted in. This avoids the pitfalls of outdated contact lists or scraped profiles, ensuring a better candidate experience for everyone involved.

Conclusion

Compliance goes beyond simply meeting regulations - it's a cornerstone for earning trust with technical talent and shielding your organization from expensive penalties. Ameya Deshmukh from Integrail Corp captures this sentiment perfectly:

"Compliance isn't a brake - it's traction. When your AI hiring is fair, transparent, explainable, and governed, you can move faster with confidence."

The financial risks are undeniable, with hefty fines under GDPR and NYC Local Law 144.

The regulatory environment is evolving quickly. The EU AI Act now categorizes recruitment AI as high-risk, mandating strict data governance and human involvement. Despite this, as of early 2024, fewer than 15% of NYC employers using automated hiring tools had released the required bias audit results. These changes highlight the urgency of embedding strong compliance measures into every phase of the hiring process.

As Pertama Partners warns, "'The vendor said it was unbiased' is not a defense" - your organization is still accountable for discriminatory outcomes, even when relying on third-party AI tools. By implementing structured interviews, standardized evaluation rubrics, and regular bias audits, as outlined earlier, you can turn compliance into a strategic advantage. Transparent consent management and vetting technical skills not only meet legal requirements but also strengthen your recruitment process.

FAQs

Do I have to audit every AI hiring tool we use?

Auditing AI hiring tools isn't just a good idea - it’s a necessity. With regulations evolving rapidly, ensuring these tools meet standards for bias prevention, transparency, and privacy is critical. For instance, laws like NYC Local Law 144 require bias audits, making regular reviews essential. Staying compliant not only helps you meet legal obligations but also minimizes risks that could arise from non-compliance.

What should our candidate AI notice and consent include?

Your AI notice and consent for candidates should clearly outline how AI is incorporated into the hiring process. It should give candidates a clear choice to opt-in or opt-out, ideally using a double opt-in framework to confirm their decision. Additionally, the notice must align with regulations like NYC Local Law 144 and EEOC guidance to ensure compliance.

Key areas to address include:

- Bias Testing: Explain the steps taken to identify and minimize bias in AI tools to ensure fair evaluations.

- Human Oversight: Highlight the role of human reviewers in overseeing AI-driven decisions, ensuring a balanced and ethical process.

- Data Privacy Protections: Detail how candidate data is collected, stored, and safeguarded to maintain confidentiality and meet privacy standards.

This approach ensures transparency while prioritizing fairness and regulatory compliance.

How do I handle data deletion requests across all hiring systems?

To manage data deletion requests effectively, start by setting up a straightforward process for candidates to submit their requests. This could be a dedicated form or a clear contact method. Make sure this process aligns with legal requirements, such as the California Consumer Privacy Act (CCPA).

Here’s how you can approach it:

- Map Your Systems: Identify every platform or system where candidate data is stored. This ensures nothing is overlooked during the deletion process.

- Verify the Requestor: Confirm the identity of the person making the request to prevent unauthorized actions.

- Delete or Anonymize Data: Depending on the legal and operational requirements, either remove the data entirely or anonymize it so it can no longer be tied to the individual.

- Keep Records: Document each request and the actions taken. This not only ensures transparency but also helps in case of audits or disputes.

- Stay Updated: Regularly review and update your procedures to comply with changing regulations, especially as AI becomes more integrated into hiring practices.

By maintaining a well-documented and compliant process, you can respect candidate rights while safeguarding your organization.
