Technical interviews often fail to assess true skills due to outdated methods like whiteboard exercises and algorithmic puzzles, which prioritize stress management over practical abilities. Research shows that structured interviews are nearly twice as effective as unstructured ones in predicting job performance. They reduce bias, improve fairness, and streamline hiring processes.

Key takeaways:

- Structured interviews: Use consistent questions and rubrics for better accuracy.

- Bias reduction: Minimize unconscious bias through standardized evaluation and diverse panels.

- Rubrics: Focus on observable behaviors tied to job performance.

- Formats: Combine live coding, take-home assignments, and system design tasks based on role requirements.

- Inclusive design: Adjust processes to accommodate neurodivergent and non-traditional candidates.

- Metrics tracking: Measure predictive validity, interrater reliability, and demographic trends to refine processes.

These strategies ensure hiring decisions are based on job-relevant evidence, reducing costs and improving team performance.

Why Technical Interviews Often Fail

Technical interviews often struggle with two major issues: a low signal-to-noise ratio - where irrelevant factors cloud the assessment of actual skills - and unconscious bias, which skews evaluations and limits diversity. These problems create a system where a candidate's true abilities can be overshadowed by unrelated influences. Here's a closer look at how this happens.

Low Signal-to-Noise Ratio

Unstructured interviews are notorious for adding "noise" by focusing on irrelevant details. Without a consistent set of questions or evaluation criteria, interviewers often ask different questions to different candidates, making comparisons unreliable. Time is wasted on small talk or judging superficial traits like confidence or tone of voice, none of which correlate with on-the-job performance. As Patrick Koss, Senior Tech Lead, aptly points out:

"Whiteboard interviews might be measuring anxiety more than actual coding ability."

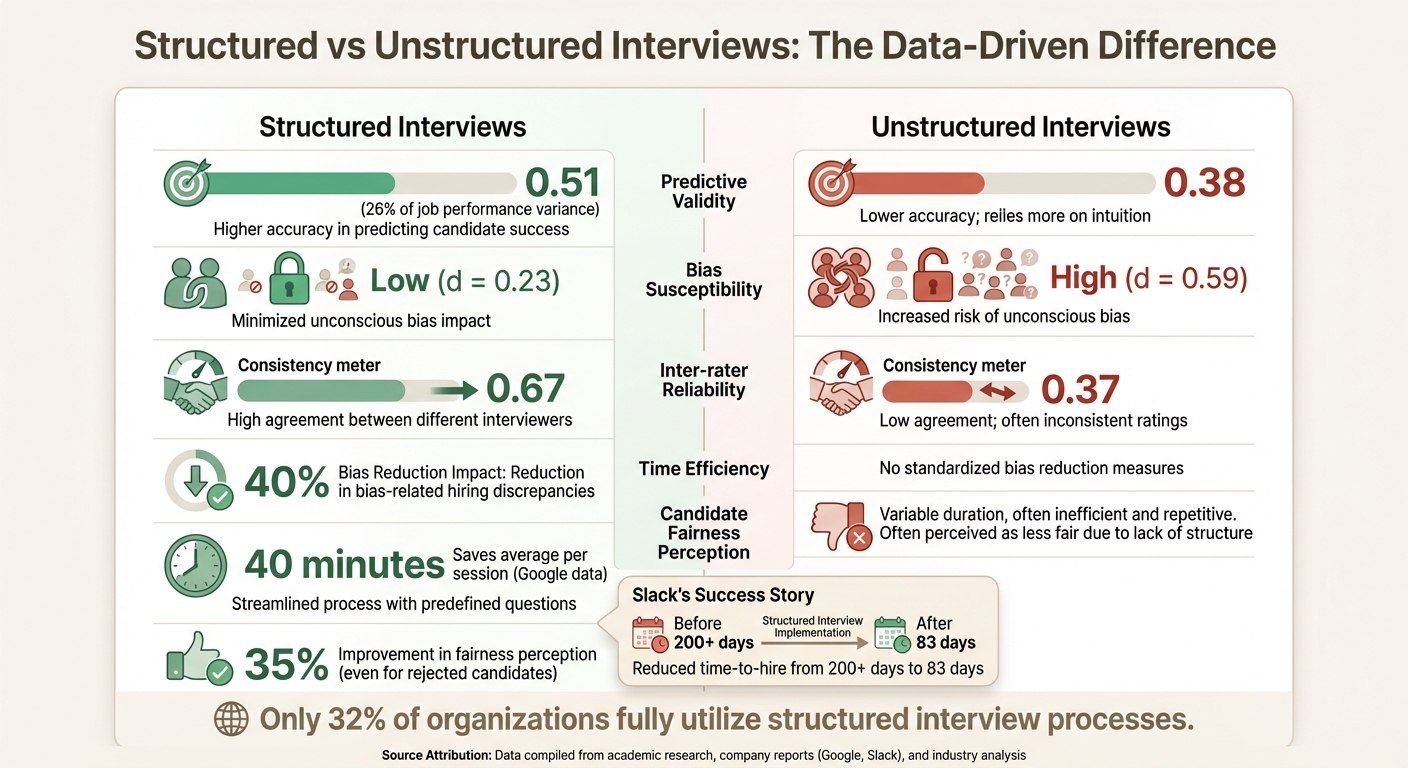

The data backs this up. Unstructured interviews have a predictive validity of only .38, which means they explain roughly 14% of the variance in job performance. In contrast, structured interviews score higher at .51. Even high-performing candidates aren’t immune to this inconsistency - over one-third of them have failed at least one interview, highlighting how unpredictable these evaluations can be. Without a standardized process, interviewers often let personal biases, like a preference for certain programming languages or design approaches, overshadow qualities that are critical for the job.

The Impact of Unconscious Bias

Unconscious bias adds another layer of distortion to technical interviews. For example, anchoring causes interviewers to form fixed opinions within the first four minutes, which can influence how they interpret the rest of the interview. Similarly, the halo effect can inflate scores when a candidate mentions working at a prestigious company like Google, while the horn effect can unfairly penalize someone for a single mistake. Another common issue is affinity bias, where interviewers favor candidates with similar backgrounds, communication styles, or hobbies. This is often mislabeled as "culture fit", leading to teams that lack diverse perspectives. To avoid this, companies must learn how to assess cultural fit properly.

The numbers tell the story: unstructured interviews are far more prone to bias (d = .59) compared to their structured counterparts (d = .23). And the financial impact is staggering. A bad hire can cost a company approximately $240,000, factoring in recruitment, salary, and lost productivity. There's also the human cost - nearly half of UK organizations reported that biased hiring decisions negatively affected team morale, according to a January 2026 report.

Recognizing these flaws highlights the need for structured interview methods that focus on actual job performance while minimizing bias.

Structured vs Unstructured Interviews: The Evidence for Structure

Figure: Structured vs Unstructured Technical Interviews: Performance Metrics Comparison

To minimize noise and bias in hiring, a structured interview process is essential. These interviews follow a set of predetermined questions in a fixed order, with responses evaluated using standardized rubrics. In contrast, unstructured interviews often rely on varying questions and subjective impressions, focusing more on rapport than actual competence.

The numbers highlight the difference. Structured interviews boast a predictive validity of 0.51, which accounts for about 26% of job performance variance - nearly twice the roughly 14% explained by unstructured interviews, which sit at 0.38. Companies adopting structured formats report a 40% reduction in bias-related hiring discrepancies, with inter-rater reliability jumping from 0.37 to 0.67. Even candidates who aren’t selected benefit, as their perception of fairness improves by as much as 35%.
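The "twice as predictive" comparison is easiest to check in terms of variance explained, which is the square of the validity coefficient. The snippet below is only a worked arithmetic sketch of the figures quoted above; the function name `variance_explained` is a made-up helper, not part of any cited study.

```python
def variance_explained(validity: float) -> float:
    """Square a validity coefficient (a correlation) to get the share of variance explained."""
    return validity ** 2


structured = variance_explained(0.51)    # structured interviews
unstructured = variance_explained(0.38)  # unstructured interviews

print(f"structured:   {structured:.2f}")    # 0.26
print(f"unstructured: {unstructured:.2f}")  # 0.14
print(f"ratio of variance explained: {structured / unstructured:.2f}")  # 1.80
print(f"ratio of raw coefficients:   {0.51 / 0.38:.2f}")                # 1.34
```

Read this way, the "nearly twice" claim refers to variance explained (about 1.8x), while the raw coefficients differ by about 34%.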

"Research consistently shows structured interviews are twice as predictive of job performance as unstructured ones." - Adithyan RK, Author

The real-world impact is clear. For example, Slack revamped its hiring process in 2020 by introducing structured work-sample tests, including a standardized code review. This change slashed their time-to-hire for software engineers from over 200 days to just 83 days. Similarly, Google found that structured interview guides save an average of 40 minutes per session. Yet, despite these advantages, only 32% of organizations fully utilize structured interview processes.

Key Differences Between Structured and Unstructured Formats

Here’s a comparison of the two approaches:

| Dimension | Structured Interview | Unstructured Interview |
|---|---|---|
| Question Format | Predetermined, consistent for all candidates | Improvised, varies by candidate |
| Evaluation Basis | Standardized rubric with behavioral anchors | Subjective "gut feeling" or impressions |
| Predictive Validity | 0.51 (High) | 0.38 (Low) |
| Bias Susceptibility | Low (d = 0.23) | High (d = 0.59) |
| Legal Defensibility | Very Strong | Weak |
| Inter-rater Reliability | 0.67 | 0.37 |

Structured interviews focus every moment on gathering job-relevant data, cutting out distractions. The foundation is a job analysis to pinpoint 5 to 8 key competencies that differentiate top performers from average ones. Questions and rubrics are then crafted around these skills. When paired with a cognitive ability test, structured interviews achieve a composite validity of 0.63 - one of the most reliable predictors of job success. This underscores the importance of a data-driven, comprehensive approach to recruitment, including candidate experience quick wins that keep the process efficient and respectful.

In the next section, we’ll explore how to design rubrics that effectively predict on-the-job performance.

Designing Rubrics That Predict Job Performance

The strength of a rubric lies in its ability to provide clear, objective guidance. For interviewers, this means scoring candidates based on observable behaviors rather than vague impressions. Shifting from subjective evaluations to evidence-based assessments can make the difference between a hiring process that succeeds and one that falls short.

To start, identify 5–7 core competencies that are essential to the role. These might include skills like problem decomposition, code quality, system design trade-offs, testing strategy, and communication. Each competency should have a numerical scale (commonly 1–5) tied to specific, measurable behaviors. For instance, instead of using ambiguous phrases like "seemed like a team player", opt for detailed criteria such as "provided clear examples of resolving technical disagreements".

"A rubric is useful only if each interviewer knows what each score means. Every interviewer approaching a rubric should be able to understand what observable behaviors or answers... merit a particular score." - Michael Newman

Modern rubrics should also reflect the evolving nature of engineering. This includes evaluating AI-related competencies, such as how candidates prompt AI tools, identify inaccuracies (like hallucinations), and adapt AI-generated outputs to fit system constraints. Today’s engineers are not just writing code - they’re also assessing and integrating code from various sources.

It’s also important to distinguish between completing a task and the quality of the process. A candidate who produces functional code but fails to explain trade-offs or address edge cases could signal potential challenges down the line. To maintain objectivity, interviewers should cite specific examples - such as quotes, tests, or design decisions - to justify their scores. This level of precision not only predicts job performance but also reduces bias in technical evaluations.

Key Components of Effective Rubrics

Behavioral anchors should align with the seniority level of the role. For example, the expectations for a mid-level engineer (L3) and a senior engineer (L5) will differ. In a backend role, a "3 out of 5" on system design might mean "structures a design, identifies major components, and notes trade-offs with latency estimates." On the other hand, a "5 out of 5" might require "delivers a scalable design, quantifies bottlenecks, and proposes mitigation strategies".

Scoring should happen immediately after each question, not at the end of the interview. Delaying scores can lead to anchoring bias, where early impressions skew final judgments. If the final recommendation is calculated from rubric scores, any overrides should include a written explanation to ensure transparency and accountability.

To maintain consistency, create a "gold" benchmark library containing 3–5 anonymized examples that represent clear pass, borderline, and no-pass outcomes. Use these examples in regular calibration sessions - 45 minutes each month - where interviewers independently score the same artifact and then discuss any discrepancies. This practice helps align the team and ensures consistency in scoring. Studies show that data-driven hiring methods outperform instinct-driven approaches by at least 25%.

Finally, weight competencies according to their importance for the role. For example, debugging might account for 40% of the total score for a site reliability engineer, while communication could be weighted at 10%. Set minimum thresholds for each competency - such as requiring no score below 2 out of 5 - to avoid hiring candidates with critical skill gaps in key areas.
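As a rough illustration of weighting plus minimum thresholds, the sketch below computes a weighted rubric score and lists any competency that falls under the floor. Only the 40% debugging and 10% communication weights and the "no score below 2" floor come from the text; the other competencies, their weights, and the names `Competency`, `SRE_RUBRIC`, and `evaluate` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Competency:
    name: str
    weight: float  # share of the total score; all weights should sum to 1.0


# Hypothetical SRE rubric: only the 0.40 and 0.10 weights appear in the article.
SRE_RUBRIC = [
    Competency("debugging", 0.40),
    Competency("system_design", 0.30),
    Competency("testing_strategy", 0.20),
    Competency("communication", 0.10),
]

MIN_SCORE = 2  # floor on the 1-5 scale, applied to every competency


def evaluate(rubric, scores, min_score=MIN_SCORE):
    """Return (weighted_score, gaps) for one candidate.

    `scores` maps competency name to a 1-5 score. `gaps` lists every
    competency scored below `min_score`, regardless of the weighted total.
    """
    if abs(sum(c.weight for c in rubric) - 1.0) > 1e-9:
        raise ValueError("rubric weights must sum to 1.0")
    missing = [c.name for c in rubric if c.name not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    weighted = sum(c.weight * scores[c.name] for c in rubric)
    gaps = [c.name for c in rubric if scores[c.name] < min_score]
    return weighted, gaps


candidate = {"debugging": 5, "system_design": 4, "testing_strategy": 4, "communication": 1}
total, gaps = evaluate(SRE_RUBRIC, candidate)
print(f"weighted score: {total:.2f}, below threshold: {gaps}")
```

In this made-up case the weighted total is high, yet the communication score still trips the floor, which is the situation the threshold is meant to surface.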

Live Coding vs Take-Home Assignments vs System Design: When to Use Each

Choosing the right interview format can significantly improve hiring accuracy by focusing on the skills that matter most for the role. By aligning the assessment method with the job's requirements, you can reduce bias and better evaluate candidates' abilities using evidence-based approaches.

Live coding is great for assessing how candidates break down problems and collaborate in real time. It also shows how they handle feedback under pressure and communicate their thought process. However, it’s worth noting that around 62% of candidates report high levels of anxiety during live coding sessions, which can unintentionally favor those who remain calm under stress.

Take-home assignments provide insights into code quality, architecture, and self-discipline. These are particularly useful for roles that demand maintainable and well-structured code. Take-home tests simulate real-world work without the pressures of a live audience. In 2024, candidates rated take-home challenges 3.75 out of 5. That said, these assignments often come with higher rejection rates (45–55%, compared to 30–40% for live coding), and completion rates hover around 60–70% unless candidates are compensated, typically with a flat fee of $200–$500.

System design interviews are ideal for senior and staff engineering roles. They focus on high-level architectural thinking, assessing how candidates approach scalability, manage trade-offs, and design complex systems. While this format carries moderate stress and some risk of bias, it’s particularly effective for evaluating leadership and technical depth.

A hybrid model - combining take-home assignments with live code walkthroughs - has shown better predictive accuracy for job performance. This approach achieves a 0.71 correlation with job success, compared to 0.62 for take-home challenges alone and 0.57 for live coding alone.

"Take-home coding tests predict long-term success while live coding predicts short-term onboarding speed. Our process integrates both to capture complete performance data." - Matthew Johnson, CTO

Evaluation Formats Comparison

| Format | Best For | Time Investment | Primary Signal | Stress Level | Cheating Risk | Rejection Rate |
|---|---|---|---|---|---|---|
| Live Coding | Screening and collaboration-heavy roles | 60–90 minutes | Real-time communication & problem-solving | High | Low | 30–40% |
| Take-Home Assignment | Senior roles and specialized positions | 2–10 hours | Code quality, architecture, self-discipline | Low | High (AI/Plagiarism) | 45–55% |
| System Design | Senior/Staff Engineers and Architects | 60 minutes | High-level architecture, scalability, trade-offs | Medium | Low | N/A |

Tailoring Formats to Seniority:

- Junior engineers (L3): Opt for live coding or concise take-home challenges centered on algorithmic problem-solving.

- Mid-level engineers (L4): Pair take-home tests with debrief sessions to discuss code organization and edge cases.

- Senior engineers (L5+): Use system design exercises or design document tasks to evaluate architectural trade-offs and leadership skills.

To make take-home assignments manageable, keep their scope under four hours. For live coding, standardize the process with browser-based IDEs like CoderPad or CodeSignal to ensure a consistent experience for all candidates. Above all, rely on structured rubrics with clear behavioral criteria rather than subjective impressions to evaluate candidates effectively.

Common Bias Patterns in Technical Interviews

Building on the structured techniques discussed earlier, it's essential to understand specific bias patterns that can shape evaluations in technical interviews.

Affinity Bias, Halo Effect, Horn Effect, and Anchoring Bias

Several biases commonly interfere with objective assessments:

- Affinity bias: This occurs when interviewers favor candidates who share similar backgrounds, interests, or experiences. While it may feel natural, it often results in teams that lack diversity and fresh perspectives.

- Halo effect: Here, one positive attribute - like a candidate's association with a prestigious company - can overshadow weaker technical performance during the evaluation.

- Horn effect: The opposite of the halo effect, this bias sees early mistakes unfairly influencing the remainder of the assessment.

- Anchoring bias: This happens when interviewers form strong initial opinions based on resumes or first impressions and then unconsciously seek evidence to confirm those opinions.

Even seemingly harmless cultural references, like using baseball statistics in problem scenarios, can unintentionally disadvantage candidates from non-traditional or international backgrounds.

The stats paint a clear picture: 65% of recruiters acknowledge that bias remains a persistent challenge in technical hiring. Additionally, a single bad hire can cost a company as much as $240,000. Women in tech also face unique challenges, being 1.6 times more likely to experience layoffs compared to their male counterparts.

Strategies to Reduce Bias

To combat these biases, structured approaches can make a significant difference:

Standardized interviews: Asking every candidate the same set of technical interview questions and evaluating them with consistent criteria drastically improves objectivity. Research indicates that structured interviews are much better at predicting job performance than unstructured ones. However, only 57% of organizations use them consistently.

Scoring rubrics: Define clear performance benchmarks. For example, in a system design interview, a top score might require addressing database sharding and caching layers, while a moderate score might reflect basic familiarity with scaling concepts. Rubrics ensure all candidates are judged by the same standards.

Independent feedback: Require interviewers to submit their notes and scores before discussing with others. This method avoids groupthink and prevents dominant voices from swaying decisions. Collaborative hiring platforms can further streamline this process, reducing interviewer bias by 35% and cutting time-to-hire by up to 25%.

"Calibration does not imply that every interviewer must assign the same score to an applicant. Instead, it guarantees that when discrepancies arise, they represent meaningful variations in viewpoint, rather than misalignment."

– Elena Bejan, People Culture and Development Director

Diverse hiring panels: Include interviewers from a mix of backgrounds and departments. If internal diversity is limited, consider bringing in external consultants or cross-functional team members.

Job-relevant questions: Eliminate questions that rely on cultural or regional knowledge, like sports metaphors, and focus on challenges directly tied to the role. This ensures a fairer evaluation for all candidates, regardless of their background.

These strategies lay the groundwork for more equitable hiring practices. Up next, we'll delve into how calibration sessions can further align interviewer standards and reinforce these approaches.

Calibration Sessions: How to Align Interviewers on Standards

Calibration sessions are essential for ensuring that interviewers evaluate candidates consistently and fairly. By aligning everyone on skills, scorecards, and evaluation criteria, these sessions help eliminate misunderstandings about the role and reduce subjective influences like "gut feel" or "culture fit" that can skew results. The ultimate goal? To make sure differences in scores reflect genuine perspectives, not confusion about expectations.

Why is this important? A bad hire can cost a company up to $240,000, and managers often spend 26% of their time coaching employees who fall short of performance standards. Structured interviews with calibrated processes significantly outperform unstructured ones, boosting their ability to predict job performance by 34% (predictive validity of 0.51 vs. 0.38).

"Calibration does not imply that every interviewer must assign the same score to an applicant. Instead, it guarantees that when discrepancies arise, they represent meaningful variations in viewpoint, rather than misalignment with the role's requirements."

– Elena Bejan, People Culture and Development Director

These sessions lay the groundwork for creating interview panels that are fair, consistent, and effective.

Best Practices for Calibration

To get the most out of calibration, follow these actionable steps:

Shadowing and Reverse Shadowing: Begin by having new interviewers observe five sessions conducted by experienced leads to see how rubrics are applied in real-time. Then, flip the script - senior interviewers should observe the new interviewer's first five interviews. This allows for early coaching on pacing, questioning, and scoring accuracy to prevent misalignment.

Monthly Calibration Meetings: Dedicate 45 minutes each month to reviewing anonymized recordings or transcripts as a team. Everyone silently scores the same benchmark submission before comparing results. This exercise highlights variances in interpretation and reinforces consistent standards.

Benchmark Library: Maintain a collection of 3–5 anonymized submissions that exemplify clear pass, clear no, and borderline cases. These serve as reference points during training and calibration sessions.

Independent Feedback Systems: To avoid anchoring bias, implement a system where interviewers submit scores privately before any group discussion. Chuck Edward, Head of Global Talent Acquisition at Microsoft, explained the risks of shared feedback:

"Everybody on the interview loop could see what others were saying... It's real clear how that could lead to biases and being influenced by someone else's views."

By requiring independent scoring, organizations have reduced interviewer bias by up to 35%.

Monitoring and Recalibration: Regularly review score distributions to identify outliers. Interviewers with inconsistent scoring should attend recalibration sessions with top evaluators. These sessions emphasize evidence-based scoring, requiring specific examples like a candidate explaining trade-offs between eventual consistency and strong consistency, instead of vague impressions.
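The independent feedback practice described above (private submission before any group discussion) could be enforced in tooling along the following lines. This is a minimal sketch, not a description of any real hiring platform: the `FeedbackRound` class, its methods, and the choice to lock a score once submitted are all assumptions made for illustration.

```python
class FeedbackRound:
    """Collects scores privately and reveals them only after every interviewer has submitted."""

    def __init__(self, interviewers):
        self._expected = set(interviewers)
        self._scores = {}  # interviewer -> (score, evidence)

    def submit(self, interviewer, score, evidence):
        """Record one interviewer's score with the evidence that justifies it."""
        if interviewer not in self._expected:
            raise ValueError(f"{interviewer} is not on this panel")
        if interviewer in self._scores:
            # Assumption: scores are locked once submitted, so they cannot
            # drift toward colleagues' opinions later.
            raise ValueError(f"{interviewer} has already submitted")
        if not evidence.strip():
            raise ValueError("a score needs written evidence, not just a number")
        self._scores[interviewer] = (score, evidence)

    def pending(self):
        """Names of interviewers who have not submitted yet."""
        return sorted(self._expected - set(self._scores))

    def reveal(self):
        """Return all scores, but only once nobody is pending."""
        if self.pending():
            raise PermissionError(f"still waiting on: {self.pending()}")
        return dict(self._scores)


panel = FeedbackRound(["ana", "ben"])
panel.submit("ana", 4, "Explained eventual vs strong consistency trade-offs unprompted.")
print(panel.pending())  # ['ben']
panel.submit("ben", 3, "Working solution, but did not discuss edge cases.")
print(panel.reveal())
```

Requiring a non-empty `evidence` string mirrors the emphasis on evidence-based scoring; a real system would likely need richer validation.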

Inclusive Interview Design: Accommodating Neurodivergent and Non-Traditional Candidates

Inclusive interview design builds on structured techniques and bias reduction strategies to refine how candidates are evaluated. Traditional technical interviews often unintentionally exclude talented individuals who don’t fit a conventional mold. Neurodivergent individuals make up about 15% to 20% of the global population, yet the unemployment rate for college-educated autistic adults is an alarming 85%. At the same time, studies show that autistic employees in technical roles at JPMorgan Chase have demonstrated productivity levels 90% to 140% higher than their neurotypical counterparts. The issue isn’t a lack of skill - it's interview processes that tend to measure anxiety or conformity rather than actual ability.

The business case for inclusive design is hard to ignore. For example, SAP’s "Autism at Work" program boasts a 90% employee retention rate, while EY’s "Neurodiverse Centres of Excellence" have brought in $1 billion in revenue and saved 3.5 million work hours, thanks to innovative contributions from neurodivergent staff. Yet, traditional recruitment methods - like timed tests or panel interviews - are seen as disadvantageous by 76% of neurodivergent job seekers. Making thoughtful adjustments can improve the candidate experience while still maintaining rigorous evaluation standards.

Accommodations for Neurodivergent Candidates

Small changes can make a big difference. For instance, providing interview questions 24–48 hours in advance gives candidates more time to process and prepare. Adjusting the interview environment - such as using quiet rooms with adjustable lighting and allowing noise-canceling headphones - can help reduce sensory distractions like fluorescent lights or background noise.

Microsoft’s "Neurodiversity Hiring Programme" is a great example of this approach in action. Instead of traditional phone screens, they introduced a multi-day "academy" workshop format, leading to the successful hiring of 200 full-time employees in roles spanning finance, engineering, and business operations as of 2024.

"None of this costs a lot and the accommodations are minimal. Moving a seat, perhaps changing a fluorescent bulb, and offering noise-cancelling headphones are the kinds of things we're talking about."

Other adjustments include scheduling 15-minute breaks between interview rounds to help candidates manage their nerves and replacing open-ended "think aloud" prompts with specific, direct questions to maintain focus during assessments.

Breaking Down Barriers for Non-Traditional Candidates

Inclusive interview design also considers candidates from non-traditional backgrounds - those without formal computer science degrees or experience with prestigious employers. By focusing on skills rather than credentials, companies can create opportunities for individuals who may have caregiving responsibilities or limited time for side projects. Eliminating rigid requirements like specific degrees or high GPAs can help widen the talent pool.

Replace academic-style brainteasers with tasks that reflect actual job challenges. Allowing candidates to use tools like Google, third-party libraries, or their own laptops during technical assessments creates a more realistic and equitable evaluation process.

Additionally, shift the focus from "culture fit" to "culture add." This approach values the unique perspectives a candidate brings, rather than simply seeking someone who mirrors the existing team. Sheri Soliman, Senior Staff Software Developer at Shopify, offers this advice:

"Don't ask yourself 'Do I want to grab a beer with this person?'... Ask yourself, 'Can I write good code with this person?'"

To ensure fairness, use objective rubrics with clearly defined behavioral indicators, and avoid culturally specific questions - like those based on niche sports stats or pop culture trivia - that may inadvertently disadvantage candidates from different backgrounds.

Next, we’ll explore how tracking key metrics can further improve the effectiveness of interview processes.

Measuring Interview Effectiveness: Tracking Pass-Through Rates and New-Hire Performance

Once you've established a calibrated interview process, the next step is to measure its effectiveness. Even the most carefully designed systems need regular evaluation to ensure they're doing their job. Without tracking the right metrics, you might miss out on top talent or, worse, make costly hiring mistakes. Consider this: a single poor hire can cost a company up to $240,000 when you factor in recruitment expenses, compensation, and lost productivity. This makes tracking and refining your process a must.

Key Metrics to Track

Predictive validity is a critical measure. It evaluates how well interview scores predict future job performance. Research shows that structured interviews have a validity score of .51, compared to .38 for unstructured ones. That’s a 34% relative improvement in the validity coefficient. To gauge this, compare interview scores with performance reviews conducted at 6 and 12 months. If you don’t see a strong correlation, your rubrics might not be measuring the right skills or traits.
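One common way to run that comparison is a Pearson correlation between interview scores and later review ratings. The sketch below assumes that approach; the `pearson` helper and the sample numbers are invented for illustration and are not data from any study cited here.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence never varies")
    return cov / sqrt(var_x * var_y)


# Made-up data: weighted interview score at hire, and 12-month review rating.
interview_scores = [3.2, 4.1, 2.8, 4.6, 3.9, 3.0]
review_ratings = [3, 4, 3, 5, 3, 2]

validity = pearson(interview_scores, review_ratings)
print(f"observed predictive validity: {validity:.2f}")
```

Because only hired candidates ever receive a performance review, a figure computed this way covers a narrower range of interview scores than the full applicant pool, so it is best read as a rough internal signal.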

Interrater agreement rate checks how consistently interviewers score candidates. It’s typically defined as interviewers’ scores aligning within one point on a 1–5 scale. High agreement rates (above 70%) suggest clear rubrics and effective calibration sessions. On the flip side, low agreement rates could mean interviewers are interpreting performance differently, which undermines the structure of your process.
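The "within one point" definition leaves some room for interpretation. The sketch below takes one reasonable reading: count every pair of interviewers who scored the same candidate, and report the share of pairs whose scores differ by at most one point. The pairwise approach, the `agreement_rate` name, and the sample scores are assumptions.

```python
from itertools import combinations


def agreement_rate(panel_scores, tolerance=1):
    """Share of interviewer pairs whose scores differ by at most `tolerance`.

    `panel_scores` is a list of lists; each inner list holds every
    interviewer's 1-5 score for the same candidate and competency.
    """
    agreeing = 0
    total = 0
    for scores in panel_scores:
        for a, b in combinations(scores, 2):
            total += 1
            if abs(a - b) <= tolerance:
                agreeing += 1
    if total == 0:
        raise ValueError("need at least one pair of scores to compare")
    return agreeing / total


# Made-up scores: four candidates, three interviewers each.
scores = [[3, 4, 4], [2, 4, 3], [5, 5, 4], [1, 3, 2]]
rate = agreement_rate(scores)
print(f"agreement rate: {rate:.0%}")  # 83%
```

Here 10 of 12 pairs agree, which clears the 70% benchmark; the two disagreeing pairs are the ones worth bringing to a calibration session.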

The interview-to-offer ratio is another essential metric. It reveals how well your sourcing and screening processes are working. For instance, if you’re interviewing 10 candidates to make one offer, it might indicate that unsuitable candidates are slipping through your filters. Lower ratios, however, point to stronger initial screenings and a more efficient process overall.

| Metric | What It Reveals | Target Benchmark |
|---|---|---|
| Interrater Agreement | Consistency in scoring and rubric clarity | >70% agreement |
| Predictive Validity | Ability to forecast job performance | .51 for structured interviews |
| Interview-to-Offer Ratio | Effectiveness of pre-interview screening | Lower ratios indicate better filtering |
| Time-to-Hire | Efficiency of decision-making | 25% faster with structured feedback |

It’s also important to monitor demographic pass-through trends. If certain groups consistently advance at lower rates despite having similar qualifications, it could signal unintended barriers in your process. Elena Bejan, People Culture and Development Director at Index.dev, emphasizes that calibration ensures any discrepancies reflect meaningful differences in perspective, not misalignment.
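A simple way to watch pass-through trends is to compute the advance rate per group at a given stage and flag groups that lag well behind the highest rate. The sketch below does that; the group labels, the sample counts, and the `flag_ratio` of 0.8 are illustrative assumptions, and a flag is a prompt to investigate rather than proof of bias.

```python
from collections import Counter


def pass_through_rates(outcomes):
    """Map each group to the share of its candidates who advanced.

    `outcomes` is an iterable of (group, advanced) pairs, where
    `advanced` is True if the candidate passed this stage.
    """
    seen = Counter()
    advanced = Counter()
    for group, did_advance in outcomes:
        seen[group] += 1
        if did_advance:
            advanced[group] += 1
    return {group: advanced[group] / seen[group] for group in seen}


def flag_gaps(rates, flag_ratio=0.8):
    """Groups whose rate is below `flag_ratio` times the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, rate in rates.items() if rate / best < flag_ratio)


# Made-up stage outcomes: 10 candidates per group.
outcomes = (
    [("group_a", True)] * 5 + [("group_a", False)] * 5
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
rates = pass_through_rates(outcomes)
print(rates)             # {'group_a': 0.5, 'group_b': 0.3}
print(flag_gaps(rates))  # ['group_b']
```

With small samples these rates swing widely, so it usually makes sense to aggregate over a quarter or more before acting on a flag.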

Tracking these metrics not only helps you validate your process but also provides a foundation for ongoing improvement, as discussed in the next section.

Using Feedback to Iterate and Improve

Feedback plays a crucial role in refining your evaluation process. Gathering input from both candidates and interviewers creates a loop for continuous improvement. Metrics like candidate drop-off rates and offer acceptance rates can reveal whether your process feels professional and fair. Research shows that rejected candidates are 35% more satisfied when they experience a consistent, structured evaluation process.

Independent scoring, emphasized during calibration sessions, helps reduce biases like anchoring. For example, scoring candidates immediately after each response minimizes memory errors and recency effects. This real-time documentation keeps evaluations objective and reliable.

Conducting quarterly audits is another effective way to maintain quality. These audits can uncover patterns in score distributions, such as whether most candidates receive the same score. If 80% of scores cluster in one range, your rubrics might lack the specificity needed to differentiate between candidates. Analytics can also identify outlier interviewers whose scores consistently deviate from the panel average. These individuals may benefit from additional coaching or pairing with more experienced evaluators.
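Both audit checks described above can be approximated with a few lines of analysis. In this sketch, "one range" is simplified to the single most common score value, and an outlier is any interviewer whose average differs from the panel average by more than `max_gap`; both simplifications, the 0.75 default, and the sample data are assumptions.

```python
from collections import Counter
from statistics import mean


def clustering_share(scores):
    """Share of all scores that land on the single most common value."""
    if not scores:
        raise ValueError("no scores to audit")
    most_common_count = Counter(scores).most_common(1)[0][1]
    return most_common_count / len(scores)


def outlier_interviewers(scores_by_interviewer, max_gap=0.75):
    """Interviewers whose mean score is more than `max_gap` from the panel mean."""
    panel_mean = mean(
        score for scores in scores_by_interviewer.values() for score in scores
    )
    return sorted(
        name
        for name, scores in scores_by_interviewer.items()
        if abs(mean(scores) - panel_mean) > max_gap
    )


# Made-up quarterly data on a 1-5 scale.
all_scores = [3, 3, 3, 3, 4, 3, 3, 3, 2, 3]
print(f"share on most common score: {clustering_share(all_scores):.0%}")  # 80%

by_interviewer = {"ana": [3, 3, 4, 3], "ben": [3, 4, 3, 3], "caz": [5, 5, 5, 4]}
print(outlier_interviewers(by_interviewer))  # ['caz']
```

A consistently high or low scorer is not necessarily wrong, since they may simply see stronger or weaker candidates, so the output is a starting point for a calibration conversation rather than a verdict.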

Rich Rosen, an Executive Recruiter at Cornerstone Search, shared that structured evaluation methods helped his team generate over $250,000 in revenue within six months by improving candidate quality and placement efficiency. This highlights how careful measurement and iterative feedback can drive tangible business results.

Conclusion

Structured interviews explain nearly twice as much of the variance in job performance as unstructured ones, with a predictive validity of .51 versus .38. Without standardized questions, clear scoring rubrics, and properly calibrated interview panels, hiring decisions are left to chance - and the stakes are high. A single poor hire can cost up to $240,000 in recruitment expenses, compensation, and lost productivity.

Creating a strong interview process isn't about crafting clever questions. It's about approaching interviews as a measurement system, one that generates consistent, comparable evidence across all candidates. This involves designing questions based on a formal job analysis, scoring answers immediately based on observable behaviors (not gut feelings), and ensuring independent evaluations before group discussions to avoid biases like anchoring. These practices not only improve efficiency but also pave the way for fair and inclusive hiring.

Shifting to a structured, evidence-driven hiring approach also broadens access to underrepresented talent pools. For instance, SAP's Autism at Work initiative highlights how adapting interview methods for neurodivergent candidates - who make up an estimated 15–20% of the population - can uncover technical talent while achieving retention rates near 90%. Additionally, companies with high racial and ethnic diversity outperform industry norms by 35%. This makes inclusive interview design both a moral commitment and a competitive edge.

"Calibration is more than just a one-time activity; it is a commitment to creating a culture of consistent, fair review that allows you to recruit and retain great personnel faster."

- Elena Bejan, People Culture and Development Director, Index.dev

An effective interview process is never static. Regular calibration sessions, quarterly audits to monitor interviewer consistency, and ongoing validation against new-hire performance ensure your system remains sharp. These strategies create a hiring framework that evolves with your needs. The goal is simple yet powerful: reduce noise, amplify signal, and base hiring decisions on job-relevant evidence rather than subjective impressions. When done right, it’s a win for everyone - your team, your candidates, and your business.

FAQs

How do I start moving from unstructured to structured technical interviews?

To build a fair and reliable hiring process, start by establishing a consistent framework. Use the same set of questions, scoring rubrics, and evaluation criteria for all candidates applying for the same role. Clearly define role levels and standardize any exercises or tasks involved in the evaluation. Shared rubrics are key to maintaining objective grading across the board.

Additionally, conduct calibration sessions with interviewers to align on performance expectations and standards. These sessions ensure everyone is on the same page, reducing inconsistencies. Together, these practices help minimize bias, improve the accuracy of interviews, and create a hiring process that’s equitable for all candidates.

What should a good technical interview rubric include?

A well-structured technical interview rubric lays out clear evaluation criteria that align directly with the skills needed for the job. This approach allows assessments to be grounded in evidence rather than subjective impressions. By incorporating predefined scoring dimensions, the rubric ensures consistency across interviews and minimizes the potential for bias. Prioritizing measurable competencies leads to hiring decisions that are both fair and dependable.

Which interview format fits my role: live coding, take-home, or system design?

The best interview format depends on the role and the specific skills you're assessing. For instance, live coding is ideal for gauging how candidates tackle problems under pressure in real-time. On the other hand, take-home assignments allow you to evaluate their ability to work independently and showcase their practical coding skills. For senior-level positions, system design interviews are a better fit, as they focus on architectural thinking and high-level decision-making. Select the format that aligns with your hiring objectives.
