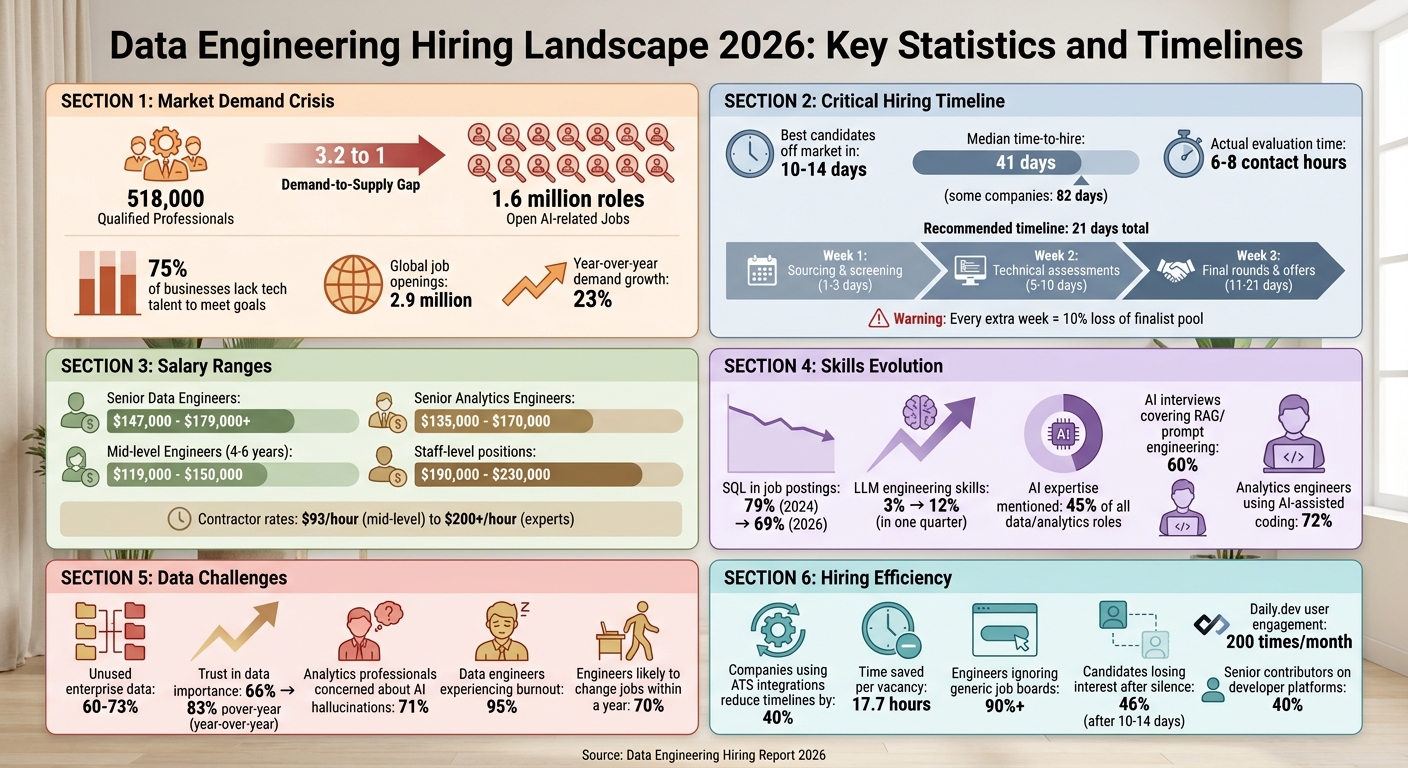

Hiring data engineers and analytics professionals in 2026 is tougher than ever. Companies face a 3.2 to 1 demand-to-supply gap, with only 518,000 qualified professionals available for 1.6 million open AI-related jobs. Roles have evolved significantly, requiring expertise in vector databases, LLM orchestration, and RAG pipelines - skills that barely existed two years ago. Here’s what you need to know:

- Key Challenges: 75% of businesses lack the tech talent to meet goals, and hiring delays (60–90 days) are impacting AI projects and modernization efforts.

- New Skill Demands: Data engineers now need to manage tools like LangChain, Pinecone, and Weaviate, while analytics engineers leverage AI-assisted coding to streamline workflows.

- Hiring Timelines: The best candidates are off the market in 10–14 days, so a fast, structured hiring process is essential to stay competitive.

- Recruitment Models: Companies are balancing full-time roles for core tasks with contractors for specialized projects or surges in demand.

- Remote and Location Strategies: Secondary hubs like Hyderabad and Madrid offer less competition, while remote hiring expands access to global talent.

To succeed, focus on clear job descriptions, efficient timelines, and modern recruitment tools like developer-first platforms. Highlight your tech stack, provide transparent salary details, and evaluate candidates’ decision-making skills - not just their familiarity with tools.

::: @figure  {Data Engineering Hiring Landscape 2026: Key Statistics and Timelines}

{Data Engineering Hiring Landscape 2026: Key Statistics and Timelines}

How Data Engineering and Analytics Roles Have Changed

The landscape of data engineering roles has undergone a major transformation. Today, the field is split into two distinct paths: "Classic" roles, which focus on traditional ETL processes and data modeling, and "AI-adjacent" roles, which emphasize skills like RAG pipelines and vector infrastructure . This division means companies no longer hire for one generic role; instead, they must choose candidates based on their organization's specific technical needs.

Data Engineers vs. Analytics Engineers: What's the Difference?

Data engineers are responsible for building and maintaining data pipelines, ensuring data is ingested, stored, processed, and delivered efficiently. They handle tasks like managing production pipelines, monitoring SLAs, and even participating in on-call rotations . As Tom Kenaley, Senior Partner at KORE1, explains:

"Data engineers build the roads. Data scientists drive on them" .

By 2026, data engineers are expected to work with tools like LangChain to build LLM orchestration layers and manage vector databases such as Pinecone and Weaviate, alongside traditional data warehouses .

Analytics engineers, on the other hand, focus on turning raw data into business-ready insights. They use tools like dbt, SQL, and Snowflake to create analysis-ready models while employing practices like version control and automated testing . This role has evolved significantly, with 72% of analytics engineers now using AI-assisted coding to streamline model creation and documentation .

While both roles require SQL and Python expertise, their focus areas differ. Data engineers are increasingly tested on AI-related skills like RAG, hallucination mitigation, and prompt engineering (with 60% of interviews now covering these areas), whereas analytics engineers are evaluated on their ability to create centralized metrics and semantic models . These differences are reflected in salary ranges: senior data engineers earn $147,000 to $179,000+, while senior analytics engineers earn $135,000 to $170,000 .

Technical Skills Required in 2026

Python has become the go-to language for AI integration, while SQL's prominence in job postings has declined slightly - from 79% in 2024 to 69% in 2026 . The real game-changer lies in AI-related infrastructure skills. For example, LLM engineering skills in data engineering job postings surged from 3% to 12% in just one quarter between late 2025 and early 2026. Additionally, 45% of all data and analytics roles now explicitly mention AI expertise .

| DE Skill Shift | 2024 Requirement | 2026 Requirement |

|---|---|---|

| Orchestration | Airflow / Prefect | Airflow + LLM Orchestration (LangChain) |

| Storage | Snowflake / BigQuery | Snowflake + Vector DBs (Pinecone/Weaviate) |

| Modeling | dbt, Star Schema | dbt + RAG Pipeline Design |

| New Skills | Streaming (Nice to have) | Embedding lifecycle & Hallucination mitigation |

For analytics engineers, AI-assisted workflows are now standard, while dbt remains the backbone of SQL modeling . The industry is also moving toward "Zero ETL" architectures and AI-native data processes, reducing the relevance of traditional ETL specialists . When hiring, look for candidates who can discuss architectural trade-offs - like choosing between Pinecone and pgvector - rather than simply listing technical features .

While technical expertise is essential, the ability to communicate effectively and collaborate across teams is just as critical.

Communication and Collaboration Skills

Technical know-how alone isn't enough to succeed in these roles. As Bruno Lima from phData puts it:

"AI won't fix a messy foundation. It just makes the lack of discipline much more visible" .

Data professionals must be able to translate vague business needs into clear technical requirements and explain complex concepts to non-technical stakeholders . Studies show that 60% to 73% of enterprise data remains unused for analytics, often because of misalignment between what engineers build and what businesses actually need .

With the rise of data democratization, engineers must create datasets that are easy for non-technical teams - like marketing, HR, and sales - to use safely . This approach requires a "product mindset", where data is treated as a product designed with end-users in mind . When assessing candidates, communication skills should be evaluated based on seniority:

- Junior engineers should clearly explain debugging processes.

- Mid-level engineers must document their assumptions and flag potential risks.

- Senior engineers should align technical decisions with business goals and justify trade-offs .

Trust in data has become a key priority, with its importance rising from 66% to 83% year-over-year in 2026. At the same time, 71% of analytics professionals are concerned about AI hallucinations or incorrect data reaching stakeholders . This makes collaboration skills - like negotiating technical debt and pushing back on unrealistic requests - essential for senior-level roles .

Hiring engineers?

Connect with developers where they actually hang out. No cold outreach, just real conversations.

Setting Your Hiring Timeline and Approach

In 2026, speed is everything when hiring data engineers. The best candidates are off the market within 10 to 14 days, so dragging the process beyond three weeks means you're likely losing top talent to faster-moving competitors . While the median time-to-hire for engineering roles is 41 days, some companies take as long as 82 days - a timeline that simply doesn't cut it in today's competitive environment . Interestingly, the actual evaluation time is just 6 to 8 contact hours; the real delays come from scheduling conflicts, approval bottlenecks, and lengthy debriefs . As Tom Kenaley from KORE1 aptly puts it:

"Every silent day between interview rounds is a day your best candidate is talking to someone else" .

To stay competitive, you need to rethink your strategies for reducing time-to-hire. Companies like Linear have streamlined their process to just 12 days by conducting two technical interviews and a founder discussion in a single week . Similarly, Vercel wraps up hiring in 14 to 18 days by using live pair-programming sessions instead of take-home assignments . PostHog takes a "SuperDay" approach, packing all interviews into one day and extending offers within 48 hours . To achieve this level of efficiency, consider pre-scheduling 2 to 3 interview slots weekly for all relevant team members before opening the role. Commit to reviewing every qualified application within 48 business hours and hold hiring committee debriefs within 24 hours of the final interview .

How Long Does It Take to Hire Data Engineers?

Timelines vary widely depending on your hiring approach. Internal hires can take 45 to 90 days, while specialized staffing agencies can shorten this to 3 to 6 weeks . In enterprise settings, the process often stretches to 60 to 90 days . A faster timeline might look like this: 1 to 3 days for sourcing and screening, 2 to 4 days for phone interviews, 5 to 10 days for technical assessments, 11 to 15 days for final rounds, and 16 to 21 days for decisions and offers .

With demand for data engineers growing at about 23% year-over-year and global job openings reaching 2.9 million, the competition is fierce . Every additional week in your hiring process could cost you 10% of your finalist pool to competing offers . To stay ahead, aim for a 21-day framework: dedicate Week 1 to sourcing and screening, Week 2 to technical assessments, and Week 3 to final interviews and offers .

Full-Time, Contract, or Contract-to-Hire: Which to Choose

Once your hiring timeline is optimized, the next step is deciding on the right employment model for your needs.

Full-time roles are ideal for building core infrastructure and executing long-term plans. For example, hiring a Senior Data Engineer as a full-time employee in the first 6 months ensures your foundational systems are in place before bringing on analysts or data scientists . Skipping this step could leave other hires idle, waiting for infrastructure to catch up.

Contract roles are perfect for temporary, project-based needs like feature development, AI pilots, or platform migrations. Specialized platforms can connect you with vetted talent in just 24 to 48 hours . Rates typically range from $93/hour for mid-level engineers to $160/hour for senior talent, with top experts charging $200+/hour .

Contract-to-hire models allow you to test engineers on real projects before committing to a full-time role. This approach is particularly useful for verifying skills or assessing fit before offering a high-salary position . Many companies now use a blended workforce model: a permanent core team handles infrastructure, while contractors tackle specific projects or surges in demand .

Choosing the right model depends on your priorities - whether it's speed, flexibility, or long-term stability.

Building Your Own Pipeline vs. Using Staffing Partners

Recruitment today is a balancing act between developing internal talent pipelines and leveraging external expertise to fill critical data roles quickly. Internal pipelines provide long-term stability and a deep understanding of your organization, but they come with heavy upfront investments in training and mentorship. On the other hand, staffing partners offer speed and access to pre-vetted professionals, though often at a higher cost. Many companies in 2026 are adopting a hybrid approach: maintaining permanent staff for core roles while bringing in specialized contractors for high-demand periods, such as platform migrations or large-scale build phases.

With 75% of businesses reporting tech talent shortages and 2.9 million data-related job vacancies worldwide , relying solely on one recruitment strategy is risky. Specialized staffing partners, for example, can deliver candidates in as little as two weeks, with most placements taking 3 to 6 weeks . This agility is crucial when delays in platform migrations or AI pilots can lead to significant expenses . Let’s explore why these partners are so effective for data roles.

Why Specialized Staffing Partners Work for Data Roles

Specialized staffing firms have a knack for finding passive candidates - those who aren't actively job hunting . They maintain relationships with professionals skilled in areas like machine learning infrastructure, cloud certifications, and modern data technologies (e.g., Snowflake, Databricks, dbt). If you're under tight deadlines, scaling rapidly after securing funding, or need someone with niche expertise - like delivering production-ready code using Apache Iceberg or Lakehouse architectures - a staffing partner can connect you with talent that traditional methods might miss .

The best partners don’t just send resumes - they dive into your data stack to understand your needs before presenting candidates . As Peter Korpak, Chief Analyst & Founder of DataEngineeringCompanies.com, puts it:

"The vendor you want is the one that asks hard questions about your environment before talking about headcount" .

Contract-to-hire models are becoming increasingly popular because they allow you to evaluate a candidate’s actual performance on production systems before committing to a permanent hire . This is particularly useful when assessing judgment - like knowing when a simple batch process is better than distributed computing or when DuckDB is a smarter choice than Spark for small-scale queries .

How to Evaluate Recruitment Partners

Not all staffing partners are created equal, and a proper evaluation goes beyond flashy marketing materials. Look for what Peter Korpak calls "operating evidence" . Ask specific questions like, "Who will review pull requests in week one?" If their answers are vague, it could signal trouble integrating their candidates into your team. Go a step further - interview not just the sales team but also the proposed engineers and architects. You might even consider running a test session where the partner solves a sanitized version of one of your problems to uncover potential risks.

Data shows that companies focusing on embedded delivery achieve 2.5x higher ROI . Yet, many firms still prioritize cost over quality - 70% of buyers choose staffing vendors based on rates alone, a decision linked to a 15% higher project failure rate. Additionally, 42% of data engineering augmentations face delays and compliance issues, eroding up to 18% of projected cost savings . The right partner should understand your technical environment and evolving needs, not just provide candidates who check off a list of tools .

Cost and Speed: Internal vs. External Recruitment

Comparing hourly contractor rates to annual salaries doesn’t tell the whole story. For instance, mid-level data engineers typically cost around $93 per hour as contractors, while experts can charge over $200 per hour . Meanwhile, full-time salaries for similar roles range from $119,000 to $150,000 annually . Delays in hiring or onboarding can quickly escalate costs and stall essential projects.

Building internal pipelines also requires investment in mentorship. A good rule of thumb is to assign one junior engineer for every three seniors, with mentorship goals tied to senior performance reviews . While this approach pays off in the long run, the immediate need for talent often overshadows it. As Chris Hillman, Data Strategist, warns:

"Every junior you don't hire today is a mid-level engineer you won't have in 2028 and a senior you won't have in 2030" .

With 95% of current data engineers experiencing burnout and 70% likely to change jobs within a year , waiting years to develop talent isn’t feasible. The solution? Use external partners to meet immediate demands while building your internal team. Remember, technology decisions made in 2026 can be reversed, but talent decisions have lasting effects .

How to Assess Technical Skills and Architecture Knowledge

With the growing demands in data engineering, it’s crucial to have thorough technical evaluations to ensure candidates can handle production-level challenges. But assessing talent goes beyond just testing SQL or Python skills. The real challenge lies in identifying candidates with real-world, production experience compared to those with only experimental knowledge. While SQL and Python proficiency make up around 60% of data engineering interviews , the ability to make smart decisions under real-world constraints - judgment - is what separates mid-level engineers from senior professionals.

To effectively evaluate candidates, consider a three-step process:

- Stage 1: Screen for reasoning by discussing past trade-offs they’ve made.

- Stage 2: Test practical skills with realistic, time-bound assignments.

- Stage 3: Conduct system design interviews that focus on architectural trade-offs and adaptability.

As MyCulture.ai aptly states:

"Tool fluency is easy to fake. Judgment is not." – MyCulture.ai

During system design interviews, pay attention to how candidates justify complexity. A strong candidate will naturally use phrases like “the tradeoff here is…” multiple times when explaining their decisions. To test adaptability, introduce new constraints mid-conversation - like reducing latency requirements from five minutes to 30 seconds. This approach reveals whether their design can pivot or if they’re stuck on a single solution. These steps lay the groundwork for deeper technical evaluations.

Testing Hands-On Data Engineering Skills

Take-home assignments should reflect real-world tasks but remain concise and practical. Assignments might include loading multiple data sources, creating a streamlined model, and adding tests to ensure idempotency. Beyond code quality, assess how well candidates document their work. Those who write simpler pipelines and clearly explain their limitations often demonstrate better judgment than those who over-engineer solutions.

A critical aspect to evaluate is whether candidates can build idempotent pipelines - pipelines that produce the same results no matter how many times they are rerun. This is key in production environments where jobs may fail or data might arrive late. Additionally, ask candidates to discuss concepts like batch versus incremental loading, Change Data Capture (CDC), and handling schema drift. These topics reveal whether they’re thinking beyond one-off scripts and toward building resilient systems.

For senior roles, dig deeper into their "production scars." Ask for specific examples of pipelines or models they’ve built that served real users at scale. Probe into how they managed failures, what monitoring tools they used, and what measures they implemented for observability. With 60% to 73% of enterprise data going unused for analytics - often due to poorly designed pipelines - it’s essential to hire engineers who understand these pitfalls.

Do Certifications Matter? AWS, GCP, and Azure

Cloud certifications, like those for AWS, GCP, or Azure, can signal a baseline understanding of cloud fundamentals. However, they should never replace hands-on testing. Use certifications as an initial filter, but remember that a candidate with credentials but no production experience can be riskier than one who has shipped reliable systems without certifications.

The real value of certifications lies in demonstrating a candidate’s commitment to learning and their knowledge of specific platforms. For example, if your tech stack relies on AWS, a candidate with AWS certifications might adapt more quickly to services like Glue, Redshift, or EMR. However, the ultimate test is whether they can explain why they’d choose one service over another based on factors like cost, scale, and business needs - not just that they’re familiar with the tools.

Warning Signs During Technical Interviews

Be cautious of candidates who jump straight to tools without first understanding the problem. If someone immediately suggests “I’d use Kafka and Spark” without asking about data volume, latency requirements, budget, or existing infrastructure, it’s a major red flag. The best engineers start by clarifying requirements before proposing solutions.

Also, watch for candidates who only describe ideal scenarios without addressing error handling or fault tolerance. For instance, ask how they’d debug a pipeline if revenue metrics suddenly dropped by 30%. Strong candidates will outline systematic approaches, like checking row counts, validating null percentages, and analyzing logs. Those who can’t describe a debugging process likely lack experience with production failures.

Another red flag is proposing overly expensive solutions for simple problems. Real-time streaming pipelines, for example, can cost up to 100 times more than daily batch jobs - $500/day versus $5/day . Senior engineers should justify such expenses from the outset. As goLance puts it:

"A model that costs $50K/month to serve isn't a working solution. Senior practitioners think about cost-per-prediction from day one." – goLance

Lastly, beware of rigid tool enthusiasts who insist on using one specific tool without considering trade-offs. The data landscape evolves quickly, and engineers who can’t adapt to changing technology or discuss multiple approaches may struggle as your stack evolves. Similarly, candidates who dismiss concerns over data quality, monitoring, or governance are likely to hit roadblocks when building systems that analysts and stakeholders can trust.

Using Location and Market Data to Your Advantage

When it comes to hiring, relying solely on internal pipelines and staffing partners isn’t enough anymore. In 2026, ignoring geographic advantages can seriously hurt your talent acquisition efforts. With demand for talent growing at 23% year-over-year, it’s not just about who you hire - it’s about where you look. As JobsPikr aptly states:

"Location strategy is now part of data infrastructure strategy" .

Sticking to traditional tech hubs is creating roadblocks for many companies. On the other hand, those leveraging location data are finding better opportunities by targeting regions with deep talent pools and less competition. The focus has shifted from cost savings to capability arbitrage, meaning it’s no longer just about finding the cheapest labor but about identifying areas rich in AI-aligned expertise. While major hubs like San Francisco and New York still boast large talent pools, they often suffer from inefficiencies due to "structural mismatches" . In contrast, secondary hubs like Hyderabad, Pune, and Madrid offer more accessible talent with fewer market pressures, avoiding the productivity slowdowns seen in overheated areas.

Where to Find Data Engineering Talent

In 2026, Seattle leads globally for elite engineering talent, with 38.2% of its candidates scoring in the top quartile . Toronto has climbed to 5th place, with 29.2% of top-tier talent . But rankings only tell part of the story. Different cities cater to specific types of employers, which shapes the kind of talent you’ll find. For example:

- Tech-heavy cities like Seattle and Austin have 60–80% of their workforce in tech companies.

- Enterprise hubs like New York and Dallas lean toward 45–60% enterprise employment.

- Outsourcing hotspots like Bangalore and Warsaw see 30–50% of engineers working in IT services .

These distinctions matter. Engineers in tech-dominated hubs often prioritize cutting-edge tools and equity compensation, while those in enterprise-focused regions may value stability and benefits more. Meanwhile, Latin America has become a go-to nearshore option for U.S. companies, offering 40–50% cost savings compared to domestic hires, along with time-zone alignment that traditional outsourcing hubs can’t match .

Pay close attention to job posting intensity in your target regions. For instance, if postings for data engineers have surged 35% year-over-year - as they have in early 2026 - you can expect rising salaries and longer hiring timelines. To stay ahead, track salary trends quarterly instead of annually, as last year’s benchmarks are already outdated . These insights are essential for navigating the challenges and opportunities of remote hiring.

Hiring Remote Data Engineers: Benefits and Challenges

Remote hiring has opened up access to a global talent pool, which is critical as demand for data and AI specialists is expected to outpace supply by 30–40% by 2027 . Even leadership roles like Head of Data Engineering are becoming more remote-friendly, especially at mid-sized companies . Remote flexibility allows companies to scale their sourcing efforts, but it doesn’t completely eliminate the challenges of hiring in a competitive market . Now, you’re not just competing locally - you’re up against global players, and speed is often the deciding factor.

However, remote hiring comes with its own set of hurdles. Time zone differences can slow down agile workflows, and assessing candidates remotely requires more rigorous screening to avoid hiring someone with strong credentials but limited real-world experience . Even with remote options, the average time to fill a data engineering role in an enterprise setting remains 60 to 90 days . If your hiring process drags on for more than three weeks from initial screen to offer, you risk losing top candidates - whether they’re local or remote .

The shift to remote work has also narrowed salary gaps. While some non-tech companies can save 20–30% by hiring remotely , competition for senior roles ($147,000–$179,000 base) and staff-level positions ($190,000–$230,000) remains fierce . Mid-level engineers with 4–6 years of experience, earning between $119,000 and $150,000, are particularly sought after, often juggling multiple job offers simultaneously . Your edge isn’t just offering remote work - it’s showcasing a modern tech stack (think Snowflake, dbt, Airflow) and running a faster, more efficient hiring process than companies still stuck in outdated methods.

Tools and Platforms for Finding Data Engineering Talent

By 2026, traditional hiring methods are becoming less effective. Over 90% of engineers on specialized platforms now ignore outreach from generic job boards . Instead, the focus has shifted to developer-first communities - spaces where engineers actively engage, learn, and stay updated on their field. These platforms go beyond static profiles, using real-time activity and behavior to connect recruiters with candidates. This shift enables more targeted and efficient hiring.

Using Developer-First Platforms Like daily.dev Recruiter

Platforms such as daily.dev Recruiter are transforming how recruiters connect with data engineers by meeting them in the spaces they already frequent. Engineers interact with daily.dev an impressive 200 times per month . Unlike traditional resume databases, this platform is an active community where 85-90% of users are already employed but open to the right opportunity when approached by a trusted source .

What makes these platforms stand out is their behavioral matching system. Instead of relying on keyword searches, daily.dev connects recruiters with candidates based on their reading habits, community contributions, and current projects. For example, you can target engineers in specific groups, or "squads", like "Frontend Architects" or "GenAI", ensuring you're reaching professionals with a proven interest in your tech stack . With 40% of the talent pool made up of senior contributors and engineering leaders , you’re not just sourcing junior talent - you’re accessing seasoned professionals and decision-makers.

To maintain quality interactions, the platform uses a double opt-in process where both parties must agree before a conversation begins . This eliminates cold outreach and ghosting entirely. Nimrod Kramer, CEO & Co-Founder of daily.dev, explains:

"We built a place where engineers can turn off the noise. To enter this space, you don't need a hack. You need trust" .

Pricing is simple: $350 per role, per month, with no placement fees, long-term contracts, or limits on introductions .

How to Reach Out to Candidates Effectively

Cold outreach is no longer effective. Success in 2026 comes from warm introductions and transparency. When contacting candidates through developer-first platforms, focus on what matters to them: your tech stack (e.g., Snowflake, dbt, Airflow), clear 30/90-day objectives, and salary ranges . Engineers want to see how the role aligns with their career goals before committing to a conversation.

To streamline the process, use custom screening questions - up to three short ones - to assess technical fit and interest before scheduling interviews . This saves time for both parties. Also, speed is crucial. With 46% of candidates losing interest after 10-14 days of silence , prompt follow-ups can make all the difference.

Connecting Recruitment Platforms with Your ATS

Once you’ve engaged candidates, integrating your sourcing platform with your Applicant Tracking System (ATS) is essential. A smooth connection between these systems ensures no opportunities slip through the cracks. Today’s platforms offer native two-way sync with ATS tools like Greenhouse, Lever, Ashby, and Workable, automatically syncing candidate profiles and updates in real time . This eliminates manual data entry and keeps the hiring team up to date.

The benefits are clear: companies using ATS integrations reduce hiring timelines by 40% and save 17.7 hours per vacancy on administrative tasks . On the flip side, disconnected systems can cost recruiters approximately $17,000 annually in lost productivity . To avoid these inefficiencies, set up automated status updates to keep candidates informed via email or SMS as their applications progress. Additionally, use real-time monitoring to catch API or webhook issues before they disrupt your workflow . The goal is a seamless process from initial match to final offer, ensuring no data is lost between platforms and candidates stay engaged throughout the hiring journey.

Conclusion

Recruiting data engineers and analytics talent in 2026 requires rethinking traditional hiring methods. The old strategies - posting on generic job boards, waiting for applications, and conducting drawn-out interview processes - are outdated in a market where 75% of companies struggle to find the tech talent they need .

Start with a clear plan. Focus on hiring data engineers first to build the foundational infrastructure before expanding your analytics team. As Marcus Clement from Spectraforce explains:

"Data engineering is now an infrastructure strategy. Talent acquisition must reflect that reality" .

Speed matters. Aim to reducing time to hire for technical roles to under three weeks, as opposed to the usual 60- to 90-day process . Tools like daily.dev Recruiter can help you connect with engineers who are already active in their field. Highlight modern tech stacks, set clear 30/90-day goals, and provide transparent salary details to stand out.

When evaluating candidates, focus on their decision-making abilities rather than just their familiarity with tools. For example, ask how they handle trade-offs like batch versus streaming processing or balancing cost and performance . If you include take-home assignments, keep them short (under four hours) and compensate candidates fairly ($200–$500) to respect their time . Lastly, streamline your hiring process by integrating your sourcing tools with your ATS. This is especially effective when implementing automated candidate sourcing to maintain a steady pipeline. Adapting to these practices is essential in today’s competitive hiring environment.

FAQs

What skills should I prioritize for AI-adjacent data engineers in 2026?

In 2026, prioritizing skills in AI and large language model (LLM) technologies will be crucial. Expertise in areas like pipeline ownership, debugging production issues, streaming, change data capture (CDC), and lakehouse development will also be highly sought after. Proficiency with tools such as Snowflake, dbt, Spark, Kafka, and vector databases is essential. Additionally, a solid understanding of architecture and reliability will help meet the growing needs of data-focused industries.

How can I run a 2–3 week hiring process without sacrificing quality?

To manage a 2–3 week hiring process without cutting corners, focus on making evaluations efficient and eliminating unnecessary delays. Here’s how to make it work:

- Prioritize quick scheduling for interviews and assessments.

- Use structured interview formats to ensure consistency and fairness.

- Set clear timelines for decisions and stick to them.

Complete all evaluations promptly, deliver feedback without delay, and extend offers as soon as possible. Acting quickly is critical since top candidates often make decisions within 10 days. By streamlining the process, you can maintain high standards while meeting tight deadlines.

What interview signals best predict real production experience?

When evaluating candidates for data engineering roles, look for those who can provide clear, detailed examples of end-to-end projects they’ve worked on. This includes walking through the entire process: problem framing, choosing the right model, training, evaluating, and deploying it.

What sets strong candidates apart is their ability to explain trade-offs they made along the way, the lessons they learned, and how they applied practical judgment to solve real-world challenges.

Another key indicator? Candidates who can dive into topics like architecture, reliability, governance, and team collaboration. These areas highlight experience that’s directly relevant to production settings, where systems need to be scalable, reliable, and well-integrated with team workflows.

.png)