Navigating hiring compliance in 2026 is no longer optional - it's a legal requirement with serious consequences. New regulations across the U.S. and EU are reshaping how technical recruiters use AI, handle candidate data, and ensure fair hiring practices. Here's what you need to know:

- AI Tools in Hiring: States like New York, California, and Colorado now require bias audits, candidate consent, and transparency for automated hiring tools. The EU AI Act classifies most recruitment AI as "high-risk", demanding strict oversight and documentation.

- Pay Transparency: Salary ranges must be included in job postings in many U.S. states, with remote roles needing to comply with multiple jurisdictions.

- Data Privacy: GDPR, CCPA, and other laws require clear data handling practices, including deletion requests and human oversight for AI decisions.

- Bias and Accessibility: Federal and state laws mandate that hiring processes avoid adverse impacts and accommodate candidates with disabilities.

Recruiters must balance compliance with effective hiring by conducting audits, maintaining detailed records, and embedding ethical tech recruitment practices and human oversight into AI-driven processes. The stakes are high, but a proactive approach can help avoid legal risks while building trust with candidates.

The Compliance Landscape for Technical Recruiters

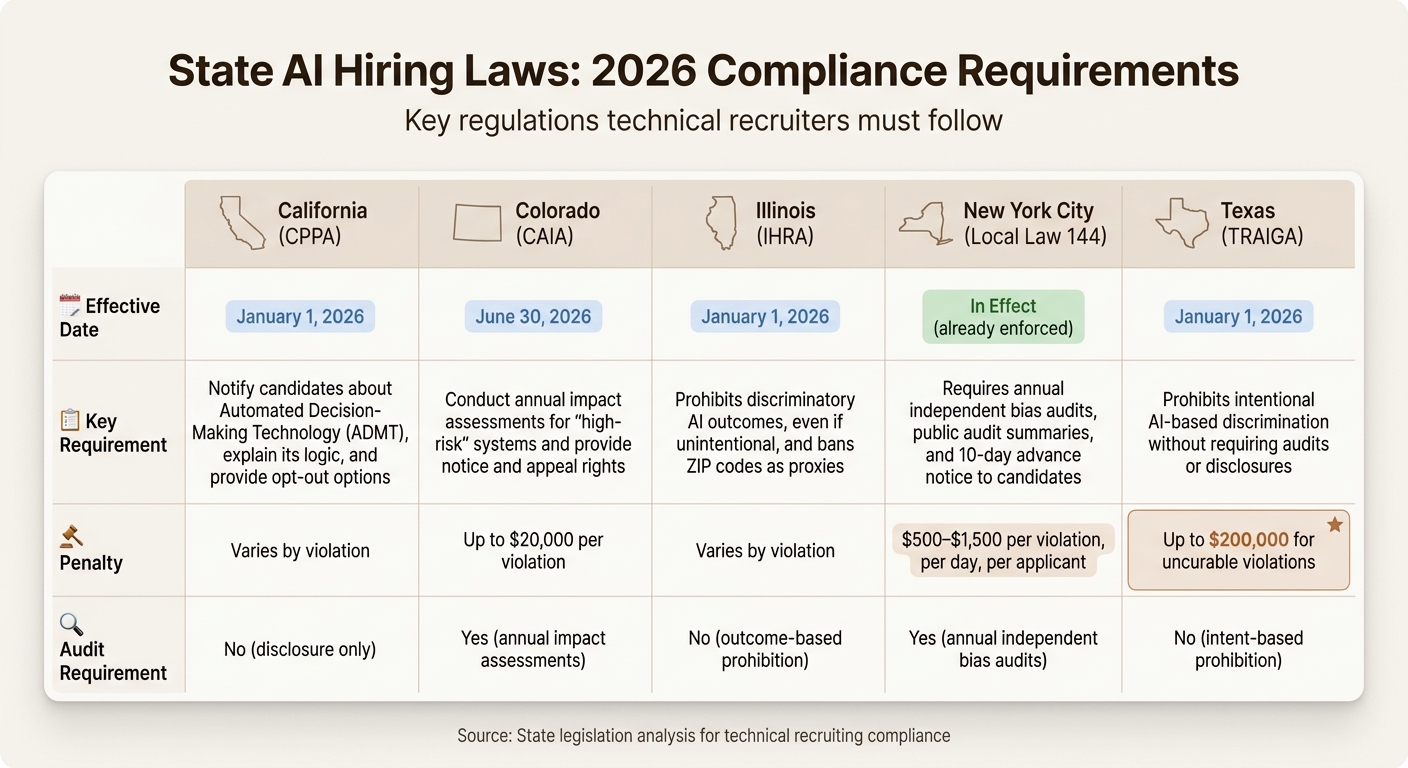

Figure: 2026 State AI Hiring Laws: Requirements, Deadlines, and Penalties Comparison

By 2026, technical recruiters are navigating a maze of regulations that combine federal anti-discrimination laws with state-specific rules on AI, pay transparency, and data privacy. Successfully managing these regulations can mean the difference between creating a defensible hiring process and facing costly legal challenges.

The difficulty lies not just in the sheer number of laws but in how each targets a different stage of hiring. Federal EEOC rules focus on whether screening criteria lead to adverse impacts. State AI laws require disclosures and audits for automated tools. Pay transparency laws dictate what salary details must appear in job postings. Beyond legal requirements, transparency builds trust with candidates throughout the hiring process. Meanwhile, data privacy rules regulate how candidate information is collected, stored, and deleted. For technical recruiters, a single hire can trigger multiple compliance requirements.

Here’s a reality check: 99% of Fortune 500 companies use AI to screen resumes, and 55% of HR leaders rely on algorithms to make hiring decisions. Yet many recruiters wrongly believe that using a third-party tool shields them from liability. It doesn’t. As Akerman LLP explains, "The 'we're just using a vendor's tool' defense is rapidly eroding."

This sets the stage for a closer look at the federal and state regulations shaping technical recruiting today.

Federal and State Laws You Need to Know

At the federal level, the EEOC oversees compliance with Title VII of the Civil Rights Act and the Uniform Guidelines on Employee Selection Procedures. Employers are held accountable for ensuring that automated tools don’t create adverse impacts, are job-related, and accommodate individuals under the ADA - even if the tools are developed by an external vendor. For federal contractors, the Office of Federal Contract Compliance Programs (OFCCP) adds requirements like affirmative action plans and audits to monitor hiring practices across protected groups.

On the state level, AI laws vary widely depending on where candidates live. Here’s a snapshot of key state regulations:

| State | Effective Date | Key Requirement | Penalty |
|---|---|---|---|
| California (CPPA) | January 1, 2026 | Notify candidates about Automated Decision-Making Technology (ADMT), explain its logic, and provide opt-out options | Varies by violation |
| Colorado (CAIA) | June 30, 2026 | Conduct annual impact assessments for "high-risk" systems and provide notice and appeal rights | Up to $20,000 per violation |
| Illinois (IHRA) | January 1, 2026 | Prohibits discriminatory AI outcomes, even if unintentional, and bans ZIP codes as proxies | Varies by violation |
| New York City (Local Law 144) | In effect | Requires annual independent bias audits, public audit summaries, and 10-day advance notice to candidates | $500–$1,500 per violation, per day, per applicant |
| Texas (TRAIGA) | January 1, 2026 | Prohibits intentional AI-based discrimination without requiring audits or disclosures | Up to $200,000 for uncurable violations |

These laws mean recruiters must carefully adjust their hiring processes to stay compliant. Even a small oversight could lead to escalating legal and financial consequences.

Pay transparency laws are also evolving. California's SB 642 requires that salary ranges include bonuses and stock options, not just base pay. Colorado mandates salary ranges in all job postings for roles that can be performed in the state. Delaware’s law, effective in 2027, will require employers to keep salary records for at least three years. For recruiters hiring remote developers, this creates a challenge: a single job posting may have to meet the disclosure rules of multiple states. A California role that a Colorado-based developer could perform remotely, for example, can trigger both states' requirements.

Data Privacy Regulations: GDPR and CCPA

By 2026, 20 U.S. states have enacted comprehensive privacy laws, with California’s CCPA/CPRA leading the way. These laws grant job applicants and employees rights to access, delete, correct, or opt out of data use. States like Indiana, Kentucky, and Rhode Island have also introduced new privacy laws in 2026.

For U.S.-based recruiters, the GDPR is still relevant if they source candidates from Europe, hire for European offices, or use tools that process data within the EU. Under Article 22, candidates have the right to challenge "solely automated" decisions that have significant effects. This means recruiters must include a "human-in-the-loop" process where a trained professional reviews AI recommendations before finalizing decisions.

The EU AI Act further classifies most recruitment AI systems as "high-risk", requiring detailed risk management, technical documentation, and human oversight. If you’re using AI to rank candidates from platforms like GitHub or Stack Overflow, you’ll need to maintain records of how the system generates its rankings and provide candidates with explanations of the logic used.

Adding to the complexity, AI-generated content - such as prompts, drafts, and conversation logs - is now considered discoverable electronically stored information (ESI) in legal cases. If a candidate files a discrimination claim, the internal logic of your AI tool - even if it wasn’t shared externally - can be subpoenaed. As Jen Rein of SHIFT HR Compliance Training warns:

"The biggest compliance risk isn't that you are using AI, it's that you are using it casually, without clear oversight, documentation, or accountability."

What Makes Technical Recruitment Compliance Different

Technical recruiters face a distinct set of challenges when it comes to compliance, particularly in how they evaluate candidates and handle data. Unlike traditional roles, technical recruitment often involves sourcing from platforms that weren’t designed for hiring. For example, recruiting a marketing manager via LinkedIn is straightforward because it’s a platform built for professional networking. But sourcing a backend engineer from GitHub involves navigating a space built primarily for code collaboration, not recruitment.

The compliance landscape becomes even more complex during the screening process. Technical recruiters might analyze open-source contributions, review coding test results, or use AI tools to match skills like "Go experience" with "Rust roles" based on semantic similarities. These activities generate unique types of data, each subject to privacy laws, anti-discrimination rules, and emerging AI regulations. As Susan Anderson from Mitratech explains:

"We have found that it's the combination of human experts and technology together that creates the most reliable outcome for our customers."

The challenge lies in maintaining compliance at every stage of the process.

Here’s an important consideration: only 16% of developers are actively looking for jobs, but 75% are open to new opportunities. This means technical recruiting often relies on passive sourcing from platforms like GitHub or Stack Overflow, which introduces unique compliance hurdles. Let’s break down the specifics.

Sourcing from GitHub, Stack Overflow, and Developer Communities

Platforms like GitHub and Stack Overflow offer a treasure trove of signals about a candidate’s skills. GitHub hosts over 100 million repositories, while Stack Overflow sees more than 50 million unique visitors each month. However, using these platforms for recruitment comes with serious compliance risks.

1. Consent Issues

Even though GitHub and Stack Overflow profiles are public, users don’t always expect their information to be used for hiring purposes. Using third-party tools to extract unlisted contact details, like email addresses, can raise privacy concerns under laws like GDPR and CCPA [14, 17]. For example, scraping emails from commit histories or guessing addresses with pattern-matching techniques can violate data minimization principles.

2. Automated Ranking Risks

AI tools that rank candidates based on GitHub activity - like merged pull requests or contribution frequency - must comply with laws like NYC Local Law 144. This law mandates an annual bias audit for automated employment decision tools.

3. Protected Attribute Inference

Technical profiles often include data that could unintentionally reveal protected attributes like age, gender, or ethnicity. For example, profile photos or social media links can lead to inadvertent bias. Under the EU AI Act, recruitment AI systems are classified as high-risk and require strict oversight. To stay compliant, recruiters must suppress these attributes during ranking processes.

A good practice here is to focus only on verifiable, job-related proof points. For instance, instead of making subjective inferences about a candidate’s "passion" or "culture fit", use GitHub data to confirm specific skills. Cite concrete examples, like "authored 5 merged pull requests improving PostgreSQL query performance". Always link directly to the source code or commit to ensure your process is transparent and defensible.

| Platform | Compliance Risk | Mitigation Strategy |
|---|---|---|
| GitHub | Inferring protected attributes from photos or social links | Remove names and photos; focus on job-related indicators like merged pull requests |
| Stack Overflow | Using reputation scores as a sole ranking factor | Use scores alongside other inputs and ensure human review before final decisions |
| Developer Communities | Extracting unlisted emails via third-party tools | Stick to publicly listed contact information; avoid scraping or pattern-matching [14, 17] |

Managing Code Test and Technical Assessment Data

Compliance challenges don’t stop at sourcing. The data generated during technical assessments - like code submissions, video walkthroughs, and timed performance metrics - requires careful handling.

For example, under the Illinois Artificial Intelligence Video Interview Act, companies must delete video interviews within 30 days of a candidate’s request. If you’re using AI to analyze video walkthroughs - tracking things like speech patterns or eye contact - you must obtain explicit consent before recording.

Retention and deletion policies are equally critical for code test data. GDPR and CCPA allow candidates to request the deletion of their assessment data. Automated scoring systems that evaluate code quality or debugging speed must comply with GDPR Article 22, which restricts decisions made solely by automation and guarantees the right to human review. Ameya Deshmukh puts it succinctly:

"If you can't justify why you collect a signal (e.g., webcam gaze), don't collect it."

To stay compliant, map every data element you collect to a specific legal justification. For example, if you’re measuring time-to-completion in a coding test, document why that metric is relevant to the role. Additionally, configure your assessment tools to automatically delete data once the retention period ends.
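One way to keep that mapping honest is to store it as data rather than in a policy document. The sketch below is a minimal illustration: the `DataElement` fields, the example signals, and the retention periods are all hypothetical and would need to come from your own legal review.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class DataElement:
    name: str
    justification: str   # why this signal is relevant to the role
    retention_days: int  # hypothetical retention period set by your policy


def unjustified(elements: List[DataElement]) -> List[str]:
    """Names of collected signals that have no documented justification."""
    return [e.name for e in elements if not e.justification.strip()]


def is_expired(element: DataElement, collected_on: date, today: date) -> bool:
    """True once the retention period has passed and the data should be purged."""
    return today > collected_on + timedelta(days=element.retention_days)


# Hypothetical inventory for a timed coding test.
inventory = [
    DataElement("time_to_completion", "Role requires on-call incident response", 180),
    DataElement("webcam_gaze", "", 180),
]
print(unjustified(inventory))
```

Anything returned by `unjustified` is a candidate for removal from the assessment, and `is_expired` can drive a scheduled purge job.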

Accessibility is another key consideration. If a timed coding test disadvantages a candidate with a disability, you’re legally required to provide reasonable accommodations under the ADA. This might include offering untimed tests or oral explanations. Failing to address accessibility risks discrimination claims, even if the bias is unintentional.

Reducing Bias in Technical Screening

Meeting EEOC standards means ensuring evaluation criteria align directly with job requirements. Bias often sneaks in when irrelevant factors, like a candidate's educational pedigree, are used as stand-ins for actual technical ability. For example, attending a prestigious school may reflect access to resources rather than true competence, and this can tie closely to a candidate's socioeconomic background.

Legally, every screening criterion must be tied to the job and meet business needs. This means interview questions and evaluation metrics must be backed by a current job analysis that connects them to essential job functions. If the selection rate for any protected group falls below 80% of the rate for the group with the highest selection rate, the EEOC could flag the process as having an adverse impact under the four-fifths rule. As Ameya Deshmukh, Director of Recruiting at EverWorker, puts it:
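The four-fifths comparison is simple arithmetic, and a short script makes it easy to repeat. This is a minimal sketch rather than a validated audit method: the function names, group labels, and counts are hypothetical, and a ratio below 0.8 is a prompt for investigation, not a legal conclusion.

```python
from typing import Dict, List, Tuple


def impact_ratios(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate.

    `outcomes` maps a group label to (selected, applicants).
    """
    rates = {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0  # skip groups with no applicants at this stage
    }
    if not rates:
        raise ValueError("no groups with applicants")
    top_rate = max(rates.values())
    if top_rate == 0:
        # Nobody was selected, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """List groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Hypothetical counts: (candidates advanced, candidates screened).
example = {"group_a": (48, 120), "group_b": (18, 75)}
print(flagged_groups(example))
```

In this made-up example, group_a advances at 40% and group_b at 24%, so group_b's ratio is about 0.6 and it is flagged for review.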

"The question isn't 'Can we use AI?' It's 'Can we prove it's fair, valid, and legally defensible?'"

Structured processes help reduce legal risks while improving hiring quality by focusing on measurable skills. These principles pave the way for using structured evaluations and blind reviews to further cut down on bias.

Using Structured Interviews and Standardized Rubrics

In structured interviews, all candidates are asked the same set of core questions, and their answers are assessed against predefined criteria. This method prevents "panel drift", where interviewers unconsciously shift their standards based on individual impressions.

To make this work, set evaluation rubrics ahead of time. Break down each technical competency - like debugging, API architecture, or systems design - into specific Knowledge, Skills, Abilities, and Other characteristics (KSAOs) required for the role. Assign more weight to must-have skills than to nice-to-haves. For instance, if Kubernetes expertise is essential, clearly outline what mastery looks like, such as explaining pod lifecycle details or troubleshooting common errors.

Every score should be backed by concrete evidence. This not only ensures fairness but also creates a defensible record of job-related decision-making.
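A weighted rubric with mandatory evidence can be sketched in a few lines. The 1-to-5 scale, the weights, and the `RubricScore` fields below are assumptions chosen for illustration, not a prescribed scoring scheme.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RubricScore:
    competency: str
    weight: float  # higher for must-have skills than for nice-to-haves
    score: int     # assumed scale: 1 (no evidence of skill) to 5 (mastery)
    evidence: str  # what the candidate actually said or did


def weighted_total(scores: List[RubricScore]) -> float:
    """Weighted average of rubric scores; refuses scores without evidence."""
    if not scores:
        raise ValueError("at least one rubric score is required")
    for s in scores:
        if not s.evidence.strip():
            raise ValueError(f"missing evidence for {s.competency}")
        if not 1 <= s.score <= 5:
            raise ValueError(f"score out of range for {s.competency}")
    total_weight = sum(s.weight for s in scores)
    return sum(s.weight * s.score for s in scores) / total_weight


# Hypothetical interview notes for a role where Kubernetes is a must-have.
notes = [
    RubricScore("Kubernetes", 3.0, 4, "Explained pod lifecycle and debugged a CrashLoopBackOff"),
    RubricScore("API design", 1.0, 2, "Described REST basics but not versioning"),
]
print(weighted_total(notes))
```

Rejecting a score that has no evidence attached is the design choice that matters here: it forces the defensible record to exist at the moment the score is entered.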

When incorporating AI into candidate evaluations, structured rubrics provide a framework for human reviewers to assess AI-generated recommendations. This oversight is crucial to avoid fully automated decisions, which can conflict with GDPR Article 22. If a human overrides an AI recommendation, there should be a written explanation to back the decision.

Regular adverse impact analyses are also key. Monitor pass-through rates at each stage of the hiring process across different demographic groups. If, for example, backend engineers from a particular group are advancing at lower rates, identify the features of the screening process that may be causing the disparity.
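Stage-level monitoring can be sketched as a small funnel calculation. The stage names and the record shape (`group`, `passed`) are hypothetical; in practice the inputs would come from your ATS export, and demographic data should be handled under whatever access controls your counsel requires.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

STAGES = ["screen", "interview", "offer"]  # hypothetical stage names


def pass_through_rates(
    candidates: Iterable[dict], stages: List[str] = STAGES
) -> Dict[Tuple[str, str], float]:
    """Map (stage, group) to the share of candidates who entered and passed it."""
    entered = defaultdict(int)
    passed = defaultdict(int)
    for c in candidates:
        for stage in stages:
            entered[(stage, c["group"])] += 1
            if stage not in c["passed"]:
                break  # the candidate stopped here and never entered later stages
            passed[(stage, c["group"])] += 1
    return {key: passed[key] / count for key, count in entered.items()}


# Hypothetical records: which stages each candidate passed.
records = [
    {"group": "a", "passed": {"screen", "interview"}},
    {"group": "a", "passed": {"screen"}},
    {"group": "b", "passed": set()},
    {"group": "b", "passed": {"screen"}},
]
for key, rate in sorted(pass_through_rates(records).items()):
    print(key, rate)
```

Comparing rates for the same stage across groups shows where in the funnel a disparity first appears, which narrows down the screening step to examine.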

| Strategy | Compliance Benefit | Practical Implementation |
|---|---|---|
| Structured Rubrics | Ensures criteria are job-related | Map competencies to essential job functions; prioritize critical skills. |
| Blind Resume Review | Reduces risk of biased treatment | Remove names, photos, and schools; use tools to identify relevant skills objectively. |
| Bias Audits | Meets legal standards like NYC Local Law 144 | Compare selection rates across groups; identify and address problematic screening steps. |
| ADA Accommodations | Meets ADA/EEOC requirements | Offer alternative assessments and human review options for candidates with disabilities. |

Blind Resume Reviews for Engineering Roles

To ensure fair evaluations, identity cues should be removed from resumes. Blind resume reviews involve redacting details like names, photos, gender markers, school names, graduation dates, and locations before the evaluation stage. This helps reviewers focus on technical qualifications and job-related outcomes.
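If resumes are already parsed into structured fields, the redaction step can be as simple as dropping keys before reviewers see the record. This sketch assumes a flat dictionary with hypothetical field names; free-text sections such as cover letters would need separate handling that is not shown here.

```python
# Hypothetical field names for identity cues to hide from reviewers.
REDACTED_FIELDS = {"name", "photo_url", "gender", "school", "graduation_year", "location"}


def blind_copy(resume: dict) -> dict:
    """Return a copy of the parsed resume without identity-revealing fields."""
    return {key: value for key, value in resume.items() if key not in REDACTED_FIELDS}


# Hypothetical parsed resume.
parsed = {
    "name": "Jane Doe",
    "school": "Example University",
    "graduation_year": 2012,
    "skills": ["Go", "PostgreSQL"],
    "achievements": ["Cut API latency by 40% during a microservices migration"],
}
print(blind_copy(parsed))
```

The original record stays untouched, so the full resume remains available for later stages where identity is needed, such as scheduling.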

Details like school names and graduation years often act as proxies for factors like age or socioeconomic background. Removing these elements minimizes unconscious bias.

Automated applicant tracking systems (ATS) can help by redacting sensitive details during the initial review phase. Clearly define which parts of the process can be assisted by AI and which require human oversight.

Keep detailed records of every decision. For candidates who advance, document specific achievements, such as "Led migration from monolithic to microservices architecture, reducing API latency by 40%." For candidates who are not selected, note the specific technical gaps identified.

Blind reviews should also accommodate candidates with disabilities. If a candidate requests adjustments due to a disability, such as extra time for a technical assessment, these accommodations must be provided. This approach is both fair and legally mandated.

Conduct regular adverse impact analyses to ensure blind screening is achieving its goal of reducing bias. Compare selection rates across protected groups monthly or quarterly. As Jen Rein, Content Strategist at SHIFT HR Compliance Training, explains:

"Legal exposure increasingly depends on whether employers can explain decisions, document processes, and demonstrate fairness."

Candidate Data Handling and Privacy Requirements

When sourcing developers from platforms like GitHub or Stack Overflow, you're dealing with personal data, which brings legal responsibilities under regulations like GDPR, CCPA, and state privacy laws. Compliance isn't optional - violations can lead to hefty penalties, with GDPR fines reaching as high as €20M or 4% of global annual turnover.

Start with data minimization. Only collect information that's directly relevant to the role you're hiring for. For instance, if you're looking for a backend engineer, focus on evidence of API design skills - such as public repositories, conference presentations, or articles. Avoid irrelevant data, like analyzing webcam behavior during coding tests.

GDPR Article 22 restricts decisions based solely on automated processing when they significantly affect candidates, which in practice means building in human oversight. If an applicant tracking system (ATS) or AI tool flags someone based on their activity in developer communities, a human must review the recommendation and have the authority to override it. Make sure to document decisions with clear reasoning. For example, you might note that a candidate was advanced due to their Kubernetes expertise or not selected due to insufficient experience with distributed systems.

The following sections outline how to handle consent and data deletion requests while staying compliant with these legal standards.

Managing Consent and Data Deletion Requests

Explicit consent isn't always required to source candidates from public profiles. Under GDPR, you can rely on "legitimate interests" as a lawful basis, provided you complete a Legitimate Interests Assessment (LIA) and implement safeguards. When adding someone from platforms like LinkedIn or GitHub to your ATS, you must inform them about the data processing and give them the option to object or request deletion.

If a candidate requests data deletion, GDPR Article 17 gives you one month to comply. This means removing their information from all storage locations, including ATS platforms, emails, spreadsheets, local drives, and backups. Before processing, always verify the candidate's identity to ensure the request is legitimate.

Creating a standardized workflow for deletion requests is essential. Many modern ATS tools now offer "Right to be Forgotten" features, which automate the process across databases and archives. Document each request thoroughly, including the verification process and confirmation of deletion, to protect your organization during audits.

Here’s a quick reference table for regulatory deletion requirements:

| Regulation | Deletion Requirement | Deadline | Max Penalty |
|---|---|---|---|
| GDPR | Right to Erasure (Article 17) | 1 Month | €20M or 4% of global annual turnover |
| CCPA | Right to Delete | Varies (usually 45 days) | $7,500 per intentional violation |
| Illinois AI Video Interview Act | Video interview deletion | 30 Days | Varies by violation type |
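The deadlines in the table can be turned into due dates for a deletion-request queue. The sketch below is illustrative only: the regulation keys are hypothetical labels, "one month" is interpreted as the same day of the next calendar month (clamped to the month's last day), and the 45-day CCPA figure is the usual case from the table. How a deadline is counted in a specific case is a question for counsel.

```python
import calendar
from datetime import date, timedelta

# Day-based windows taken from the table above; keys are hypothetical labels.
DAY_WINDOWS = {"CCPA": 45, "IL_AIVIA": 30}


def add_one_month(d: date) -> date:
    """Same day next month, clamped to the last day of that month."""
    year = d.year + 1 if d.month == 12 else d.year
    month = 1 if d.month == 12 else d.month + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def deletion_due(regulation: str, received: date) -> date:
    """Latest completion date for a deletion request under the assumed windows."""
    if regulation == "GDPR":
        return add_one_month(received)
    return received + timedelta(days=DAY_WINDOWS[regulation])


print(deletion_due("GDPR", date(2026, 1, 31)))
print(deletion_due("CCPA", date(2026, 3, 1)))
```

Storing the computed due date alongside the verification and confirmation records gives auditors a single timeline per request.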

GDPR Rules for Sourcing from Developer Communities

Recruiters must follow strict GDPR rules when sourcing candidates from developer platforms like GitHub or Stack Overflow. Stick to public, role-relevant data like repositories, conference talks, or articles, and avoid scraping private information or making assumptions about protected attributes.

Provide candidates with clear, straightforward notices about the data you collect, why it's being used, and how AI contributes to decision-making. These notices should be easily accessible - such as on your career page - and properly versioned to show what candidates saw at the time of sourcing.

If AI tools are ranking candidates based on developer community activities, you must conduct a Data Protection Impact Assessment (DPIA) for high-risk workflows. Under the EU AI Act, recruitment-related AI is considered "high-risk", requiring detailed logging and oversight. Keep in mind that by August 2, 2026, you’ll need full documentation and transparency to comply with the EU AI Act.

When working with third-party tools for sourcing or screening, ensure your vendor contracts include the right to audit, strict limits on data usage (e.g., no training their models with your data without permission), and clear deletion agreements. Vendors should also hold SOC 2 Type II certification to meet baseline security and privacy standards.

AI in Hiring: New Regulations for 2026

AI regulations in hiring are tightening, emphasizing that every automated decision must be clear, reviewable, and justifiable. If you're using AI tools to screen resumes, rank candidates, or evaluate coding tests, you're navigating a rapidly changing legal landscape. The EU, along with US jurisdictions such as Colorado, now treats most recruitment AI as high-risk, requiring transparency, bias testing, and human oversight. These rules align with earlier discussions on handling candidate data, reinforcing the need for careful monitoring throughout the hiring process.

EU AI Act and Automated Screening Tools

Under Annex III of the EU AI Act, any AI system used for hiring - such as automated screening or ranking - is considered high-risk. These regulations, enforceable starting August 2, 2026, will apply to US-based companies if their AI outputs are used within the EU, even when screening candidates across borders.

Here’s what high-risk classification means for your AI-driven hiring tools:

- Risk Management: You must establish a risk management process for the AI’s lifecycle.

- Data Governance: Training and testing datasets need to be checked for biases and meet standards for diversity and accuracy.

- Documentation and Logging: Maintain detailed records and automated logs to support audits.

- Human Oversight: Ensure systems allow recruiters to intervene, understand AI limitations, and override decisions when necessary.

The penalties for non-compliance are steep. Using banned AI practices - like emotion recognition in hiring or categorizing applicants based on sensitive traits such as race or political views - can lead to fines of up to €35,000,000 or 7% of global annual revenue, whichever is higher. Violating high-risk requirements can result in fines of up to €15,000,000 or 3% of global annual revenue.

Practical Tip: Don’t let AI be the sole decision-maker. Always have a recruiter or hiring manager review automated decisions, documenting the reasoning, such as "Advanced due to Kubernetes expertise" or "Not selected due to lack of distributed systems experience".
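A decision record that cannot be created without a named reviewer and a rationale is one way to enforce this tip in software. The `ReviewedDecision` class and its fields below are hypothetical, a sketch of the idea rather than a feature of any particular ATS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedDecision:
    candidate_id: str
    ai_recommendation: str  # e.g. "advance" or "reject"
    final_decision: str     # set by the human reviewer
    reviewer: str
    rationale: str

    def __post_init__(self) -> None:
        # Refuse to record a decision that lacks human accountability.
        if not self.reviewer.strip():
            raise ValueError("a named human reviewer is required")
        if not self.rationale.strip():
            raise ValueError("a written, job-related rationale is required")

    @property
    def is_override(self) -> bool:
        """True when the reviewer departed from the AI recommendation."""
        return self.final_decision != self.ai_recommendation


decision = ReviewedDecision(
    candidate_id="c-1042",  # hypothetical identifier
    ai_recommendation="reject",
    final_decision="advance",
    reviewer="j.doe",
    rationale="Advanced due to Kubernetes expertise",
)
print(decision.is_override)
```

Tracking `is_override` over time also shows whether reviewers are exercising real judgment or simply confirming every recommendation.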

US AI Laws: NYC Local Law 144 and Illinois AIVIA

While the EU sets a comprehensive framework, US regulations introduce their own unique requirements, adding complexity for recruiters. For instance, NYC Local Law 144 mandates annual bias audits for Automated Employment Decision Tools (AEDTs) and requires public disclosure of the results. This applies to tools that "substantially assist or replace" human decision-making for roles in New York City.

The Illinois Artificial Intelligence Video Interview Act (AIVIA) regulates AI-driven video interview analysis. Recruiters must disclose how the AI works, get explicit consent from candidates, and delete video records within 30 days upon request.

Colorado SB 24-205, effective June 30, 2026, requires recruiters to take "reasonable care" to prevent algorithmic discrimination in critical decisions like hiring. This includes conducting impact assessments and implementing measures to avoid biased results.

| Regulation | Key Requirement for Recruiters | Effective Date |
|---|---|---|
| EU AI Act | High-risk compliance (logging, oversight, data governance) | August 2, 2026 |
| NYC Local Law 144 | Annual independent bias audits with public results | Currently Enforced |
| Illinois AIVIA | Consent and explanation for AI video interview analysis | Currently Enforced |
| Colorado SB 24-205 | Duty of care to prevent algorithmic bias | June 30, 2026 |

"Compliance is an operating model, not a checkbox." – Ameya Deshmukh, EverWorker

Practical Tips:

- Perform annual bias audits using the "four-fifths rule" to evaluate selection rate disparities across protected groups.

- Update application platforms to include clear AI disclosures for candidates in NYC, Illinois, and the EU.

These steps should integrate into your broader compliance strategy for technical hiring. Utilizing a data-driven recruitment checklist can help ensure these regulatory requirements are met consistently.

Vendor accountability is also crucial. Request "model cards" or bias reports from AI vendors, and ensure contracts include audit rights and strict data deletion timelines. Remember, under US anti-discrimination laws, you’re responsible for any adverse impact caused by automated tools, even if a vendor supplies them.

The upcoming Mobley v. Workday case (March 2026) will examine vendor liability for discriminatory outcomes. This class-action lawsuit involves claims that AI screening tools excluded candidates unfairly, potentially impacting millions of applicants. It highlights why AI compliance should be treated as an ongoing process, not a one-time task.

Pay Transparency Laws and Developer Hiring

Pay transparency laws are changing the way developer job postings are written. Starting January 1, 2026, 22 states will have new minimum wage requirements in place. On top of that, many jurisdictions now require employers to disclose wage ranges in job postings or provide them when candidates ask. For technical recruiters, this means every job listing for developers needs a thorough review to avoid legal trouble. This becomes even more important when hiring remote developers.

Remote roles add another layer of complexity. Compliance depends on where the developer is physically working - not where your company is based. For instance, a remote backend engineer role advertised nationwide might need to comply with California’s wage disclosure rules, New York’s county-specific requirements, and Massachusetts’ new law for employers with 25+ employees (effective October 2025). A common mistake recruiters make is basing pay on the company’s location instead of the developer’s work location, which can lead to penalties for unpaid wages.

"Compliance and managing risk are always bigger considerations than simply what the law requires. Having multiple laws with different requirements that apply doesn't always mean creating a separate practice for each law." – Meg Ferrero, Vice President and Assistant General Counsel, ADP

The most efficient way to handle this is to use the strictest standard across all job postings. In practice, this means including salary ranges in every developer job listing to ensure compliance across different jurisdictions.
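Applying the strictest standard everywhere also makes the check easy to automate, because it no longer depends on location. The sketch below assumes postings are dictionaries with hypothetical `id`, `salary_min`, and `salary_max` fields; it only verifies that a usable range is present, not that the range satisfies any particular state's rules.

```python
from typing import List


def has_valid_pay_range(posting: dict) -> bool:
    """True when the posting carries a numeric, non-empty salary range."""
    low = posting.get("salary_min")
    high = posting.get("salary_max")
    if not isinstance(low, (int, float)) or not isinstance(high, (int, float)):
        return False
    return 0 < low <= high


def postings_missing_range(postings: List[dict]) -> List[str]:
    """IDs of postings that should be blocked from publishing."""
    return [p["id"] for p in postings if not has_valid_pay_range(p)]


# Hypothetical postings pulled from a job board export.
drafts = [
    {"id": "backend-1", "salary_min": 140000, "salary_max": 175000},
    {"id": "sre-2", "salary_min": None, "salary_max": 160000},
    {"id": "ml-3"},
]
print(postings_missing_range(drafts))
```

Running a check like this before publishing catches missing ranges at the point where they are cheapest to fix.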

State Salary Posting Requirements

Let’s break down some specific state mandates. In Massachusetts, starting October 2025, employers with 25 or more employees must include pay ranges in job postings. States like California, Colorado, New York, and Washington have similar rules, each with its own thresholds and penalties. Delaware adds another layer by requiring employers to keep salary records for at least three years starting in 2027. Looking beyond the U.S., the EU Pay Transparency Directive, effective June 2026, will extend these requirements to multinational tech companies.

To stay compliant, it’s crucial to monitor remote work locations and maintain thorough compensation records. Regular audits of job postings and payroll documentation can ensure wage ranges meet state-specific thresholds. It’s also worth noting that meeting a salary threshold doesn’t automatically mean a role is exempt from overtime rules - job duties play a key role in classification. Misclassifying roles can lead to penalties and additional compliance headaches.

For recruiters managing hiring across multiple states, standardizing job postings with salary ranges and tracking remote work locations is a smart move. This proactive approach not only ensures compliance but also builds trust with candidates. By including salary ranges in all postings, you align with state laws while also supporting broader goals like data privacy and reducing hiring bias.

Technical Hiring Compliance Audit Checklist

Running a compliance audit might sound daunting, but it’s all about spotting issues before they escalate into penalties or lawsuits. With New York City actively auditing, Illinois candidates gaining the right to sue directly starting January 1, 2026, and the EU AI Act taking full effect by August 2, 2026, the pressure to stay compliant is greater than ever. A structured audit can help you identify risks early and build a hiring process that stands up to scrutiny. This checklist is designed to ensure that the regulations discussed are fully integrated into your hiring workflow.

How to Audit Your Hiring Process

This checklist offers practical steps to review and improve your hiring process, addressing the regulatory hurdles mentioned earlier.

Start by cataloging every tool in your hiring stack. Take stock of all the tools you use, noting the data they collect, the decisions they influence, and how they’re applied across different candidate locations. This step is crucial because what works for a California-based role might violate NYC Local Law 144 if you’re hiring in New York City. Also, review data retention policies and workflows for handling deletion requests, as outlined in your candidate data handling guidelines.

Then, match specific regulations (like NYC Local Law 144, GDPR Article 22, and the Illinois AI Video Interview Act) to each step in your hiring process. For instance, if you’re using AI to screen resumes for backend engineering roles in NYC, you’ll need an annual independent bias audit, and you must publish a summary of that audit on your website. Non-compliance could cost you $500 to $1,500 per day, per violation.
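The inventory-and-match step can be kept as a small lookup so it is repeatable each time a tool or hiring location changes. The mapping below is a deliberately simplified assumption built from the regulations named in this article: the region codes, feature labels, and trigger conditions are hypothetical, and real applicability depends on details that only legal review can settle.

```python
from typing import List

# (regulation, candidate region, tool feature that triggers it) - simplified assumptions.
REGULATIONS = [
    ("NYC Local Law 144", "NYC", "automated_ranking"),
    ("Illinois AIVIA", "IL", "video_analysis"),
    ("Colorado SB 24-205", "CO", "automated_ranking"),
    ("EU AI Act", "EU", "automated_ranking"),
    ("GDPR Article 22", "EU", "automated_ranking"),
]


def applicable_regulations(tool: dict) -> List[str]:
    """Regulations to review for a tool, given where candidates are and what it does."""
    return [
        name
        for name, region, feature in REGULATIONS
        if region in tool["candidate_regions"] and feature in tool["features"]
    ]


# Hypothetical entry from a hiring-stack inventory.
resume_screener = {
    "name": "resume-screener",
    "candidate_regions": {"NYC", "EU"},
    "features": {"automated_ranking"},
}
print(applicable_regulations(resume_screener))
```

The output is a review list, not a compliance verdict: each regulation it names still needs its own requirements checked against the tool.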

Run adverse impact tests at every major decision point - screening, interview invitations, and offers. Use the four-fifths rule: if a protected group’s selection rate falls below 80% of the rate for the group with the highest selection rate, you may have a compliance issue that requires further investigation. For high-volume technical roles, consider moving from annual testing to monthly or quarterly reviews to catch issues sooner. Also, review vendor contracts to ensure third-party platforms provide bias audit support, model documentation, and clear timelines for data deletion.
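
The four-fifths check described above comes down to simple arithmetic, sketched here in Python. The group labels and counts are made up for illustration, and `four_fifths_check` is a hypothetical helper name; a flag from this check is a prompt for further investigation, not a legal conclusion.

```python
def four_fifths_check(outcomes, threshold=0.8):
    """Flag groups whose selection rate is below `threshold` of the top rate.

    `outcomes` maps a group label to a (selected, applicants) tuple.
    """
    rates = {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0  # skip groups with no applicants at this stage
    }
    if not rates:
        return {}

    top_rate = max(rates.values())
    report = {}
    for group, rate in rates.items():
        # The impact ratio is undefined if nobody was selected at all.
        ratio = rate / top_rate if top_rate > 0 else None
        report[group] = {
            "selection_rate": rate,
            "impact_ratio": ratio,
            "flagged": ratio is not None and ratio < threshold,
        }
    return report


# Hypothetical counts at the resume-screening stage.
screening = {
    "group_a": (48, 120),  # 40% selected
    "group_b": (27, 90),   # 30% selected
    "group_c": (35, 100),  # 35% selected
}

report = four_fifths_check(screening)
for group, row in report.items():
    print(group, round(row["impact_ratio"], 3), row["flagged"])
```

In this example group_b is selected at 30% against a top rate of 40%, an impact ratio of 0.75, so it falls below the 0.8 threshold and is flagged. Running the same function separately for screening, interview invitations, and offers shows where in the funnel a gap appears.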

Lastly, set up human oversight checkpoints. No candidate should be rejected solely by an automated system. Define decision points where trained recruiters or hiring managers review AI-generated recommendations and have the authority to override them. Make sure your job postings include required AI usage disclosures, and confirm that you can process data deletion requests for Illinois candidates within 30 days. Centralize all audit documentation - bias reports, consent logs, evaluation rubrics, and deletion confirmations - within your ATS for easy access and review. This documentation will serve as evidence of compliance with regulatory standards.
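
To confirm that deletion requests are actually handled inside the 30-day window, a simple deadline tracker can help. This is a minimal sketch: it assumes the window is counted in calendar days from the date the request is received, and the request records and field names are hypothetical.

```python
from datetime import date, timedelta

DELETION_WINDOW_DAYS = 30  # assumption: calendar days from receipt


def deletion_due_date(received):
    """Date by which a deletion request should be completed."""
    return received + timedelta(days=DELETION_WINDOW_DAYS)


def overdue_requests(requests, today):
    """Return candidate IDs with open requests past their due date."""
    return [
        req["candidate_id"]
        for req in requests
        if req["completed"] is None and today > deletion_due_date(req["received"])
    ]


# Hypothetical deletion-request log.
requests = [
    {"candidate_id": "c-101", "received": date(2026, 1, 5), "completed": None},
    {"candidate_id": "c-102", "received": date(2026, 1, 5), "completed": date(2026, 1, 20)},
    {"candidate_id": "c-103", "received": date(2026, 2, 1), "completed": None},
]

print(overdue_requests(requests, today=date(2026, 2, 10)))
```

A report like this can be stored alongside deletion confirmations so the audit trail shows both the requests received and how quickly each was closed.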

"Compliance is an operating model, not a checkbox." – Ameya Deshmukh, EverWorker

| Audit Phase | Key Actions | Compliance Target |
|---|---|---|
| Inventory | Map tools, data inputs, and decision points | Transparency & Risk Mapping |
| Fairness | Conduct 4/5 rule analysis; validate criteria | EEOC & Title VII Compliance |
| Transparency | Update job descriptions with AI notices; log consent | NYC AEDT & Illinois AIVIA |
| Data Privacy | Define retention policies; automate deletions | GDPR & CCPA |
| Governance | Document human overrides and decision codes | EU AI Act & NIST RMF |

Use these audit findings to continuously refine your hiring processes and avoid compliance pitfalls.

How daily.dev Recruiter Handles Compliance

Most recruiting platforms bury compliance details in lengthy privacy policies, making them hard to understand. daily.dev Recruiter takes a different route by embedding compliance directly into its process. It uses a double opt-in system designed to align with major regulations like GDPR, CCPA, NYC Local Law 144, and the EU AI Act. This approach creates a solid foundation for managing consent effectively.

Double Opt-In Introductions and Candidate Consent

Here’s how the double opt-in process works: Developers must actively opt in and confirm their participation before recruiters can reach out. This two-step process not only builds trust but also supports compliance with GDPR Article 6, which requires a lawful basis for data processing. It also addresses concerns about automated decision-making under GDPR Article 22 and the EU AI Act.
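
To make the two-step idea concrete, here is a generic sketch of a double opt-in consent record. It is not daily.dev Recruiter's implementation: the `ConsentRecord` class, its states, and its method names are all hypothetical, chosen only to show that outreach is blocked until both steps have happened.

```python
from datetime import datetime, timezone
from enum import Enum


class ConsentState(Enum):
    NONE = "none"
    OPTED_IN = "opted_in"    # step 1: candidate opts in
    CONFIRMED = "confirmed"  # step 2: candidate confirms participation


class ConsentRecord:
    def __init__(self, candidate_id):
        self.candidate_id = candidate_id
        self.state = ConsentState.NONE
        self.log = []  # (state, UTC timestamp) pairs kept as a consent log

    def _advance(self, new_state):
        self.state = new_state
        self.log.append((new_state.value, datetime.now(timezone.utc).isoformat()))

    def opt_in(self):
        if self.state is not ConsentState.NONE:
            raise ValueError("opt_in() is only valid before any consent is given")
        self._advance(ConsentState.OPTED_IN)

    def confirm(self):
        if self.state is not ConsentState.OPTED_IN:
            raise ValueError("confirm() requires a prior opt_in()")
        self._advance(ConsentState.CONFIRMED)

    def withdraw(self):
        # Withdrawal is allowed at any point and resets the record.
        self._advance(ConsentState.NONE)

    def can_contact(self):
        """Recruiters may reach out only after both steps are complete."""
        return self.state is ConsentState.CONFIRMED


record = ConsentRecord("dev-42")
record.opt_in()
print(record.can_contact())  # still blocked after the first step
record.confirm()
print(record.can_contact())  # allowed once both steps are done
```

Keeping a timestamped log of each transition gives you the consent evidence an audit would ask for, and the withdrawal path resets contact permission immediately.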

Since participation is entirely voluntary, the platform collects data only from candidates who explicitly agree. This aligns with the principle of data minimization, ensuring only necessary information is gathered. By weaving compliance into its workflow, daily.dev Recruiter tackles common challenges in tech hiring, particularly around candidate privacy. This setup also makes it easier to handle deletion requests under GDPR and CCPA while meeting specific laws like the Illinois AI Video Interview Act. The double opt-in system doesn’t just meet legal requirements - it also streamlines hiring by filtering out candidates who aren’t interested.

The framework also meets transparency standards required by NYC Local Law 144 and CCPA. It provides clear notifications about how data will be used and explains AI-assisted matching before candidates give their consent. This ensures developers are fully informed about data processing and guarantees recruiters engage only with those who have opted in.

Conclusion: Staying Compliant in 2026

Technical recruiting compliance isn’t just about ticking boxes - it’s the backbone of effective operations. With regulations like the EU AI Act (categorizing most hiring AI as "high-risk"), NYC Local Law 144 (requiring annual bias audits), Colorado SB 24‑205 (demanding "reasonable care" to prevent algorithmic discrimination), and Illinois AIVIA (mandating deletion of video interview data within 30 days upon a candidate's request), organizations face a complex regulatory landscape that calls for proactive management. Companies poised for success in 2026 will approach AI not as a fleeting tech trend but as a critical governance issue.

There’s no room for ignorance anymore - every automated decision must hold up to scrutiny. AI-driven selection criteria must be clearly job-related, and human reviewers need the authority to challenge and override AI recommendations instead of simply approving them. Keeping detailed audit trails - covering model versions, data sources, prompts, and human interventions - is essential for accountability.
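
An audit trail of this kind can be as simple as one structured record per decision. The sketch below is an illustration under stated assumptions: `DecisionRecord` and its field names are hypothetical, and the example values are invented.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class DecisionRecord:
    """One auditable hiring decision (field names are illustrative)."""
    candidate_id: str
    stage: str
    model_version: str
    data_sources: list
    prompt: str
    ai_recommendation: str
    reviewer: str
    final_decision: str

    @property
    def overridden(self):
        # True when the human reviewer did not follow the AI recommendation.
        return self.final_decision != self.ai_recommendation

    def to_json_line(self):
        """Serialize to one JSON line, suitable for an append-only log."""
        payload = asdict(self)
        payload["overridden"] = self.overridden
        return json.dumps(payload, sort_keys=True)


record = DecisionRecord(
    candidate_id="c-204",
    stage="screening",
    model_version="screener-v3",  # hypothetical version label
    data_sources=["resume", "coding test"],
    prompt="Score against the backend engineer rubric",
    ai_recommendation="reject",
    reviewer="recruiter-17",
    final_decision="advance",
)

print(record.to_json_line())
```

Recording the AI recommendation and the final human decision side by side makes overrides visible, which is one way to show that reviewers are doing more than approving what the system suggests.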

"Compliance isn't a tax on innovation - it's how you make AI recruiting scalable, fair, and trusted."

– Ameya Deshmukh

To meet these rigorous standards, organizations must build systems ready for scrutiny from the start. Moving from generic keyword-based scans to skills-focused rubrics, providing clear transparency notices about AI usage, and implementing continuous monitoring to catch issues like model drift early are more than just legal necessities - they also strengthen trust with candidates and enhance your employer brand.

Beyond simply adhering to the law, this approach offers a strategic edge. By treating compliance as the groundwork for equitable and consistent hiring, rather than as a limitation, companies can position themselves for long-term success. While conversations around DEI and AI continue to evolve, one thing remains constant: the importance of mitigating liability. Document your processes thoroughly, adopt the highest standards across jurisdictions, and embed fairness into every step of your hiring workflow.

FAQs

Do I need bias audits if my ATS vendor provides the AI?

Absolutely. Even when your ATS vendor supplies the AI, bias audits remain essential. Regulations such as NYC Local Law 144 place the audit and disclosure obligations on the employer or employment agency using the tool, so relying on a vendor does not remove your responsibility to confirm that a current independent audit exists and that its summary is published. By making sure these audits happen, you not only stay compliant with legal requirements but also help minimize the risk of discrimination in automated hiring systems.

What hiring data should I avoid collecting from GitHub and coding tests?

When gathering data from sources like GitHub or coding tests, steer clear of anything that includes malicious code, obfuscated scripts, suspicious hooks, or compiled binaries. These elements can introduce serious security risks and may infringe on candidate privacy standards. Always ensure that any information you collect aligns with established privacy and safety regulations, safeguarding both your organization and the candidates involved.

How do I stay pay-transparency compliant for remote developer roles?

When hiring remote developers, it's crucial to include clear salary ranges in job postings to meet pay-transparency laws. These laws vary by state, so make sure you're familiar with the specific requirements in every location where your remote employees might work.

To stay on top of compliance, regularly review pay regulations across all applicable regions. Always adhere to the highest wage standards based on the employee's work location, ensuring fairness and alignment with legal obligations.
