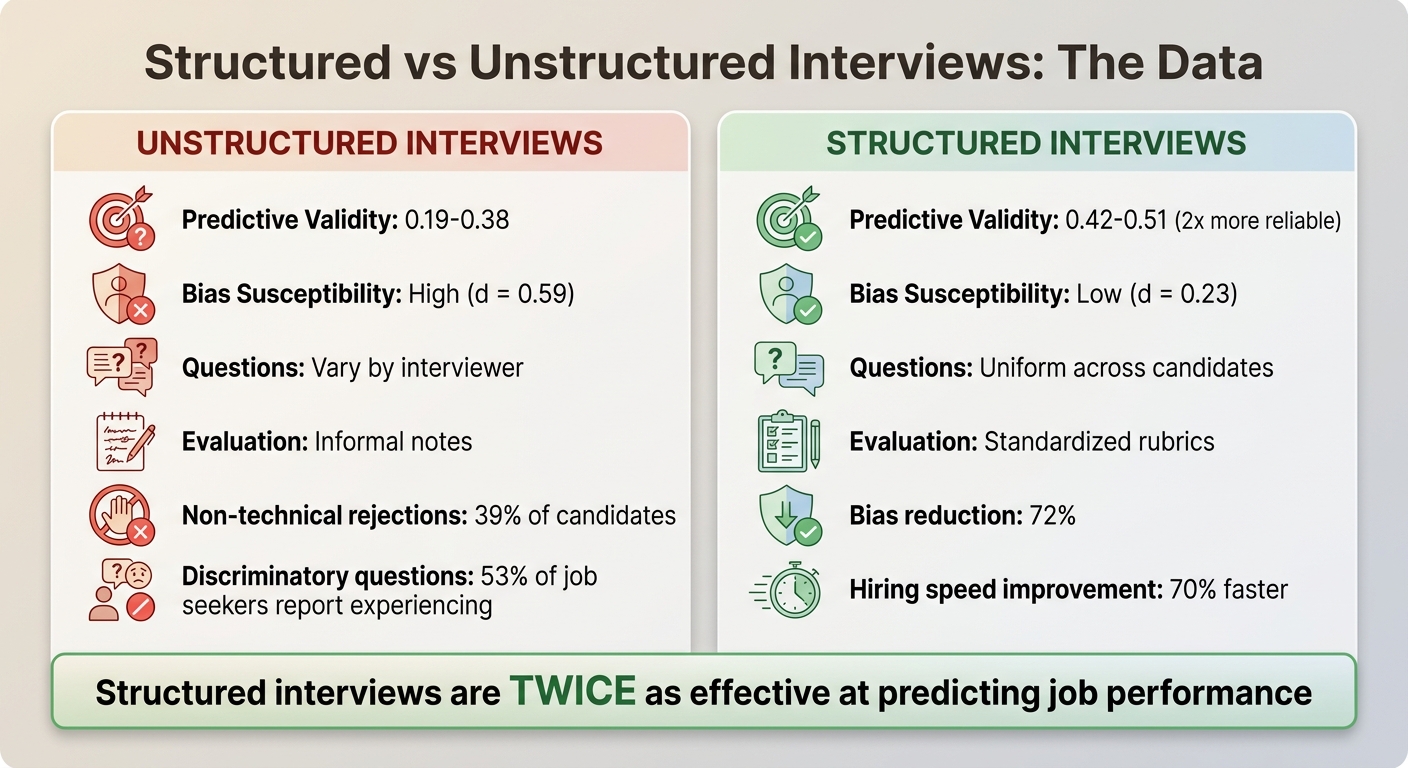

Unstructured interviews lead to inconsistent hiring decisions, higher bias, and costly mistakes. A single bad hire can cost up to $240,000, and unstructured interviews have poor predictive validity (0.19–0.38). In contrast, structured interviews, using scorecards, are twice as effective at predicting job performance and reduce hiring bias by 72%. Here's how scorecards help:

- Clear Criteria: Predefined, job-specific skills (e.g., system design, communication) ensure consistent evaluations.

- Rating Scales: Defined scoring (e.g., 1–5) with behavioral examples eliminates guesswork.

- Evidence-Based Ratings: Interviewers document observations to justify scores, avoiding gut-based decisions.

- Calibration: Regular training aligns interviewers on standards, reducing discrepancies.

- Fairness in Culture Evaluation: Focus on ["culture add" (new perspectives) over vague "culture fit"] (https://recruiter.daily.dev/resources/cultural-fit-engineering-teams-assess-properly/).

Scorecards save time, improve hiring accuracy, and build candidate trust by ensuring a transparent, merit-based process. Companies using structured interviews report 70% faster hiring times and better offer acceptance rates. The cost savings and improved outcomes make scorecards a smart choice for any hiring team.

Why Unstructured Tech Interviews Produce Inconsistent Results

::: @figure  {Structured vs Unstructured Interviews: Performance Comparison}

{Structured vs Unstructured Interviews: Performance Comparison}

The Problem with Gut-Feel Decisions

When interviews lack structure, decisions often hinge on gut feelings rather than measurable criteria. This happens because interviewers make quick judgments and then unconsciously look for evidence to back their initial impressions - this is confirmation bias at work . Instead of focusing on assessing technical skills without coding tests, the process shifts toward validating a subjective first impression.

Another issue is how information is captured during these interviews. Some interviewers might jot down detailed notes, while others scribble vague comments like "seems like a good cultural fit" . Without clear, standardized criteria, terms like "communication skills" or "technical depth" can mean wildly different things depending on who’s evaluating, leading to inconsistent assessments across the hiring team .

"Research shows that during first encounters we make snap, unconscious judgments heavily influenced by our existing unconscious biases and beliefs. For example, in an interview context, we shift from assessing the complexities of a candidate's technical and soft skills to hunting for evidence that confirms our initial impression." - re:Work Editorial Team, Google

A real-world example highlights the cost of this approach. In late 2024, a KORE1 client in San Diego conducted 14 unstructured interviews for a senior backend role across five candidates. Each interviewer used their own preferred questions, and two candidates were rejected for "lacking energy." The person they eventually hired quit after just 41 days, citing a misrepresentation of the role. The failed process cost the company roughly $67,000 in recruiter time, lost productivity, and unrecovered bonuses .

This kind of subjectivity exposes unstructured interviews to biases that undermine their effectiveness.

Research Data on Interview Bias

The data paints a clear picture: unstructured interviews perform poorly when it comes to predicting job success. Their predictive validity ranges from 0.19 to 0.38, barely better than chance . In contrast, structured interviews score 0.42 to 0.51, making them more than twice as reliable . Unstructured formats are also far more prone to bias, with a bias susceptibility score of d = 0.59, compared to just d = 0.23 for structured methods .

The practical impact is significant. For instance, 39% of candidates are rejected for non-technical reasons like confidence or tone . On top of that, 53% of job seekers report being asked discriminatory, irrelevant, or inappropriate questions during unstructured interviews . A striking example involved a tech company in Irvine that repeatedly rejected a female backend engineer for being "quiet" and "uncomfortable on camera", labeling her as a poor "culture fit." When the company adopted a structured scoring system, she received a 3.8 out of 4.0 across technical competencies, was hired, and became one of the team’s top performers within 11 months .

| Dimension | Unstructured Interview | Structured Interview |

|---|---|---|

| Predictive Validity | 0.19 to 0.38 | 0.42 to 0.51 |

| Bias Susceptibility | High (d = 0.59) | Low (d = 0.23) |

| Questions | Vary by interviewer | Uniform across candidates |

| Evaluation | Informal notes | Standardized rubrics |

"Structured interviews are one of the best tools we have to identify the strongest job candidates. Not only that, they avoid the pitfalls of some of the other common methods." - Dr. Melissa Harrell, Former Hiring Effectiveness Expert, Google People Analytics

These findings highlight the importance of structured interviews in minimizing bias and improving hiring outcomes.

Anatomy of an Effective Technical Interview Scorecard

A technical interview scorecard acts like a grading tool. Instead of relying on memory or gut feelings, interviewers use it to evaluate candidates based on predefined skills tied to the job requirements . This method turns subjective opinions into clear, measurable data, ensuring every candidate is judged by the same standard. By using this structured approach, companies can reduce bias in technical hiring and eliminate inconsistencies that often plague unstructured interviews.

The best scorecards include three key elements: job-specific evaluation criteria that focus only on what’s relevant for the position, rating levels with clear behavioral examples to explain each score, and evidence fields where interviewers document observations to back up their ratings. Together, these pieces create a system that improves accuracy and fairness in hiring decisions.

Defining Job-Specific Evaluation Criteria

To start, choose 5–7 core skills or competencies based on the job’s actual needs . For example, a backend engineer’s scorecard might include:

- Technical skills: Proficiency with algorithms, system design, and architecture tradeoffs.

- Problem-solving: Tackling ambiguous challenges and debugging effectively.

- Code quality: Testing strategies and familiarity with CI/CD practices.

- Communication: Clear explanations and strong documentation skills.

- Domain knowledge: Understanding of the relevant tech stack .

Be specific about outcomes. Instead of listing a vague skill like "performance optimization", define it as "ability to reduce page load time by 25%" .

To focus on what matters most, use weighted scoring. Assign percentages to each competency based on its importance. For instance, technical skills might count for 40%, problem-solving for 25%, code quality for 15%, and communication and domain knowledge for 10% each . This ensures that weaknesses in less critical areas don’t overshadow strengths in key ones. Stick to 6–12 competencies total - anything more becomes too complex and less effective .

Creating Rating Levels with Behavioral Anchors

Standardized rating levels help ensure consistent evaluations. A 1–5 scale works well, but it’s important to define what each score means with specific examples. For instance, for system design:

- 1 (Insufficient): Offers high-level ideas but skips tradeoffs or scalability.

- 3 (Meets Expectations): Structures a design, identifies key components, and discusses tradeoffs with latency estimates.

- 5 (Exceeds Expectations): Proposes a scalable design, quantifies bottlenecks, and suggests ways to address them .

"A clear rubric reduces variance in ratings and helps different interviewers evaluate the same behavior similarly." - ZYTHR

Focus on actions and results, not impressions. For example, instead of saying a candidate "seemed like a team player", evaluate their ability to communicate effectively or collaborate during problem-solving . When assessing coding skills, distinguish between basic competency (e.g., writing functional code with minimal errors) and advanced skills like anticipating edge cases and scalability . This avoids inconsistencies, where one interviewer’s "4" might be another’s "3" for the same performance .

| Competency | 1 (Insufficient) | 3 (Meets Expectations) | 5 (Exceeds Expectations) |

|---|---|---|---|

| System Design | Provides high-level ideas without tradeoffs or scaling considerations | Structures a design, identifies major components, and notes tradeoffs with latency estimates | Delivers a scalable design, quantifies bottlenecks, and proposes mitigation strategies |

| Communication | Answers are disorganized and lack clarity | Communicates ideas clearly and summarizes decisions concisely | Adapts explanation to audience and anticipates stakeholder questions |

| Problem Solving | Requires heavy prompting; misses the root cause of the issue | Breaks problem into steps; proposes and validates reasonable hypotheses | Rapidly narrows root cause; proposes effective solution with testing plan and contingencies |

Adding Evidence Fields to Support Each Score

While numerical ratings show how a candidate performed, evidence fields explain why. These fields, typically 3–5 blank lines under each competency, allow interviewers to record specific observations .

Taking notes during or right after the interview prevents memory loss and ensures detailed, accurate records. Independent completion of scorecards before group discussions also preserves diverse perspectives and avoids groupthink .

"If interviewers can skip notes, you lose the context needed to adjudicate differing scores." - Titus Juenemann, ZYTHR

Train interviewers to use STAR-based behavioral questions for concise, clear evidence . For example, instead of writing "good problem solver", they might note: "When asked about debugging a production issue (Situation), candidate explained their systematic approach to isolating the database query causing timeouts (Task), implemented connection pooling (Action), and reduced response time from 8 seconds to 200ms (Result)."

Require evidence for extreme scores. This rule helps prevent unsupported ratings and forces interviewers to justify their assessments. During debriefs, these notes allow teams to resolve discrepancies by reviewing specific examples rather than debating opinions. They also create a defensible record of the decision-making process, which is critical for legal compliance and transparency.

Once you’ve built a strong scorecard, the next step is training interviewers to use it effectively.

Calibrating Interviewers to Use the Same Scoring Framework

To ensure fairness and consistency across technical vs non-technical hiring approaches, it's essential to calibrate interviewers so they apply the same scoring framework. Without proper calibration, scorecards lose their value - what one interviewer considers a "3", another might see as a "5." This inconsistency undermines the hiring process. The solution? Train your team to interpret and apply scorecards consistently through structured exercises and regular alignment sessions.

"Calibration does not imply that every interviewer must assign the same score to an applicant. Instead, it guarantees that when discrepancies arise, they represent meaningful variations in viewpoint, rather than misalignment with the role's requirements or uncertainty regarding scoring." - Elena Bejan, People Culture and Development Director, Index.dev

Running Mock Interview Sessions

Mock interviews are a great way to help your team practice using the scorecard before they evaluate actual candidates. In these sessions, team members role-play: one acts as the candidate, while others conduct the interview and independently complete scorecards. Afterward, compare the scores to identify where interpretations differ. For instance, if one interviewer rates a candidate's explanation much higher than another, discuss what specific behaviors led to the differing scores and address any unclear criteria .

For new interviewers, shadowing experienced team members is key. During their first few sessions, they should observe how seasoned interviewers ask questions and document evidence. Afterward, conduct "side-by-side" scoring for their first three interviews. In this setup, the new interviewer and a peer independently score the same candidate, then immediately compare their results. This hands-on approach builds confidence and ensures early misinterpretations are corrected before they impact hiring decisions .

Holding Norming Sessions

Norming sessions - often called calibration sessions - are regular meetings where interviewers align on scoring standards. These sessions involve reviewing and scoring the same recorded or anonymized candidate interview, followed by a group discussion to address any discrepancies. Aim to hold these sessions monthly, dedicating 30–45 minutes each time to prevent "rating drift" as team standards evolve .

Start by having everyone silently review a recorded interview or submission, score it using the scorecard, and submit their ratings. Silent scoring avoids groupthink and ensures each evaluation reflects individual judgment . Once scores are submitted, the group can discuss discrepancies, focusing on evidence rather than opinions. For example, if two scores differ significantly, interviewers should cite specific examples from the candidate's performance to explain their ratings. This process helps clarify whether the differences stem from genuine observations or a misunderstanding of the rubric. If the latter is the case, update the scorecard's behavioral anchors to eliminate ambiguity .

To further align your team, build a benchmark library of past interview examples that represent "clear pass", "borderline", and "no hire" cases for each role . These examples serve as a reference point during norming sessions, helping interviewers understand what each rating level looks like in practice. Additionally, track which interviewers consistently score higher or lower than the group average and pair them with top evaluators for coaching. This ongoing process ensures your team stays aligned and maintains a consistent hiring bar .

Separating Culture Fit from Culture Add in Scorecards

When evaluating candidates, it’s essential to measure cultural contributions as carefully as technical skills. Without clear guidelines, assessments can fall prey to bias. The term "culture fit" often seems harmless but can quickly shift into a shallow test of social compatibility. As Devonaire Ortiz from Cockroach Labs explains:

"What most people really mean when they say someone is a good fit culturally is that he or she is someone they'd like to have a beer with… [but] very often the people we enjoy hanging out with have backgrounds much like our own." - Devonaire Ortiz, Cockroach Labs

This is where culture add comes in. Instead of seeking someone who blends seamlessly into the existing team, culture add focuses on what new perspectives, skills, or experiences a candidate can bring to enhance the team. Cockroach Labs defines this as "any quality which would bring new or culturally beneficial experiences to our team" . It’s a shift in mindset: rather than asking if someone mirrors the current team, the question becomes how they can strengthen it.

What Culture Fit and Culture Add Mean

The concept of culture fit should be tied to alignment with a company’s core values - principles like integrity, teamwork, or customer focus. It’s not about finding someone similar to the current team but about weeding out red flags. On the other hand, culture add prioritizes diversity in thought and experience. For instance, if your team thrives on synchronous communication, a candidate skilled in asynchronous collaboration could bring a fresh and valuable perspective .

Ambiguously defined culture fit criteria often lead to inconsistent evaluations. One interviewer’s "great fit" might be another’s "not quite right", and these judgments often reflect personal biases rather than job-related criteria . To avoid this, scorecards should include operational definitions - specific, observable behaviors that leave no room for subjective interpretations. This objectivity is a cornerstone of ethical tech recruiting, which prioritizes fairness and respect for all candidates.

Once these definitions are in place, the next step is to incorporate objective, evidence-based prompts into the scorecard.

Using Bias-Check Prompts in Your Scorecard

Bias-check prompts help ensure evaluations are based on observable actions rather than gut feelings. Replace vague culture fit questions with targeted prompts like: "What specific skill or experience does this candidate have that is currently underrepresented on our team?" or "Did the candidate provide a clear example of resolving a conflict in a way that aligns with our values?" . These kinds of questions steer the focus toward measurable behaviors.

Additionally, include a mandatory evidence field in the scorecard. Interviewers should document specific moments from the interview to justify their culture add score. For example, if mentorship is a key culture add criterion, an interviewer might note:

"Candidate described leading a weekly code review session for junior developers and setting up a Slack channel for Q&A."

If an interviewer can’t provide this level of detail, their score might be rooted in bias rather than evidence.

Finally, treat culture add as a bonus to a candidate’s profile, not a dealbreaker . This approach prevents interviewers from using cultural factors to disqualify technically strong candidates who simply don’t align with personal preferences.

Common Scorecard Mistakes That Introduce Bias

Even with the most carefully designed scorecards, bias can creep in when interviewers rely on subjective judgments. Below are three common pitfalls - halo effect, pattern matching, and scoring without evidence - that can compromise the fairness and accuracy of structured interview processes.

The Halo Effect

This bias occurs when one standout trait overshadows other competencies, leading to inflated or skewed scores. For instance, a candidate who makes a great first impression with strong communication skills might receive higher ratings across unrelated areas, like technical problem-solving, even if the evidence doesn’t support it. Without clear behavioral benchmarks, this "halo" can distort evaluations, making it harder to accurately assess a candidate's overall abilities.

To avoid this, score each competency independently and back up every rating with specific, concrete examples. For instance, if you're evaluating problem-solving skills, look for actual evidence - like how the candidate approached a technical challenge - instead of letting unrelated strengths influence your judgment .

Pattern Matching

Pattern matching happens when interviewers favor candidates who remind them of themselves or align with the perceived traits of current top performers. This bias often sidelines candidates with different but equally valuable strengths. As Rachel Thomas, co-founder of fast.ai, explains:

"The types of programmers that each company looks for often have little to do with what the company needs or does. Rather, they reflect company culture and the backgrounds of the founders."

A clear example of this comes from Airbnb. In 2017, the company’s Data Science team increased the percentage of women from 15% to 30% by standardizing their evaluation process with developer assessment tools for take-home exercises. Before this, inconsistent criteria led to pattern matching that disproportionately excluded female candidates . Similarly, a Yale University study found that evaluators consistently favored male candidates - regardless of whether they had practical or academic credentials - until predefined criteria were introduced .

To reduce pattern matching, define job-specific competencies and behavioral anchors before reviewing candidates. Additionally, require interviewers to complete scorecards independently. Research shows that when interviewers see their peers’ scores, they are 3.6% more likely to match those ratings, which could impact decisions in hundreds of thousands of interviews each year .

Scoring Without Evidence

Failing to base scores on documented evidence is another common mistake. When scorecards are treated as an afterthought, interviewers risk relying on memory, which can lead to recency bias or rationalizing gut feelings .

To ensure fair and accurate ratings, scorecards should be completed in real-time or immediately after the interview - ideally within 30 minutes. Mandatory evidence for each competency, such as direct quotes, code snippets, or specific behavioral examples, helps maintain objectivity. This is especially important for extreme ratings (1 or 5), which require stronger justification .

Discrepancies in scoring should also prompt discussion. For example, if two interviewers rate the same criterion three or more points apart, they should review the recorded evidence together rather than simply averaging their scores . By grounding evaluations in clear, documented examples, interviewers can turn subjective impressions into fair, defensible decisions.

Using a developer hiring checklist can help ensure these steps are followed consistently. Next, we’ll explore scorecard templates designed to streamline developer interview evaluations.

Scorecard Templates for Different Role Types

When building a scorecard, tailoring it to the specific technical demands of the role is key. Using generic criteria across different positions can lead to ineffective evaluations. Instead, customizing scorecards for backend, frontend, data, or DevOps roles ensures you're focusing on the skills and knowledge that truly matter for success. These templates provide clear examples of how to evaluate candidates fairly and consistently while minimizing bias.

Backend Developer Scorecard Template

Backend roles emphasize system design, API design, and data modeling. A strong scorecard should assess how well candidates handle scalability, performance bottlenecks, and reliability under load. Common evaluation areas include:

- System Design (20%)

- API Design (15%)

- Data Modeling (15%)

- Performance Optimization and Testing/Reliability (20%)

For example, a 3 in System Design might indicate: "The candidate suggested a modular architecture with clear service boundaries but didn't address scaling beyond 10,000 concurrent users." A 5, on the other hand, could be: "The candidate proposed a horizontally scalable architecture with load balancing, caching strategies, and failover mechanisms, explaining trade-offs between consistency and availability."

Key signals to document include discussions on API backward compatibility, schema optimization, caching strategies, and fault injection aligned with service-level objectives (SLOs).

Frontend Developer Scorecard Template

Frontend roles require a balance between technical execution and user experience. Scorecards should assess areas like technical craft, architecture, performance optimization, UI/UX, quality, and collaboration.

For performance optimization, strong candidates will demonstrate knowledge of techniques like lazy loading, code splitting, and asset compression. For instance, a standout response might be:

"I implemented route-based code splitting in React, reducing the initial bundle size by 40% and cutting Time to Interactive from 4.2 seconds to 2.1 seconds."

Look for expertise in frameworks (e.g., React, Vue, Angular), responsive design, accessibility (e.g., WCAG compliance), and ensuring UI consistency across different browsers and devices.

Data Engineer and DevOps Scorecard Templates

Data Engineer scorecards should focus on pipeline design, query optimization, system integration, and data modeling. Evaluate how candidates design ETL/ELT workflows, optimize queries for large datasets, manage schema changes, and maintain data quality and lineage tracking.

DevOps and SRE roles prioritize automation, reliability engineering, cloud infrastructure management, and deployment strategies. Key areas to assess include:

- CI/CD pipeline design and maintenance

- Infrastructure-as-code tools like Terraform or CloudFormation

- Deployment strategies, such as blue-green or canary releases

- Automated rollback processes and cross-team collaboration

Summary Table

| Role Type | Core Evaluation Criteria | Key Signals to Look For |

|---|---|---|

| Backend Developer | System Design, API Design, Data Modeling, Performance, Testing | Scalable architecture, backward compatibility, schema optimization, SLOs, fault injection |

| Frontend Developer | Technical Craft, Architecture, Performance, UX, Quality | Framework knowledge, responsive design, asset optimization, accessibility, UI consistency |

| DevOps / SRE | Automation, Reliability, Cloud Infrastructure, Deployment | CI/CD pipeline maintenance, infrastructure-as-code, canary deploys, automated rollbacks |

Adjust the weighting of competencies based on seniority. For example, junior roles might focus more on technical fundamentals (up to 40%), while senior roles should emphasize system design (25%) and collaboration (15%). Regular calibration sessions - where team members score the same sample responses - can help ensure consistency in applying these templates. By tailoring scorecards to the role, you can create a structured and fair evaluation process that measures the skills that matter most.

How to Debrief Using Scorecards Instead of Gut Feel

Using the structured interview rubric mentioned earlier, debrief sessions can shift from subjective impressions to decisions based on clear data. To keep the process unbiased, interviewers should submit their scorecards independently within 30 minutes of the interview. This ensures that no single opinion dominates the discussion and allows for a more balanced evaluation . The structured debrief process builds on initial calibration efforts to promote fair and consistent decision-making.

Start your debrief by displaying all the interviewer ratings side-by-side, rather than diving into general impressions. This method prioritizes data and quickly uncovers both areas of agreement and points of divergence. To calculate an overall score, apply the job-specific weighting system described earlier: multiply each competency rating by its assigned weight to get a weighted total. Many teams establish a minimum weighted threshold - commonly around 70% - to determine whether a candidate moves forward .

Aggregating Scores Across Interviewers

When combining scores from multiple interviewers, focus on identifying trends and outliers. For instance, if most interviewers give a 5 for System Design but one gives a 2, this discrepancy should prompt discussion rather than simply averaging the scores . Analytics can also reveal patterns, such as whether certain interviewers consistently rate candidates higher or lower. If this happens, it may signal the need for recalibration . Once you've spotted these outliers, the next step is to address them by reviewing the evidence provided.

Resolving Score Discrepancies with Evidence

When scores differ significantly, turn to the documented evidence on the scorecards. Ask interviewers to share the specific observations that led to their ratings for a particular competency. This ensures that decisions are based on concrete data rather than averaging conflicting scores .

"Calibration does not imply that every interviewer must assign the same score to an applicant. Instead, it guarantees that when discrepancies arise, they represent meaningful variations in viewpoint, rather than misalignment with the role's requirements." – Elena Bejan, People Culture and Development Director

Extremely high or low ratings should always be backed by detailed examples from the interview . After reviewing the evidence as a group, teams can also set minimum competency rules. For example, candidates scoring below a 2 in critical areas might be disqualified, regardless of their overall weighted score .

Connecting Structured Interviews to Better Offer Acceptance Rates

A thoughtfully crafted technical interview scorecard doesn't just improve hiring decisions - it also increases the likelihood of candidates accepting your job offers. Here's why: 66% of candidates say a positive interview experience significantly influences their decision to accept a job offer . When developers notice that your evaluation process is fair, transparent, and rooted in merit rather than subjective instincts, they begin to trust your company even before an offer is extended. This trust is a direct result of structured evaluation practices that enhance hiring accuracy. The smooth transition from structured debriefs to transparent assessments builds confidence and sets the stage for higher offer acceptance.

Building Candidate Trust Through Transparency

Structured scorecards immediately convey fairness and professionalism. When candidates realize they're being evaluated using consistent, job-relevant criteria instead of subjective opinions, they perceive the process as equitable . This perception matters - a lot. Studies show that rejected candidates who experienced a structured interview process were 35% more satisfied compared to those who went through unstructured interviews . That satisfaction not only shields your employer brand but also encourages referrals from candidates who appreciated the fairness of your approach.

"Structured interviews are one of the best tools we have to identify the strongest job candidates. Not only that, they avoid the pitfalls of some of the other common methods." - Dr. Melissa Harrell, Former Hiring Effectiveness Expert, Google People Analytics

Technical candidates, in particular, value a merit-based approach. When your scorecard zeroes in on measurable outcomes - like system design capabilities or code quality - instead of vague concepts like "culture fit", developers see that you're evaluating what truly matters for the job. This clarity instills confidence in your process and makes your offer more appealing compared to companies relying on subjective evaluations. In turn, this trust enhances candidates' perceptions of your company and speeds up the hiring process.

Minimizing Candidate Drop-Off

In the competitive world of tech hiring, speed is crucial. Structured scorecards streamline decision-making by providing clear, quantitative data and predefined benchmarks. This is vital because 42% of candidates drop out of the hiring process due to delays in scheduling or decision-making . By aggregating weighted scores into a clear decision, your team reduces the time during which top candidates might consider other opportunities.

The combination of fairness and efficiency directly impacts your success. Using pre-designed questions and scoring rubrics saves hiring teams an average of 40 minutes per interview , enabling faster progress without compromising quality. When candidates see that your process is both thorough and respectful of their time, they enter offer discussions with a positive impression of your company - an advantage that can significantly influence acceptance rates.

Conclusion: Implementing Scorecards for Better Hiring Outcomes

Unstructured interviews often lead to inconsistent and biased hiring decisions, but scorecards offer a way to change that. By using technical interview scorecards, hiring managers can shift from subjective judgments to decisions rooted in data. The advantages are hard to ignore: structured interviews are twice as effective at predicting job performance compared to unstructured ones , and organizations that adopt scorecards report a 72% reduction in hiring bias . On top of that, teams experience 70% faster time-to-hire and save approximately $12 million annually per 1,000 hires .

To get started, focus on defining 5–8 key competencies for your next role. Create clear behavioral anchors for each rating level, and ensure interviewers provide evidence-based scores within 30 minutes after the interview . Consider running a calibration session where your team scores the same recorded interview to align on standards before going live. While this setup requires some initial effort, it quickly pays off. You'll reduce reliance on "gut feelings" and make decisions with greater confidence and consistency.

"The scorecard turns interviewing from subjective judgment into a repeatable process." - Dover

If you're looking to enhance your hiring process further, daily.dev Recruiter can help by connecting you with pre-qualified developers through warm, double opt-in introductions. Pairing this sourcing method with a scorecard-based evaluation ensures you not only attract engaged candidates but also create a fair and effective selection process that identifies top talent consistently.

FAQs

How do I choose the right competencies for a scorecard?

When building a candidate evaluation scorecard, it's essential to select 4–6 measurable competencies that directly tie to the most critical outcomes of the role. These should emphasize observable behaviors such as technical expertise, problem-solving abilities, or communication skills.

To ensure clarity and consistency:

- Define Clear Evaluation Criteria: Break each competency into specific, measurable behaviors or outcomes that interviewers can objectively assess.

- Align Interview Questions to Competencies: Craft questions that directly evaluate the selected skills, ensuring candidates have the opportunity to demonstrate their abilities.

- Calibrate Interviewers: Regularly train interviewers to maintain consistent scoring standards, reduce personal biases, and improve the reliability of evaluations.

By keeping the scorecard simple and focused on fairness, you'll create a tool that accurately captures a candidate's performance and suitability for the role.

What’s the best way to calibrate interviewers quickly?

The fastest way to get interviewers on the same page is by using a structured, data-driven approach. Regular calibration sessions are key - they help define role-specific criteria, establish clear rating scales, and specify what evidence interviewers should focus on.

To make the process even smoother, leverage collaborative tools. Shared scorecards and real-time feedback systems can simplify alignment, maintain consistency, and help reduce bias in evaluations.

How do I score culture add without bias?

To evaluate culture add objectively, start by setting clear, role-specific criteria that emphasize measurable skills. For example, consider a candidate’s ability to contribute diverse viewpoints or encourage fresh ideas. Use a standardized rating scale with specific, behavior-based examples to ensure consistency across evaluations.

Interviewers should be trained to focus on evidence rather than gut feelings or personal impressions. Regular calibration sessions can help align scoring methods and minimize biases, such as the tendency to favor candidates who resemble current team members (pattern matching) or being overly influenced by one positive trait (halo effect).

.png)